Database of existential risk estimates

By MichaelA🔸 @ 2020-04-15T12:43 (+130)

This post was written for Convergence Analysis, though the opinions expressed are my own.

This post:

- Provides a spreadsheet you can use for making your own estimates of existential risks (or of similarly “extreme” outcomes)

- Announces a database of estimates of existential risk (or similarly extreme outcomes), which I hope can be collaboratively expanded and updated

- Discusses why I think this database may be valuable

- Discusses some pros and cons of using or making such estimates

Key links relevant to existential risk estimates

- Here’s a spreadsheet listing some key existential-risk-related things people have estimated, without estimates in it.

- The link makes a copy of the spreadsheet so that you can add your own estimates to it.

- I mention this first so that you have the option of providing somewhat independent estimates, before looking at (more?) estimates from others.

- Some discussion of good techniques for forecasting, which may or may not apply to such long-range and extreme-outcome forecasts, can be found here, here, here, here, and here.

- Here’s a database of all estimates of existential risks, or similarly extreme outcomes (e.g., reduction in the expected value of the long-term future), which I’m aware of. I intend to add to it over time, and hope readers suggest additions as well.

- Here's an EAGx talk I gave that's basically a better structured version of this post (as I've now thought about this topic more), though without the links. So you may wish to watch that, and then just skim this post for links.

- The appendix of this article by Beard et al. is where I got many of the estimates from, and it provides more detail on the context and methodologies of those estimates than I do in the database.

- Beard et al. also critically discuss the various methodologies by which existential-risk-relevant estimates have been or could be derived.

- In a separate post, I discuss some pros and cons of using or stating explicit probabilities in general.

Why this database may be valuable

I’d bet that the majority of people reading this sentence have, at some point, seen one or more estimates of extinction risk by the year 2100, from one particular source.[1] These estimates may in fact have played a role in major decisions of yours; I believe they played a role in my own career transition. That source is an informal survey of global catastrophic risk researchers, from 2008.

As Millett and Snyder-Beattie note:

The disadvantage [of that survey] is that the estimates were likely highly subjective and unreliable, especially as the survey did not account for response bias, and the respondents were not calibrated beforehand.

Additionally, it was just one informal survey, and is now 12 years old.[2] So why is it so frequently referenced? And why has it plausibly (in my view) influenced so many people?

I also expect essentially the same pattern to repeat, perhaps for another dozen years, but now with Toby Ord’s recent existential risk estimates. Why do I expect this?

I originally thought the answer to each of these questions was essentially that we have so little else to go on, and the topic is so important. It seemed to me there had just been so few attempts to actually estimate existential risks or similarly extreme outcomes (e.g., extinction risk, reduction in the expected value of the long-term future).

I’d argue that this causes two problems:

- We have less information to inform decisions such as whether to prioritise longtermism over other cause areas, whether to prioritise existential risk reduction over other longtermist strategies, and especially which existential risks to be most concerned about.

- We may anchor too strongly on the very sparse set of estimates we are aware of, and get caught in information cascades.

Indeed, it seems to me less than ideal that people concerned about existential risks (myself included) have so far made so many strategic decisions without first collecting and critiquing a wide array of such estimates.

This second issue seems all the more concerning given the many reasons we have for skepticism about estimates on these matters, such as:

- The lack of directly relevant data (e.g., prior human extinction events) to inform the estimates

- Estimates often being little more than quick guesses

- It often being hard to interpret what is actually being estimated

- The possibility of response bias

- The estimators typically being people who are especially concerned about existential risks, and thus arguably being essentially “selected for” above-average pessimism

- Limited evidence about how trustworthy long-range forecasts in general are, and some evidence that expert forecasts are often unreliable even for events just a few years out (e.g., from Tetlock)

Thus, we may be anchoring on a small handful of estimates which could in fact warrant little trust.

But what else are we to do? One option would be to each form our own, independent estimates. This may be ideal, which is why I opened by linking to a spreadsheet you can duplicate to do that in.

But it seems plausible that “expert” estimates of these long-range, extreme outcomes would correlate better with what the future actually has in store for us than the estimates most of us could come up with, at least without investing a great deal of time into it. (Though I’m certainly not sure of that, and I don’t know of any directly relevant evidence. Plus, Tetlock’s work may provide some reason to doubt the idea that “experts” can be assumed to make better forecasts in general.)

And in any case, most of us have probably already been exposed to a handful of relevant estimates, making it harder to generate “independent” estimates of our own.

So I decided to collect all quantitative estimates I could find of existential risks or other similarly “extreme outcomes”, as this might:

- Be useful for decision-making, because I do think these estimates are likely better than nothing or than just phrases like “plausible”, perhaps by a very large margin.

- Reduce a relatively “blind” reliance on any particular source of estimates, and perhaps even make it easier for people to produce estimates that are somewhat independent. My hope is that providing a variety of different estimates will throw anchors in many directions (so to speak) in a way that somewhat “cancels out”, and that it’ll also highlight the occasionally major discrepancies between different estimates. I do not want you to take these estimates as gospel.

Above, I said “I originally thought the answer to each of these questions was essentially that we have so little else to go on, and the topic is so important.” I still think that’s not far off the truth. But since starting this database, I discovered Beard et al.’s appendix, which has a variety of other relevant estimates. And I wondered whether that meant this database wouldn’t be useful.

But then I realised that many or most of the relevant estimates from that appendix weren’t mentioned in Ord’s book. And it’s still the case that I’ve seen the 2008 survey estimates many times, and never seen most of the estimates in Beard et al.’s appendix (see this post for an indication that I’m not alone in that). And Beard et al. don’t mention Ord’s estimates (perhaps because their article was released only a couple of months before Ord’s book was), nor a variety of other estimates I found (perhaps because they’re from “informal sources” like 80,000 Hours articles).

So I still think collecting all relevant estimates in one place may be useful. And another benefit is that this database can be updated over time, including via suggestions from readers, so it can hopefully become comprehensive and stay up to date.

So please feel free to:

- Make your own estimates

- Take a look through the database as and when you’d find that useful

- Comment in there regarding estimates or details that could be added, corrections if I made any mistakes, etc.

- Comment here regarding whether my rationale for this database makes sense, regarding pros, cons, and best practice for existential risk estimates in general, etc.

This post was sort of inspired by one sentence in a comment by MichaelStJules, so my thanks to him for that. My thanks also to David Kristoffersson for helpful feedback and suggestions on this post, and to Ofer Givoli and Juan García Martínez for comments or suggestions on the database itself.

See also my thoughts on Toby Ord’s existential risk estimates, which are featured in the database.

For example, it’s referred to in these three 80,000 Hours articles. ↩︎

Additionally, Beard et al.’s appendix says the survey had only 13 participants. The original report of the survey’s results doesn’t mention the number of participants. ↩︎

Pablo_Stafforini @ 2020-09-01T12:44 (+10)

An important new aggregate forecast, based on inputs from LW users:

- Aggregated median date: January 26, 2047

- Aggregated most likely date: November 2, 2033

- Earliest median date of any forecast: June 25, 2030

- Latest median date of any forecast: After 2100

MichaelA @ 2020-09-02T08:45 (+2)

Thanks for sharing this here.

For the benefit of other readers: These estimates are about the "Timeline until human-level AGI". The post Pablo linked to describes the methodology behind the estimates.

I don't think this really falls directly under the scope of the spreadsheet (in that this isn't directly an estimate of the chance of a catastrophe), so I haven't added it to the spreadsheet itself, but it's definitely relevant when considering x-risk/GCR estimates.

Do you know if there's a collection of AI timelines forecasts somewhere? I'd imagine there would be?

Pablo_Stafforini @ 2020-09-02T12:50 (+6)

Yes, sorry, I should have made that clearer—I posted here because I couldn't think of a more appropriate thread, and I didn't want to create a separate post (though maybe I could have used the "shortform" feature).

AI Impacts has a bunch of posts on this topic. This one discusses what appears to be the largest dataset of such predictions in existence. This one lists several analyses of time to human-level AI. And this one provides an overview of all their writings on AI timelines.

MichaelA @ 2020-09-02T14:10 (+4)

I think sharing that link in a comment here made sense; it's definitely relevant in relation to this topic. I was just flagging that I didn't think it made sense to add it to the spreadsheet itself.

But I think a similar spreadsheet to collect AI timelines estimates seems valuable (though I haven't thought much about information/attention hazard considerations in relation to that). So thanks for linking to that AI Impacts post.

I've now sent AI Impacts a message asking whether they'd considered making it easy for people to comment on that dataset with links to additional predictions. So perhaps they'll do so, or perhaps they'll decide not to for good reasons.

amandango @ 2020-09-07T19:29 (+9)

Thank you for putting this spreadsheet database together! This seemed like a non-trivial amount of work, and it's pretty useful to have it all in one place. Similar to other comments, seeing this spreadsheet really made me want more consistent questions and forecasting formats such that all these people can make comparable predictions, and also to see the breakdowns of how people are thinking about these forecasts (I'm very excited about people structuring their forecasts more into specific assumptions and evidence).

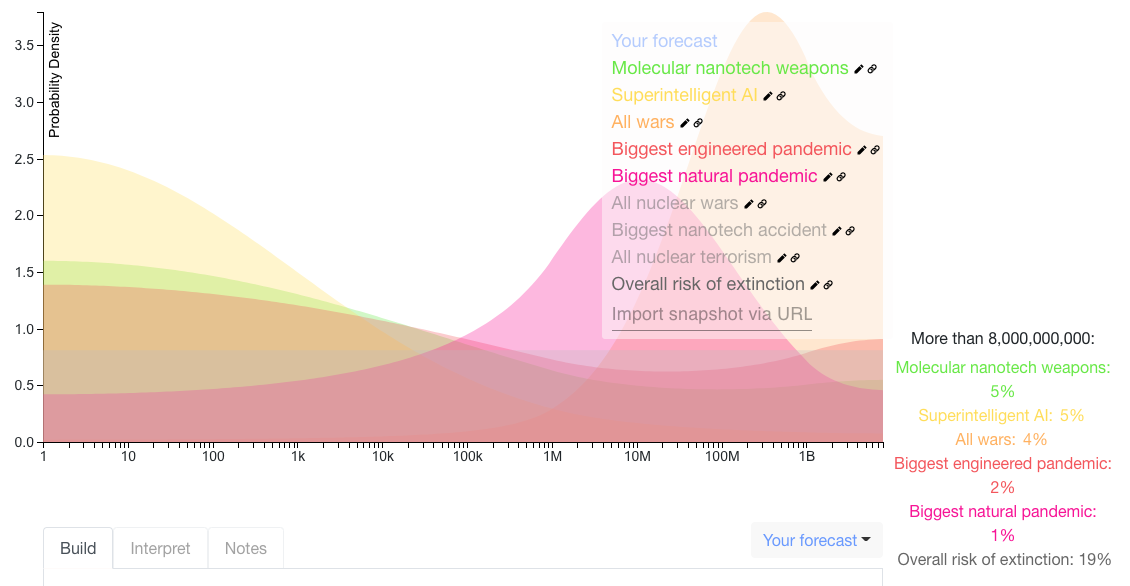

I thought the 2008 GCR questions were really interesting, and plotted the median estimates here. I was surprised by / interested in:

- How many more deaths were expected from wars than other disaster scenarios

- For superintelligent AI, most of the probability mass was < 1M deaths, but there was a high probability (5%) on extinction

- A natural pandemic was seen as more likely to cause > 1M deaths than an engineered pandemic (although less likely to cause > 1B deaths)

(I can't figure out how to make the image bigger, but you can click the link here to see the full snapshot)
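The survey questions give the probability of an outcome at or beyond each severity level (> 1M deaths, > 1B deaths, extinction), so plotting them as a distribution involves converting "at least this bad" probabilities into mass per interval. Below is a minimal Python sketch of that arithmetic. The function name and the inputs are hypothetical: only the 5% extinction figure echoes the superintelligent-AI median mentioned above, and this is not necessarily how the plot was produced.

```python
def exceedance_to_bins(levels):
    """Convert P(outcome at least this severe) values into probability per bin.

    `levels` is a list of (label, probability) pairs ordered from least to
    most severe; each probability is the chance of that outcome *or worse*.
    """
    labels = [label for label, _ in levels]
    probs = [p for _, p in levels]
    # "At least this bad" probabilities can only shrink as severity grows.
    if any(later > earlier for earlier, later in zip(probs, probs[1:])):
        raise ValueError("exceedance probabilities must not increase with severity")

    bins = {f"below {labels[0]}": 1.0 - probs[0]}
    for i, label in enumerate(labels):
        if i + 1 < len(levels):
            # Mass between this threshold and the next, more severe one.
            bins[f"{label} to {labels[i + 1]}"] = probs[i] - probs[i + 1]
        else:
            # The most severe level keeps all of its exceedance mass.
            bins[label] = probs[i]
    return bins


# Hypothetical inputs for a single disaster scenario.
example = exceedance_to_bins([("1M deaths", 0.10), ("1B deaths", 0.07), ("extinction", 0.05)])
for name, mass in example.items():
    print(f"{name}: {mass:.2f}")
```

The bins always sum to 1, and a scenario can have more mass in a bin than another scenario while having less beyond the next threshold, which is the pattern noted for natural versus engineered pandemics.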

MichaelA @ 2020-09-07T19:56 (+3)

Also, your comment suggests you already saw my comment quoting Beard et al. on breaking down the thought process behind forecasts. You might also be interested in Baum's great paper replying to and building on Beard et al.'s paper. I also commented on Baum's paper here, and Beard et al. replied to it here.

amandango @ 2020-09-07T21:00 (+1)

Oh this looks really interesting, I'll check it out, thanks for linking!

MichaelA @ 2020-09-07T19:51 (+2)

Thanks for your comment!

Yes, I share that desire for more comparability in predictions and more breakdowns of what's informing one's predictions. Though I'd also highlight that the predictions are often not even very clear in what they're about, let alone very comparable or clear in what's informing them. So clarity in what's being predicted might be the issue I'd target first. (Or one could target multiple issues at once.)

And your comments on and graph of the 2008 GCR conference results are interesting. Are the units on the y axis percentage points? E.g., is that indicating something like a 2.5% chance superintelligent AI kills 1 or fewer people? I feel like I wouldn't want to extrapolate that from the predictions the GCR researchers made (which didn't include predictions for 1 or fewer deaths); I'd guess they'd put more probability mass on 0.

amandango @ 2020-09-07T20:55 (+1)

The probability of an interval is the area under the graph! Currently, it's set to 0% that any of the disaster scenarios kill < 1 people. I agree this is probably incorrect, but I didn't want to make any other assumptions about points they didn't specify. Here's a version that explicitly states that.

MichaelA @ 2020-09-08T07:04 (+2)

The probability of an interval is the area under the graph!

It's not obvious to me how to interpret this without specifying the units on the y axis (percentage points?), and when the x axis is logarithmic and in units of numbers of deaths. E.g., for the probability of superintelligent AI killing between 1 and 10 people, should I multiply ~2.5 (height along the y axis) by ~10 (length along the x axis) and get 25%? But then I'll often be multiplying the height by more than 100 and getting insane probabilities?

So at the moment I can make sense of which events are seen as more likely than other ones, but not the absolute likelihood they're assigned.

I may be making some basic mistake. Also feel free to point me to a pre-written guide to interpreting Elicit graphs.
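One reading that reconciles "area under the graph" with a logarithmic x axis is that the plotted height is a probability density per unit of log10(deaths), so the interval from 1 to 10 deaths is one decade wide rather than nine people wide. The Python sketch below works under that assumption, which has not been checked against how Elicit actually scales its plots; the function name and the heights are hypothetical.

```python
import math


def interval_probability(heights, lo, hi):
    """Probability that deaths fall in [lo, hi] under a piecewise-constant density.

    Assumption: `heights[k]` is probability per unit of log10(deaths) on the
    decade [10**k, 10**(k + 1)), so widths are measured in decades, not deaths.
    """
    a, b = math.log10(lo), math.log10(hi)
    total = 0.0
    for k, height in heights.items():
        # Length (in decades) of the overlap between [a, b] and [k, k + 1].
        overlap = max(0.0, min(b, k + 1) - max(a, k))
        total += height * overlap
    return total


# Hypothetical: a height of 0.025 (2.5% per decade) on the 1-10 deaths decade.
heights = {0: 0.025}
print(interval_probability(heights, 1, 10))  # → 0.025
```

Under this reading, an interval covering 100 times more deaths is only two decades wide, so the areas stay bounded and the "insane probabilities" do not arise.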

David_Kristoffersson @ 2020-04-16T10:47 (+7)

I think this is an excellent initiative, thank you, Michael! (Disclaimer: Michael and I work together on Convergence.)

An assortment of thoughts:

- More and more studious estimates of x-risks seem clearly very high value to me, given how much the likelihood of risks and events affects priorities and how much the quality of the estimates affects our communication about these matters.

- More estimates should generally increase our common knowledge of the risks, and individually, if people think about how to make these estimates, they will reach a deeper understanding of the questions.

- Breaking down the causes of one's estimates is generally valuable. It allows one to improve one's estimates, understanding of causation, and to discuss them in more detail.

- More estimates can be bad if low quality estimates swamp out better quality ones somehow.

- Estimates building on new (compared to earlier estimates) sources of information are especially interesting. Independent data sources increase our overall knowledge.

- I see space for someone writing an intro post on how to do estimates of this type better. (Scott Alexander's old posts here might be interesting.)

MichaelA @ 2020-04-16T12:20 (+5)

I strongly agree about the value of breaking down the causes of one's estimates, and about estimates building on new sources of info being particularly interesting. And I tentatively agree with your other points.

Two things I'd add:

- Beard et al. have an interesting passage relevant to the idea of "Breaking down the causes of one's estimates":

Another approach is what Tonn and Stiefel refer to as ‘Evidential Reasoning’. This involves specifying the effect every piece of evidence has on one’s beliefs about the survival of humanity. Importantly, these probabilities should only reflect the change that this evidence makes, not one’s initial prior beliefs, allowing others to assess them independently. As such, they will only be ‘imprecise probabilities’ that describe a small portion of the overall probability space, where the contribution each piece of evidence makes to one’s belief and its complement need not sum to 1. For instance, one might reason that evidence about the adaptability of humans to environmental changes suggests a 30% probability that we will survive the next 1000 years, but only a 10% probability that we will not. Combination functions can then be used to aggregate these imprecise probabilities to return the overall probability of extinction within this period. This method not only helps assessors determine the probability of extinction, but also provides others with information about the sources of evidence that contributed to this decision and the opportunity to determine how additional information might affect this.

- I think an intro post on how to do estimates of this type better could be valuable. I also think it would likely benefit by drawing on the insights in (among other things) the sources I linked to in this sentence: "Some discussion of good techniques for forecasting, which may or may not apply to such long-range and extreme-outcome forecasts, can be found here, here, here, here, and here." And Beard et al. is also relevant, though much of what it covers might be hard for individual forecasters to implement with low effort.
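Beard et al. don't say which combination function Tonn and Stiefel use. As one illustration, Dempster's rule of combination from Dempster-Shafer theory (swapped in here as an assumption, not as Tonn and Stiefel's method) can aggregate imprecise probabilities like the 30%/10% example, with the remaining 60% left uncommitted. The second piece of evidence below is hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Mass:
    """Belief masses over the frame {survive, extinct}; `either` is uncommitted mass."""
    survive: float
    extinct: float
    either: float

    def __post_init__(self):
        total = self.survive + self.extinct + self.either
        if abs(total - 1.0) > 1e-9 or min(self.survive, self.extinct, self.either) < 0:
            raise ValueError("masses must be non-negative and sum to 1")


def combine(a: Mass, b: Mass) -> Mass:
    """Dempster's rule of combination for a two-outcome frame."""
    # Conflict: one source commits to survival while the other commits to extinction.
    conflict = a.survive * b.extinct + a.extinct * b.survive
    if conflict >= 1.0:
        raise ValueError("totally conflicting evidence cannot be combined")
    norm = 1.0 - conflict
    survive = (a.survive * b.survive + a.survive * b.either + a.either * b.survive) / norm
    extinct = (a.extinct * b.extinct + a.extinct * b.either + a.either * b.extinct) / norm
    either = a.either * b.either / norm
    return Mass(survive, extinct, either)


adaptability = Mass(survive=0.3, extinct=0.1, either=0.6)  # the 30%/10% example quoted above
second = Mass(survive=0.2, extinct=0.2, either=0.6)  # hypothetical second piece of evidence
combined = combine(adaptability, second)
# Belief is the mass committed to extinction; plausibility adds the uncommitted mass.
print(f"extinction: belief {combined.extinct:.3f}, plausibility {combined.extinct + combined.either:.3f}")
# → extinction: belief 0.217, plausibility 0.609
```

The result is an interval rather than a single number, which keeps visible how much of the probability space the evidence leaves unaddressed. Dempster's rule renormalises away conflicting mass, a contested design choice; other combination rules would give different numbers.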

MichaelA @ 2021-12-13T08:12 (+6)

I don't have time to write a detailed self-review, but I can say that:

- I still think this is one of my most useful posts; I think it's pretty obvious that something like this should exist, and I recommend it often, and I see it cited/recommended often.

- I think it's kind of crazy that nothing very much like this database existed before I made it, especially given how simple making it was.

- Note that I made this without spending a huge amount of time on it, and while I was still very "junior"; I was within 4 months of posting my first EA Forum post and within 2 years of having first learned of EA. So it really seems like other people could've done it too!

- I had already noted earlier in 2020 that there seemed to be many low-hanging fruit in the form of making summaries and collections of EA-relevant stuff, and that therefore I'd encourage people to try making such things. I think this database not existing till I made it is a good example of that.

- I've also continued to come across more examples of that since making this database (see here for an example and links to some other examples).

- I've recently realised various ways this database could be improved, which are essentially exemplified in a "Database of nuclear risk estimates" I'm now working on. Please contact me if you might be interested in making a copy of this database that includes these improvements I've now thought of. In that case, I could probably share with you my in-progress nuclear risk database and an accompanying doc & slideshow (so you see what improvements I'm talking about), have a call if useful, review your new version of this database once you've drafted it, and then replace links to this database with a link to the improved version you make (with you credited, of course).

- Though I reserve the right to back out of that arrangement if there turns out to be a good reason to.

- If you'd want to be paid for this, let me know; I could probably promise to pay you if you do a really good job and it doesn't take up too much of my time.

- EDIT: I think the post itself was a bit too meandering and self-doubting. I structured my thoughts better in my later lightning talk on roughly the same subject. Here's a transcript.

Pablo_Stafforini @ 2020-07-21T15:13 (+6)

Not in your database, I think:

“Personally, I now think we humans will be wiped out this century,” Frank Tipler told me. He may be the most pessimistic of Bayesian doomsayers, followed by Willard Wells (who gives the same time frame for the end of civilization and the beginning of the postapocalypse).

William Poundstone, The Doomsday Calculation, p. 259

MichaelA @ 2020-07-21T23:23 (+2)

Thanks for mentioning this; it indeed wasn't in the database, and I've now added it.

Though I felt a bit hesitant about doing so, and haven't filled in the "What is their estimate?" column; I left this just as a quote.

We could interpret Tipler as saying "I now think there's a greater than 50% chance humans will be wiped out this century". But when people just say things like "I think X will happen" or "I think X won't happen", I don't feel super confident that they really meant "greater than 50%" or "less than 50%". For example, it seems plausible they just mean something like "I think we should pay more [or less] attention to the chance of X".

Also, when people don't give quantitative estimates, I feel a little less confident that they've thought for more than a few seconds about (a) precisely what they're predicting (e.g., does Tipler really mean extinction, or just the end of civilization as we know it?), and (b) how likely they really think the thing is. I don't feel super confident people have thought about (a) and (b) when they give quantitative predictions either, and indeed it's unclear what many predictions in the database are really about, and many of the predictors explicitly say things like "but this is quite a made up number and I'd probably say something different if you asked me again tomorrow". But I feel like people might tend to take their statements even less seriously when those statements remain fully qualitative.

(I could be wrong about all of that, though.)

Risto_Uuk @ 2021-02-24T11:39 (+4)

Thanks for this database! I'm currently working on a project for the Foresight Centre (a think-tank at the Estonian parliament) about existential risks and the EU’s role in reducing them. I cover risks from AI, engineered pandemics, and climate change. For each risk, I discuss possible scenarios, probabilities, and the EU’s role. I've found a couple of sources from your database on some of these risks that I hadn't seen before.

MichaelA @ 2021-02-26T02:39 (+3)

That sounds like a really valuable project! Glad to hear this database has been helpful in that.

I cover risks from AI, engineered pandemics, and climate change.

Do you mean that those are the only existential risks the Foresight Centre's/Estonian Parliament's work will cover (rather than just that those are the ones you're covering personally, or the ones that are being covered at the moment)? If so, I'd be interested to hear a bit about why? (Only if you're at liberty to discuss that publicly, of course!)

I ask because, while I do think that AI and engineered pandemics are probably the two biggest existential risks, I also think that other risks are noteworthy and probably roughly on par with climate change. I have in mind in particular "unforeseen" risks/technologies, nuclear weapons, nanotechnology, stable and global authoritarianism, and natural pandemics. I'd guess that it'd be hard to get buy-in for discussing nanotechnology, authoritarianism, and maybe unforeseen risks/technologies in the sort of report you're writing, but not too hard to get buy-in for discussing nuclear weapons and natural pandemics.

Risto_Uuk @ 2021-02-26T15:50 (+3)

Existential risks are not something they have worked on before, so my project is a new addition to their portfolio. I didn't mention this but I intend to have a section for other risks depending on space. The reason climate change gets prioritized in the project is that arguably the EU has more of a role to play in climate change initiatives compared to, say, nuclear risks.

MichaelA @ 2021-02-26T23:49 (+3)

Makes sense!

I imagine having climate change feature prominently could also help get buy-in for the whole project, since lots of people already care about climate change and it could be highlighted to them that the same basic reasoning arguably suggests they should care about other existential risks as well.

FWIW, I'd guess that the EU could do quite a bit on nuclear risks. But I haven't thought about that question specifically very much yet, and I'd agree that they can tackle a larger fraction of the issue of climate change.

WilliamKiely @ 2021-02-24T03:49 (+4)

You may very well already be aware of this (I didn't look at the post or comments too closely), but Elicit IDE has a "Search Metaforecast database" tool to search forecasts on several sites that may be helpful to your existential risk forecast database project. Here are the first 120 results for "existential risk."

MichaelA @ 2021-02-26T02:42 (+3)

Thanks for sharing that!

From a very quick look, I think that most of the things in that spreadsheet are either already in this database, only somewhat relevant (e.g., general AI capabilities questions), or hardly relevant at all. But I wouldn't be surprised if some fiddling around with that tool could narrow the results down to be more useful (I haven't tried the tool yet), or if it becomes more useful in future.

ofer @ 2020-04-17T08:44 (+3)

Interesting project! I especially like that there's a sheet for conditional estimates (one can argue that the non-conditional estimates are not directly decision-relevant).

MichaelA @ 2020-04-17T10:23 (+2)

Thanks for the feedback! And for your helpful suggestions in the spreadsheet itself.

Could you expand on what you mean by "one can argue that the non-conditional estimates are not directly decision-relevant"?

It does seem that, holding constant the accuracy of different estimates, the most decision-relevant estimates would be things like pairings of "What's the total x-risk this century, conditional on the EA community doing nothing about it" with "What's the total x-risk this century, conditional on a decent fraction of EAs dedicating their careers to x-risk reduction".

But it still seems like I'd want to make different decisions if I had reason to believe the risks from AI are 100 times larger than those from engineered pandemics, compared to if I believed the inverse. Obviously, that's not the whole story - it ignores at least tractability, neglectedness, and comparative advantage. But it does seem like a major part of the story.

ofer @ 2020-04-17T14:23 (+1)

But it still seems like I'd want to make different decisions if I had reason to believe the risks from AI are 100 times larger than those from engineered pandemics, compared to if I believed the inverse.

Agreed, but I would argue that in this example, acquiring that belief is consequential because it makes you update towards the estimate: "conditioned on us not making more efforts to mitigate existential risks from AI, the probability of an AI related existential catastrophe is ...".

I might have been nitpicking here, but just to give a sense of why I think this issue might be relevant:

Suppose Alice, who is involved in EA, tells a random person Bob: "there's a 5% chance we'll all die due to X". What is Bob more likely to do next: (1) convince himself that Alice is a crackpot and that her estimate is nonsense; or (2) accept Alice's estimate, i.e. accept that there's a 5% chance that he and everyone he cares about will die due to X, no matter what he and the rest of humanity will do. (Because the 5% estimate already took into account everything that Bob and the rest of humanity will do about X.)

Now suppose instead that Alice tells Bob "If humanity won't take X seriously, there's a 10% chance we'll all die due to X." I suspect that in this scenario Bob is more likely to seriously think about X.

MichaelA @ 2020-04-18T02:35 (+1)

I think I see your reasoning. But I might frame things differently.

In your first scenario, if Alice sees Bob seems to be saying "Oh, well then I've just got to accept that there's a 5% chance", she can clarify "No, hold up! That 5% was my unconditional estimate. That's what I think is going to happen. But I don't know how much effort people are going to put in. And you and I can help decide what the truth is on that - we can contribute to changing how much effort is put in. My estimate conditional on humanity taking this somewhat more seriously than I currently expect is a 1% chance of catastrophe, and conditional on us taking it as seriously as I think we should, the chance is <0.1%. And we can help make that happen."

See also a relevant passage from The Precipice, which I quote here.

Also, I think I see the difference between unconditional and conditional on us doing more than currently expected as more significant than the difference between unconditional and conditional on us doing less than currently expected. For me, the unconditional estimate is relevant mainly because it updates me towards beliefs like "There are probably low-hanging fruit for reducing this risk", or "conditioned on us making more efforts to mitigate existential risks from AI than currently expected, the probability of an AI related existential catastrophe is [notably lower number]."

Sort-of relevant to the above: "If humanity won't take X seriously, there's a 10% chance we'll all die due to X" is consistent with "Even if humanity does take X really really seriously, there's still a 10% chance we'll all die due to X." So in either case, you plausibly might need to clarify what you mean and what the alternative scenarios are, depending on how people react.
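The link between an unconditional estimate and conditional ones can be made explicit with the law of total probability: the unconditional figure is a weighted average of conditional estimates over scenarios for how much effort humanity puts in. A minimal Python sketch follows. The scenario weights and the 6% "expected effort" risk are hypothetical, chosen only so the total lands near Alice's 5%; the 1% and 0.1% figures echo her conditional estimates, using 0.1% as an upper value for her "<0.1%".

```python
def unconditional_risk(scenarios):
    """Law of total probability: sum of P(scenario) * P(catastrophe | scenario)."""
    total_weight = sum(weight for weight, _ in scenarios.values())
    if abs(total_weight - 1.0) > 1e-9:
        raise ValueError("scenario probabilities must sum to 1")
    return sum(weight * risk for weight, risk in scenarios.values())


# (P(scenario), P(catastrophe | scenario)); all weights are hypothetical.
scenarios = {
    "effort roughly as currently expected": (0.80, 0.06),
    "somewhat more effort than expected": (0.15, 0.01),
    "as much effort as we should make": (0.05, 0.001),
}
print(round(unconditional_risk(scenarios), 3))  # → 0.05
```

Shifting weight toward the higher-effort scenarios lowers the unconditional figure, which is the sense in which a 5% unconditional estimate is not fixed regardless of what Bob and others do.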

ofer @ 2020-04-18T05:30 (+1)

In your first scenario, if Alice sees Bob seems to be saying "Oh, well then I've just got to accept that there's a 5% chance"

Maybe a crux here is what fraction of people in the role of Bob would instead convince themselves that the unconditional estimate is nonsense (due to motivated reasoning).

MichaelA @ 2020-04-16T12:25 (+3)

Another thought: If you do make your own existential risk estimates (whether or not you use the template I link to for that), feel free to "comment" a link to them in the main database. And if you do so, perhaps consider noting somewhere how you came up with those estimates, which prior estimates you believe you'd seen already, whether you had those in mind when you made your estimates, etc.

It seems to me that it could be valuable to pool together new estimates from the "general EA public" and track how "independent" they seem likely to be (though I'm unsure about how valuable that'd be).
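If such estimates are pooled, the aggregation method matters. One commonly used choice (not something this post proposes) is the geometric mean of odds, which averages forecasters' log-odds; the Python sketch below shows it next to the plain arithmetic mean, with hypothetical inputs. Neither method accounts for how "independent" the estimates are, which would still need to be tracked separately.

```python
import math
from statistics import mean


def geometric_mean_of_odds(probs):
    """Pool probabilities by averaging log-odds, then converting back."""
    if any(not 0.0 < p < 1.0 for p in probs):
        raise ValueError("probabilities must be strictly between 0 and 1")
    log_odds = [math.log(p / (1.0 - p)) for p in probs]
    pooled_odds = math.exp(mean(log_odds))
    return pooled_odds / (1.0 + pooled_odds)


# Hypothetical estimates of the same event from four people.
estimates = [0.01, 0.05, 0.10, 0.20]
print(f"arithmetic mean: {mean(estimates):.3f}")
print(f"geometric mean of odds: {geometric_mean_of_odds(estimates):.3f}")
```

The two methods can differ noticeably when estimates span orders of magnitude, as existential risk estimates often do, so it seems worth stating which one a pooled figure uses.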

Davidmanheim @ 2020-04-17T14:47 (+6)

It seems to me that it could be valuable to pool together new estimates from the "general EA public"

I think this is basically what Metaculus already does.

(But the post seems good / useful.)

MichaelA @ 2020-04-18T02:26 (+1)

Good point.

The relevant questions I'm aware of are this and these. So I guess I'd encourage people to answer those as well. Those questions are also linked to in the database already, so answers there would contribute to the pool here. And I believe people could comment there to explain their reasoning, what estimates they'd previously seen, etc.

There are more questions I'd be interested in estimates for which aren't on Metaculus at the moment (e.g., 100% rather than >95%, dystopia rather than other risks). But I imagine they could easily be put up by someone. (I'm not very familiar with the platform yet.)

TeddyW @ 2022-12-20T13:27 (+2)

There is an author who has done a top-down analysis using a modification of Gott's estimator: Willard H. Wells, Prospects for Human Survival. It is grounded in numerical methods. He also has an earlier treatment, Apocalypse When?: Calculating How Long the Human Race Will Survive.

His methods are more refined than any I have checked in your table.
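For readers unfamiliar with it, the unmodified version of Gott's delta-t argument assumes we observe humanity at a uniformly random point in its total lifespan. The Python sketch below implements only that plain version, not Wells's refinement, which the comment doesn't describe. The 200,000-year figure is a rough illustrative assumption for the age of our species.

```python
def gott_interval(t_past, confidence=0.95):
    """Gott's delta-t bounds on remaining duration.

    Assumes the fraction of the total lifespan already elapsed,
    t_past / (t_past + t_future), is uniform on (0, 1).
    """
    lower = t_past * (1 - confidence) / (1 + confidence)
    upper = t_past * (1 + confidence) / (1 - confidence)
    return lower, upper


def prob_ends_within(t_past, horizon):
    """P(t_future <= horizon) under the same uniform assumption."""
    return horizon / (t_past + horizon)


t_past = 200_000  # years; rough illustrative figure for the age of Homo sapiens
low, high = gott_interval(t_past)
print(f"95% interval for remaining years: {low:,.0f} to {high:,.0f}")  # t_past/39 to 39*t_past
print(f"P(ending within 100 years): {prob_ends_within(t_past, 100):.5f}")
```

Because this estimator uses only elapsed time, it ignores everything specific about present-day risks, which is one reason refinements like Wells's exist.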

MichaelA @ 2021-05-29T15:59 (+2)

If I wrote this post again, I would probably include brief discussion somewhere of hedge drift, and perhaps memetic downside risk. I think one downside of this database is that it could induce those things.

Quoting from the first link, hedge drift refers to cases where:

The originator of [a] claim adds a number of caveats and hedges to their claim [e.g., “somewhat”, qualifications of where the claim doesn’t hold], which makes it more defensible, but less striking and sometimes also less interesting. When others refer to the same claim, the caveats and hedges gradually disappear, however.

Relatedly and somewhat unfortunately, this database draws more attention to the numbers people gave than to the precise phrasing of their statements and (especially) the context surrounding those statements.

MichaelA @ 2020-09-24T07:24 (+2)

There's currently an active thread on LessWrong for making forecasts of existential risk across the time period from now to 2120. Activity on that thread is already looking interesting, and I'm hoping more people add their forecasts, their reasoning, and questions/comments to probe and improve other people's reasoning.

I plan to later somehow add to this database those forecasts, an aggregate of them, and/or links.

MichaelA @ 2020-06-19T23:53 (+2)

Since I'm now linking to this post decently often, I'll mention a couple more sources that are somewhat relevant to the pros and cons of explicit, quantitative estimates, and best practices for making them. (But note that these sources aren't about existential risk estimates in particular.)

- How to Measure Anything - Douglas Hubbard

- Perhaps one of the most useful books I've read.

- Luke Muehlhauser provides an excellent summary of key points. I recommend reading that, then feeling inspired to get the full book, then doing so.

- Sequence thinking vs. cluster thinking - Holden Karnofsky

I'll probably add to this list over time. And see also the various sources linked to in this post itself.