OpenAI from Non-profit to deal with the U.S. Department of War

By Nick @ 2026-02-28T10:31 (+94)

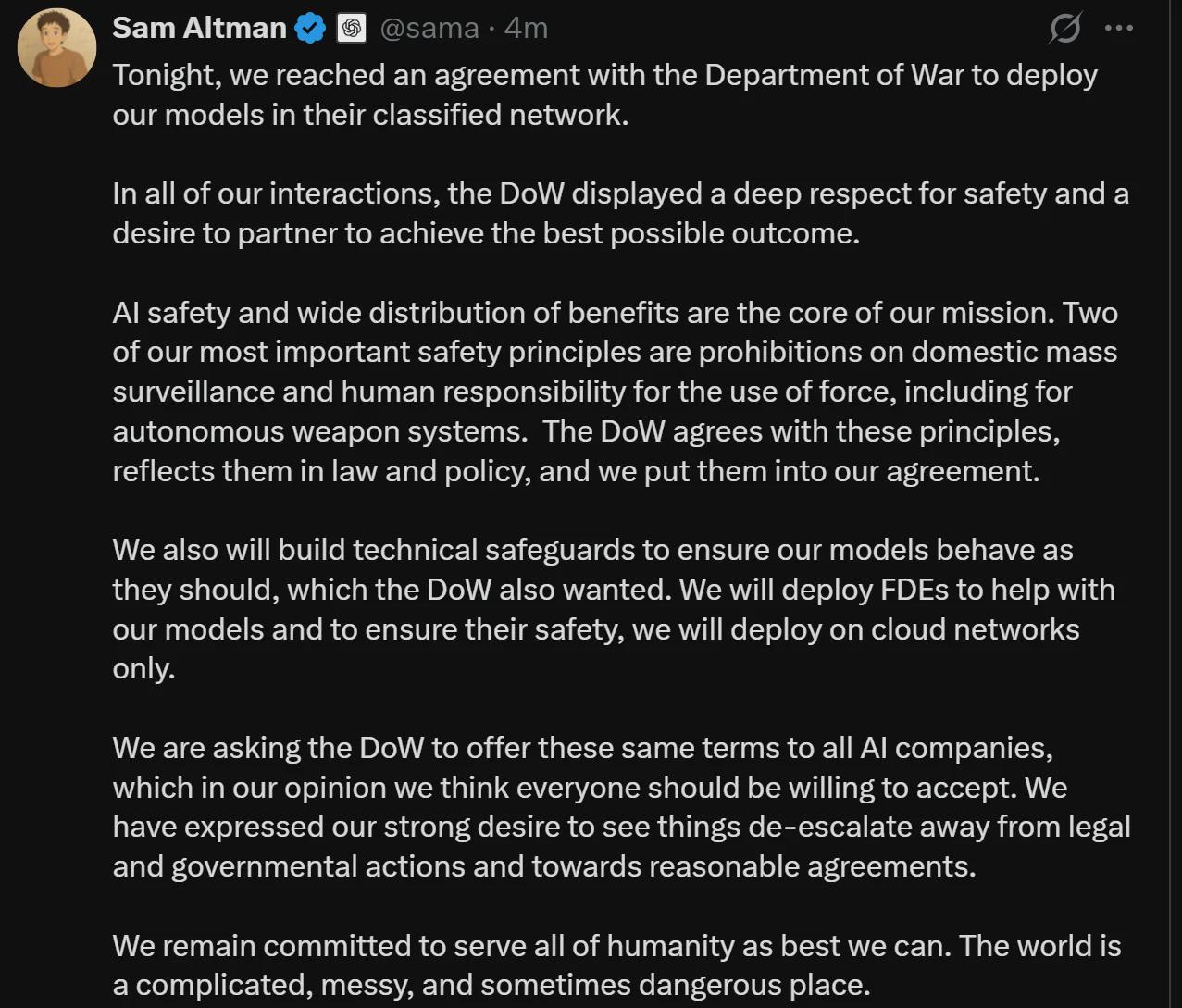

OpenAI's new deal to deploy AI models on the U.S. Department of War's classified network:

I think I’m not alone in this assessment, but @80,000 Hours should remove all OpenAI roles from their job board and amend any articles they’ve published recommending working at OpenAI.

We had these discussions before on the forum:

Kestrel🔸 @ 2026-02-28T18:26 (+51)

https://80000hours.org/2015/08/what-are-the-10-most-harmful-jobs/

Number 6: Weapons research

Weapons researchers develop new ways of waging war. While in some cases new weapons will be more targeted and less harmful than the old ones, in general we expect this work to be very bad for the world. Over the medium term new weapons technologies become widely disseminated and so both sides end up being able to use them. Usually this makes any remaining wars more destructive.

Furthermore, weapons researchers are among the most likely to accidentally design new technologies that could be used to accidentally destroy humanity, such as nuclear or biological weapons in the past. We just don’t know what new opportunities for mass destruction are available, and we are probably better off not knowing.

NickLaing @ 2026-02-28T21:03 (+11)

I agree. For me personally (I don't speak for others), this is an important moment for how I perceive EA orgs like 80k. Even if earlier there may have been some decent arguments for working within OpenAI, I think now it's close to indefensible. I'm hoping for a bunch of public statements and clear position changes in the next week or so.

Dicentra @ 2026-02-28T23:36 (+4)

I think the basic argument that there's a good chance that OpenAI creates an ASI, and so it's important that they have a good safety team, remains very strong. I think for a long time the case for working at OpenAI has not been that we ought to trust the company or agree with most of its policy decisions. It's that they might create the most powerful entity that has ever existed, and making that go better is high EV.

NickLaing @ 2026-03-01T07:02 (+24)

Is that the argument? I've never seen that written on any org website or in an official announcement.

If that is the main argument, then I think, especially given the current fraught situation, it needs to be made explicit so we can have real discussions about whether that's a good idea or not. I would feel better if the 80k site said something like:

1. "There's a good chance that OpenAI creates an ASI, and so it's important that they have a good safety team."

2. OpenAI has repeatedly proved to be untrustworthy, so if you work there you will need to have strong moral resistance to much of the rhetoric you will hear from inside the company. Otherwise there's a high chance you will forget the reason you joined and cease to be an effective worker for AI safety.

3. You'll need to go in with eyes wide open: working inside an org where you disagree with many of its objectives and can't share all your intentions will be emotionally and psychologically taxing.

I didn't do the best job there but you get the idea.

Also, my objections aren't only about trusting the company or its decisions. They're about the company repeatedly proving itself to be a bad actor and having done a series of bad things: from sacking their EA influence, to dissolving the initial non-profit founders' intent, to now signing up to operate mass surveillance and autonomous weapons. I think the problem with this company is far bigger than policy and intentions.

Alex Parry @ 2026-03-01T17:43 (+15)

I massively agree with this. If people are still determined to work within OpenAI, then they should probably have a proven track record of strong emotional and moral resilience within hostile organisations/settings, and strong enough interpersonal skills to build internal networks of aligned parties willing to speak up at key times.

I mean this with genuine care, but most machine learning engineers (and comp sci folks in general) do not fit this bill. I realise that's a generalisation, but it should be somewhat self-evident that the type of person who has spent most of their life in academia, enjoys hours of solo computer time and loves complex mathematics probably isn't simultaneously a charismatic, relationally dynamic political machine.