Third-wave AI safety needs sociopolitical thinking

By richard_ngo @ 2025-03-27T00:55 (+84)

This is a linkpost to https://www.youtube.com/watch?v=6sZhpTHhJZ8&list=PLwp9xeoX5p8M97UqjY0cP20mwpYipJVxZ&index=9

This is a crosspost, probably from LessWrong. Try viewing it there.

David Mathers🔸 @ 2025-03-27T08:22 (+119)

Because you are so strongly pushing a particular political perspective on Twitter (roughly, "tech right = good"), I worry that your bounties are mostly just you paying people to say things you already believe about those topics. Insofar as you mean to persuade people on the left/centre of the community to change their views on these topics, maybe it would be better to do something like make the bounties conditional on people who disagree with your takes finding that the investigations move their views in your direction.

I also find the use of the phrase "such controversial criminal justice policies" a bit rhetorically dark-artsy and mildly incompatible with your calls for high intellectual integrity. It implies that a strong reason to be suspicious of Open Phil's actions has been given. But you don't really think the mere fact that a political intervention on an emotive, polarized topic is controversial is particularly informative about it. Everything on that sort of topic is controversial, including the negation of the Open Phil view on the US incarceration rate. The phrase would be OK if you were taking a very general view that we should be agnostic on all political issues where smart, informed people disagree. But you're not doing that; you take lots of political stances in the piece. De-regulatory libertarianism, the claim that environmentalism has been net negative, and Dominic Cummings can all accurately be described as "highly controversial".

Maybe I am making a mountain out of a molehill here. But I feel like rationalists themselves often catastrophise fairly minor slips into dark arts like this as strong evidence that someone lacks integrity. (I wouldn't say anything as strong as that myself; everyone does this kind of thing sometimes.) And I feel like if the NYT referred to AI safety as "tied to the controversial rationalist community" or to "highly controversial blogger Scott Alexander" you and other rationalists would be fairly unimpressed.

More substantively (maybe I should have started with this, as it is a more important point), I think it is extremely easy to imagine the left/Democrat wing of AI safety becoming concerned with AI concentrating power, if it hasn't already. The entire techlash from left-leaning tech critics (anti-"surveillance capitalism", "the algorithms push extremism") is, ostensibly at least, about the fact that a very small number of very big companies have acquired massive amounts of unaccountable power to shape political and economic outcomes. More generally, the American left has, I keep reading, been on a big anti-trust kick recently. The explicit point of anti-trust is to break up concentrations of power. (Regardless of whether you think it actually does that, that is how its proponents perceive it. They also tend to see it as "pro-market"; remember that Warren used to be a libertarian Republican before she was on the left.) In fact, Lina Khan's desire to do anti-trust stuff to big tech firms was probably one cause of Silicon Valley's rightward shift.

It is true that most people with these sorts of views are currently very hostile to even the left wing of AI safety, but lack of concern about X-risk from AI isn't the same thing as lack of concern about AI concentrating power. And eventually the power of AI will be so obvious that even these people will have to concede that it is not just fancy autocorrect.

It is not true that all people with these sorts of concerns only care about private power and not the state either. Dislike of Palantir's nat sec ties is a big theme for a lot of these people, and many of them don't like the nat sec-y bits of the state very much either. Also, a relatively prominent part of the left-wing critique of DOGE is the idea that it's the beginning of an attempt by Elon to seize personal effective control of large parts of the US federal bureaucracy, by seizing the boring bits of the bureaucracy that actually move money around. In my view, people are correct to be skeptical that Musk will ultimately choose decentralising power over accumulating it for himself.

Now strictly speaking none of this is inconsistent with your claim that the left-wing of AI safety lacks concern about concentration of power, since virtually none of these anti-tech people are safetyists. But I think it still matters for predicting how much the left wing of safety will actually concentrate power, because future co-operation between them and the safetyists against the tech right and the big AI companies is a distinct possibility.

richard_ngo @ 2025-03-27T17:17 (+22)

I worry that your bounties are mostly just you paying people to say things you already believe about those topics

This is a fair complaint and roughly the reason I haven't put out the actual bounties yet—because I'm worried that they're a bit too skewed. I'm planning to think through this more carefully before I do; okay to DM you some questions?

I think it is extremely easy to imagine the left/Democrat wing of AI safety becoming concerned with AI concentrating power, if it hasn't already

It is not true that all people with these sorts of concerns only care about private power and not the state either. Dislike of Palantir's nat sec ties is a big theme for a lot of these people, and many of them don't like the nat sec-y bits of the state very much either.

I definitely agree with you with regard to corporate power (and see dislike of Palantir as an extension of that). But a huge part of the conflict driving the last election was "insiders" versus "outsiders"—to the extent that even historically Republican insiders like the Cheneys backed Harris. And it's hard for insiders to effectively oppose the growth of state power. For instance, the "govt insider" AI governance people I talk to tend to be the ones most strongly on the "AI risk as anarchy" side of the divide, and I take them as indicative of where other insiders will go once they take AI risk seriously.

But I take your point that the future is uncertain and I should be tracking the possibility of change here.

(This is not a defense of the current administration; it is very unclear whether they are actually effectively opposing the growth of state power, seizing it for themselves, or just flailing.)

Matrice Jacobine @ 2025-03-27T11:59 (+17)

Yeah, this feels particularly weird because, coming from that kind of left-libertarian-ish perspective, I basically agree with most of it, but every time he tries to talk about object-level politics it feels like going into the bizarro universe and I would flip the polarity of the signs of all of it. This is an impression I have of @richard_ngo's work in general, him being one of the few safetyists on the political right not to have capitulated to accelerationism-because-of-China (as most recently even Elon did). Still, I'll try to see if I have enough things to say to collect bounties.

richard_ngo @ 2025-03-27T18:07 (+7)

him being one of the few safetyists on the political right to not have capitulated to accelerationism-because-of-China (as most recently even Elon did).

Thanks for noticing this. I have a blog post coming out soon criticizing this exact capitulation.

every time he tries to talk about object-level politics it feels like going into the bizarro universe and I would flip the polarity of the signs of all of it

I am torn between writing more about politics to clarify, and writing less about politics to focus on other stuff. I think I will compromise by trying to write about political dynamics more timelessly (e.g. as I did in this post, though I got a bit more object-level in the follow-up post).

titotal @ 2025-03-27T18:08 (+9)

I think it is extremely easy to imagine the left/Democrat wing of AI safety becoming concerned with AI concentrating power, if it hasn't already

To back this up: I mostly peruse non-rationalist, left-leaning communities, and this is a concern in almost every one of them. There is a huge amount of concern about, and distrust of, AI companies on the left.

Even AI-skeptical people are concerned about this: AI that is not "transformative" can still concentrate power. Most lefties think that AI art is shit, but they are still concerned that it will cost people jobs. This is not a contradiction, as taking jobs does not require AI to be better than you, just cheaper. And if AI does massively improve, this is going to make them more likely to oppose it, not less.

Larks @ 2025-03-28T20:38 (+12)

AI art seems like a case of power becoming decentralized: before this week, few people could make Studio Ghibli art. Now everyone can.

Ozzie Gooen @ 2025-03-31T21:26 (+2)

Edit: Sincere apologies - when I read this, I read through the previous chain of comments quickly, and missed the importance of AI art specifically in titotal's comment above. This makes Larks's comment more reasonable than I assumed. It seems like we do disagree on a bunch of this topic, but much of my comment wasn't correct.

---

This comment makes me uncomfortable, especially with the upvotes. I have a lot of respect for you, and I agree with this specific example. I don't think you were meaning anything bad here. But I'm very suspicious that this specific example is really representative in a meaningful sense.

Often, when one person cites one and only one example of a thing, they are making an implicit argument that this example is decently representative. See the Cooperative Principle (I've been paying more attention to this recently). So I assume readers might take away: "Here's one example; it's probably the main one that matters. People seem to agree with the example, so they probably agree with the implication from it being the only example."

Some specifics that come to my mind:

- In this specific example, it arguably makes it very difficult for Studio Ghibli to keep control over much of their style. I'm sure that people at Studio Ghibli are very upset about this. Instead, OpenAI gets to make this accessible, but this is less an ideological choice than something that's clearly commercially beneficial for OpenAI. If OpenAI wanted to stop this, it could (at least until much better open models come out). More broadly, it can be argued that a lot of forms of power are being shifted from media groups like Studio Ghibli to a few AI companies like OpenAI. You can definitely argue that this is a good or bad thing on net, but I think this is not exactly "power is now more decentralized."

- I think it's easy to watch the trend lines and see where we might expect things to change. Generally, startups are highly subsidized in the short-term. Then they eventually "go bad" (see Enshittification). I'm absolutely sure that if/when OpenAI has serious monopoly power, they will do things that will upset a whole lot of people.

- China has been moderating the ability of its LLMs to say controversial things that would look bad for China. I suspect that the US will do this shortly. I'm not feeling very optimistic about Elon Musk and X.AI, though that is a broader discussion.

On the flip side of this, I could very much see this being frustrating: "I just wanted to leave a quick example. Can't there be some way to contribute useful insights without people complaining about a lack of context?"

I'm honestly not sure what the solution is here. I think online discussions are very hard, especially when people don't know each other very well, for reasons like this.

But in the very short term, I just want to gently push back on the implication of this example, for this audience. I could very much imagine a more extensive analysis suggesting that OpenAI's image work promotes either decentralization or centralization. But I think it's clearly a complex question, at the very least. I personally think that people broadly being able to do AI art now is great, but I still find it a tricky issue.

Larks @ 2025-04-01T04:12 (+8)

I'm not sure why you chose to frame your comment in such an unnecessarily aggressive way so I'm just going to ignore that and focus on the substance.

Yes, the Studio Ghibli example is representative of AI decentralizing power:

- Previously, only a small group of people had an ability (to make good art, or diagnose illnesses, or translate a document, or do contract review, or sell a car, or be a taxi driver, etc.)

- Now, due to a large tech company (e.g. Google, Uber, AirBnB, OpenAI) everyone who used to be able to still can, and also ordinary people can as well. This is a decentralization of power.

- The fact that this was not due to an ideological choice made by AI companies is irrelevant. Centralization and decentralization often occur for non-ideological reasons.

- The fact that things might change in the future is also not relevant. Yes, maybe one day Uber will raise prices to twice the level taxis used to charge, with four times the wait time and ten times the odor. But for now, they have helped decentralize power.

- The group of producers who are now subject to increased competition are unsurprisingly upset. For fairly nakedly self-interested reasons they demand regulation.

- Ideological leftists provide rhetorical ammunition to the rent-seekers, in classic baptists and bootleggers style.

- These demands for regulation affect four different levels of the power hierarchy:

  - The government (most powerful): increases power

  - Tech platform: reduces power

  - Incumbent producers: increases power

  - Ordinary people (least powerful): reduces power

- Because leftists focus on the second and third bullet points, characterizing it as a battle between small artisans and big business, they falsely claim to be pushing for power to be decentralized.

- But actually they are pushing for power to be more centralized: from tech companies towards the leviathan, and from ordinary people towards incumbent producers.

Ozzie Gooen @ 2025-04-01T05:40 (+2)

Thanks for providing more detail into your views.

Really sorry to hear that my comment above came off as aggressive. It was very much not meant like that. One mistake was that I read the comments above too quickly; that was my bad.

In terms of the specifics, I find your longer take interesting, though as I'm sure you expect, I disagree with a lot of it. There seem to be a lot of important background assumptions on this topic that we each hold.

I agree that there are a bunch of people on the left who are pushing for many bad regulations and ideas on this. But I think that at the same time, some of them raise good points (e.g. paranoia about power consolidation).

I feel like it's fair to say that power is complex. Things like ChatGPT's AI art will centralize power in some ways and decentralize it in others. On one hand, it's very much true that many people can create neat artwork that they couldn't before. But on the other, a bunch of key decisions and influence are being put into the hands of a few corporate actors, particularly ones with histories of being shady.

I think that some forms of IP protection make sense. I think this conversation gets much messier when it comes to LLMs, for which there just haven't been good laws yet on how to adjust for them. I'd hope that future artists who come up with innovative techniques could get significant compensation for their contributions. I'd hope that writers and innovators could similarly get certain kinds of credit and rewards for their work.

Matrice Jacobine @ 2025-03-27T20:09 (+8)

This isn't really the best example to use, considering AI image generation is very much the one area where all the most popular models are open-weights and not controlled by big tech companies. So any attempt at regulating AI image generation would necessarily mean concentrating power and antagonizing the free and open-source software community (something which I agree with OP is very ill-advised), and insofar as AI skeptics are incapable of realizing that, they aren't reliable.

Sharmake @ 2025-03-28T15:20 (+1)

I basically agree with this, with one caveat: the EA and LW communities might eventually need to fight or block open-source efforts due to issues like bioweapons. It's very plausible that the open-source community will refuse to stop open-sourcing models even if there is clear evidence that they can immensely help with or automate biorisk. So while I think the fight was picked too early, I think the fighty/uncooperative parts of making AI safe might eventually matter more than is recognized today.

Matrice Jacobine @ 2025-03-28T17:35 (+6)

If you mean Meta and Mistral I agree. I trust EleutherAI and probably DeepSeek to not release such models though, and they're more centrally who I meant.

AGB 🔸 @ 2025-03-30T11:51 (+112)

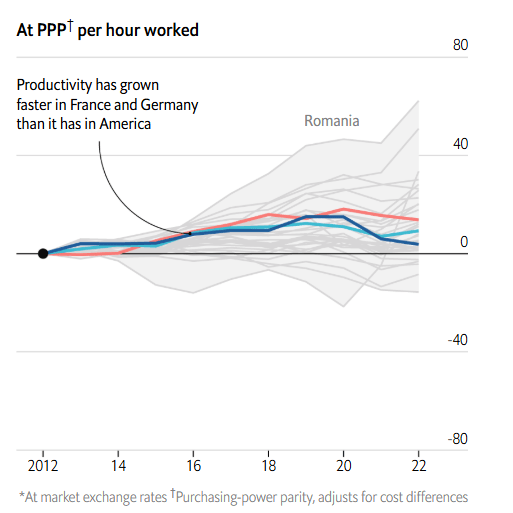

Just to respond to a narrow point because I think this is worth correcting as it arises: Most of the US/EU GDP growth gap you highlight is just population growth. In 2000 to 2022 the US population grew ~20%, vs. ~5% for the EU. That almost exactly explains the 55% vs. 35% growth gap in that time period on your graph; 1.55 / 1.2 * 1.05 = 1.36.
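The population adjustment in the paragraph above can be checked by dividing population growth out of GDP growth. This is a minimal sketch that uses only the approximate 2000 to 2022 figures quoted in this comment (US GDP +55%, EU GDP +35%, US population +20%, EU population +5%); it computes per-capita growth factors only and does not model working hours, so it says nothing directly about output per hour.

```python
# Approximate cumulative growth factors for 2000-2022, as quoted in the comment.
us_gdp_growth = 1.55
eu_gdp_growth = 1.35
us_pop_growth = 1.20
eu_pop_growth = 1.05

# Per-capita growth factor = total GDP growth / population growth.
us_per_capita = us_gdp_growth / us_pop_growth
eu_per_capita = eu_gdp_growth / eu_pop_growth

# EU GDP growth we would expect if EU output per person grew at the US rate.
implied_eu = us_per_capita * eu_pop_growth

print(f"US per-capita growth factor: {us_per_capita:.3f}")
print(f"EU per-capita growth factor: {eu_per_capita:.3f}")
print(f"EU GDP growth implied by the US per-capita rate: {implied_eu:.3f}")
print(f"Observed EU GDP growth: {eu_gdp_growth:.3f}")
```

The implied EU figure (about 1.36) sits within about one percentage point of the observed 1.35, which is the comment's point: almost all of the headline gap is accounted for by the difference in population growth.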

This shouldn't be surprising, because productivity in the 'big 3' of US / France / Germany tracks very closely and has done for quite some time. The source below shows a slight increase in the gap, but of <5% over 20 years. If you look further down my post, The Economist reaches the opposite conclusion, but again by very thin margins. Mostly I think the right conclusion is that the productivity gap has barely changed relative to demographic factors.

I'm not really sure where the meme that there's some big / growing productivity difference due to regulation comes from, but I've never seen supporting data. To the extent culture or regulation is affecting that growth gap, it's almost entirely going to be from things that affect total working hours, e.g. restrictions on migration, paid leave, and lower birth rates[1], not from things like how easy it is to found a startup.

But in aggregate, western Europeans get just as much out of their labour as Americans do. Narrowing the gap in total GDP would require additional working hours, either via immigration or by raising the amount of time citizens spend on the job.

[1] Fertility rates are actually pretty similar now, but the US had much higher fertility than Germany, especially around 1980-2010, converging only more recently, so it'll take a while for that to impact the relative sizes of the working populations.

NickLaing @ 2025-04-03T16:01 (+7)

Most "changed my mind" votes in the history of EA comments? This blew my mind a bit. I feel like I've read so much about American productivity outpacing Europe's; I think this deserves a full-length article.

jackva @ 2025-03-27T12:33 (+25)

I find it pretty difficult to see how to get broad engagement on this when being so obviously polemical / unbalanced in the examples.

As someone who is publicly quite critical of mainstream environmentalism, I still find the description here so extreme that it is hard to take seriously as more than a deeply partisan talking point.

The "Environmental Protection Act" doesn't exist, do you mean the "National Environmental Policy Act" (NEPA)?

Nor is it true that environmentalists are single-handedly responsible for the decline of nuclear power, and modern environmentalism has clearly done a huge amount of good by reducing water and air pollution.

I think your basic point -- that environmentalism had a lot more negative effects than commonly realized and that we should expect similar degrees of unintended negative effects for other issues -- is probably true (I certainly believe it).

But this point can be made with nuance and attention to detail that makes it something that people with different ideological priors can read and engage with constructively. I think the current framing comes across as "owning the libs" or "owning the enviros" in a way that makes it very difficult for those arguments to get uptake anywhere that is not quite right-coded.

richard_ngo @ 2025-03-27T17:06 (+18)

Thanks for the feedback!

FWIW a bunch of the polemical elements were deliberate. My sense is something like: "All of these points are kinda well-known, but somehow people don't... join the dots together? Like, they think of each of them as unfortunate accidents, when they actually demonstrate that the movement itself is deeply broken."

There's a kind of viewpoint flip from being like "yeah I keep hearing about individual cases that sure seem bad but probably they'll do better next time" to "oh man, this is systemic". And I don't really know how to induce the viewpoint shift except by being kinda intense about it.

Upon reflection, I actually take this exchange to be an example of what I'm trying to address. Like, I gave a talk that was according to you "so extreme that it is hard to take seriously" and your three criticisms were:

- An (admittedly embarrassing) terminological slip on NEPA.

- A strawman of my point (I never said anyone was "single-handedly responsible", that's a much higher bar than "caused"—though I do in hindsight think that just saying "caused" without any qualifiers was sloppy of me).

- A critique of an omission (on water/air pollution).

I imagine you have better criticisms to make, but ultimately (as you mention) we do agree on the core point, and so in some sense the message I'm getting is "yeah, listen, environmentalism has messed up a bunch of stuff really badly, but you're not allowed to be mad about it".

And I basically just disagree with that. I do think being mad about it (or maybe "outraged" is a better term) will have some negative effects on my personal epistemics (which I'm trying carefully to manage). But given the scale of the harms caused, this level of criticism seems like an acceptable and proportional discursive move. (Though note that I'd have done things differently if I felt like criticism that severe was already common within the political bubble of my audience—I think outrage is much worse when it bandwagons.)

EDIT: what do you mean by "how to get broad engagement on this"? Like, you don't see how this could be interesting to a wider audience? You don't know how to engage with it yourself? Something else?

jackva @ 2025-03-27T18:51 (+6)

I think we are misunderstanding each other a bit.

I am in no way trying to imply that you shouldn't be mad about environmentalism's failings; in fact, I am mad about them on a daily basis. I think that if being mad about environmentalism's failings is the main point, then what Ezra Klein and Derek Thompson are currently doing with Abundance is a good example of communicating many of your criticisms in a way optimized to land with those who need to hear it.

My point was merely that by framing the example in such extreme terms it will lose a lot of people despite being only very tangentially related to the main points you are trying to make. Maybe that's okay, but it didn't seem like your goal overall to make a point about environmentalism, so losing people on an example that is stated in such an extreme fashion did not seem worth it to me.

Gideon Futerman @ 2025-03-27T20:54 (+10)

In fairness to Richard, I think it comes across a lot more strongly in text than, in my view, it did when listening on YouTube.

richard_ngo @ 2025-03-27T19:32 (+4)

Ah, gotcha. Yepp, that's a fair point, and worth me being more careful about in the future.

I do think we differ a bit on how disagreeable we think advocacy should be, though. For example, I recently retweeted this criticism of Abundance, which is basically saying that they overly optimized for it to land with those who hear it.

And in general I think it's worth losing a bunch of listeners in order to convey things more deeply to the ones who remain (because if my own models of movement failure have been informed by environmentalism etc, it's hard to talk around them).

But in this particular case, yeah, probably a bit of an own goal to include the environmentalism stuff so strongly in an AI talk.

Ozzie Gooen @ 2025-03-31T21:44 (+6)

My quick take:

I think that at a high level you make some good points. I also think it's probably a good thing for some people who care about AI safety to appear to the current right as ideologically aligned with them.

At the same time, a lot of your framing matches incredibly well with what I see as current right-wing talking points.

"And in general I think it's worth losing a bunch of listeners in order to convey things more deeply to the ones who remain"

-> This comes across as absurd to me. I'm all for some people holding uncomfortable or difficult positions. But when those positions sound exactly like the kind of thing that would gain favor with a certain party, I have a very tough time believing that the author is simultaneously optimizing for "conveying things deeply". Personally, I find a lot of the framing irrelevant, distracting, and problematic.

As an example, if I were talking to a right-wing audience, I wouldn't focus on examples of racism in the South if equally good examples in other domains would do. I'd expect that such examples would get in the way of good discussion on the areas where the audience and I would more easily agree.

Honestly, I have had a decent hypothesis that you are consciously doing all of this just in order to gain favor with some people on the right. I could see people making a lot of arguments for this. But that hypothesis makes more sense for the Twitter stuff than here. Either way, it does make it difficult for me to know how to engage. On one hand, if you do honestly believe this stuff, I am very uncomfortable with it, and I highly disagree with a lot of MAGA thinking, including some of the frames you reference (which seem to fit that vibe). On the other hand, if you don't, you're actively lying about what you believe, on a critical topic, in an important set of public debates.

Anyway, this situation does feel like a pity. I think a lot of your work is quite good, and in theory, what reads to me like the MAGA-aligned parts don't need to get in the way. But I realize we live in an environment where that's challenging.

(Also, if it's not obvious, I do like a lot of right-wing thinkers. I think that the George Mason libertarians are quite great, for example. But I personally have had a lot of trouble with the MAGA thinkers as of late. My overall problem is much less with conservative thinking than it is MAGA thinking.)

richard_ngo @ 2025-03-31T22:12 (+3)

a lot of your framing matches incredibly well with what I see as current right-wing talking points

Occam's razor says that this is because I'm right-wing (in the MAGA sense not just the libertarian sense).

It seems like you're downweighting this hypothesis primarily because you personally have so much trouble with MAGA thinkers, to the point where you struggle to understand why I'd sincerely hold this position. Would you say that's a fair summary? If so hopefully some forthcoming writings of mine will help bridge this gap.

It seems like the other reason you're downweighting that hypothesis is because my framing seems unnecessarily provocative. But consider that I'm not actually optimizing for the average extent to which my audience changes their mind. I'm optimizing for something closer to the peak extent to which audience members change their mind (because I generally think of intellectual productivity as being heavy-tailed). When you're optimizing for that you may well do things like give a talk to a right-wing audience about racism in the south, because for each person there's a small chance that this example changes their worldview a lot.

I'm open to the idea that this is an ineffective or counterproductive strategy, which is why I concede above that this one probably went a bit too far. But I don't think it's absurd by any means.

Insofar as I'm doing something I don't reflectively endorse, I think it's probably just being too contrarian because I enjoy being contrarian. But I am trying to decrease the extent to which I enjoy being contrarian in proportion to how much I decrease my fear of social judgment (because if you only have the latter then you end up too conformist) and that's a somewhat slow process.

Ozzie Gooen @ 2025-03-31T23:20 (+3)

It seems like you're downweighting this hypothesis primarily because you personally have so much trouble with MAGA thinkers, to the point where you struggle to understand why I'd sincerely hold this position. Would you say that's a fair summary? If so hopefully some forthcoming writings of mine will help bridge this gap.

If you're referring to the part where I said I wasn't sure if you were faking it - I'd agree. From my standpoint, it seems like you've shifted to hold beliefs that both seem highly suspicious and highly convenient - this starts to raise the hypothesis that you're doing it, at least partially, strategically.

(Relatedly, I think that a lot of similar posturing is happening on both sides of the political aisle. But I generally expect that the politicians and power figures are primarily doing this for strategic reasons, while the news consumers are much more likely to genuinely believe it. I'd suspect that others here would think similarly of me if we had a hard-left administration and I suddenly changed my tune to be very in line with it.)

I'm optimizing for something closer to the peak extent to which audience members change their mind (because I generally think of intellectual productivity as being heavy-tailed).

Again, this seems silly to me. For one thing, while I don't always trust people's publicly stated political viewpoints, I trust their stated reasons for doing these sorts of things even less. I could imagine that your statement is what it honestly feels like to you, but this just raises a bunch of alarm bells for me. Basically, if I imagine someone coming up with a convincing reason to be highly and unnecessarily (from what I can tell) provocative, I'd expect them to give some pretty wacky reasons for it. I'd guess that the answer is often simpler, like, "I find that trolling just brings more attention, and this is useful for me," or "I like bringing in provocative beliefs that I have, wherever I can, even if it hurts an essay about a very different topic. I do this because I care a great deal about spreading these specific beliefs. One convenient thing here is that I get to sell readers on an essay about X, but really, I'll use this as an opportunity to talk about Y instead."

Here, I just don't see how it helps. Maybe it attracts MAGA readers. But for the key points that aren't MAGA-aligned, I'd expect that this would just get them less genuine attention, not more. To me it sounds like the question is, "Does a MAGA veneer help make intellectual work more appealing to smart people?" And that sounds pretty out-there to me.

When you're optimizing for that you may well do things like give a talk to a right-wing audience about racism in the south, because for each person there's a small chance that this example changes their worldview a lot.

To be clear, my example wasn't "I'm trying to talk to people in the South about racism." It's more like, "I'm trying to talk to people in the South about animal welfare, and in doing so, I bring up examples of Southern people being racist."

One could say, "But then, it's a good thing that you bring up points about racism to those people. Because it's actually more important that you teach those people about racism than it is animal welfare."

But that would match my second point above; "I like bringing in provocative beliefs that I have". This would sound like you're trying to sneakily talk about racism, pretending to talk about animal welfare for some reason.

The most obvious thing is that if you care about animal welfare and give a presentation in the Deep South, you can avoid examples that villainize people in the South.

Insofar as I'm doing something I don't reflectively endorse, I think it's probably just being too contrarian because I enjoy being contrarian. But I am trying to decrease the extent to which I enjoy being contrarian in proportion to how much I decrease my fear of social judgment (because if you only have the latter then you end up too conformist) and that's a somewhat slow process.

I liked this part of your statement and can sympathize. I think that us having strong contrarians around is very important, but also think that being a contrarian comes with a bunch of potential dangers. Doing it well seems incredibly difficult. This isn't just an issue of "how to still contribute value to a community." It's also an issue of "not going personally insane, by chasing some feeling of uniqueness." From what I've seen, disagreeableness is a very high-variance strategy, and if you're not careful, it could go dramatically wrong.

Stepping back a bit - the main things that worry me here:

1. I think that disagreeable people often engage in non-cooperative patterns, like epistemic sleight-of-hand and trolling people. I'm concerned that some of your work here matches some of these patterns.

2. I'm nervous that you and/or others might slide into clearly-incorrect and dangerous MAGA worldviews. Typically, the way people go crazy into any ideology is that they begin by testing the waters publicly with various statements. Very often, this ends with them getting really locked into the ideology. From there, it seems incredibly difficult to recover; for example, my guess is that Elon Musk has pissed off many non-MAGA folks, and at this point has very little way to go back without losing face. Your writing with MAGA ideas both suggests to me that you might be sliding this way, and worries me that you'll be encouraging more people to go this route (which I personally have a lot of trouble with).

I think you've done some good work and hope you can continue to do so going forward. At the same time, I'm going to feel anxious about such work whenever I suspect that (1) and (2) might be happening.

richard_ngo @ 2025-04-01T00:24 (+3)

To be clear, my example wasn't "I'm trying to talk to people in the south about racism." It's more like, "I'm trying to talk to people in the south about animal welfare, and in doing so, I bring up examples of Southern people being racist."

Yeah I got that. Let me flesh out an analogy a little more:

Suppose you want to pitch people in the south about animal welfare. And you have a hypothesis for why people in the south don't care much about animal welfare, which is that they tend to have smaller circles of moral concern than people in the north. Here are two types of example you could give:

- You could give an example which fits with their existing worldview—like "having small circles of moral concern is what the north is doing when they're dismissive of the south". And then they'll nod along and think to themselves "yeah, fuck the north" and slot what you're saying into their minds as another piece of ammunition.

- Or you could give an example that actively clashes with their worldview: "hey, I think you guys are making the same kind of mistake that a bunch of people in the south have historically made by being racist". And then most people will bounce off, but a couple will be like "oh shit, that's what it looks like to have a surprisingly small circle of moral concern and not realize it".

My claims:

- Insofar as the people in the latter category have that realization, it will be to a significant extent because you used an example that was controversial to them, rather than one which already made sense to them.

- People in AI safety are plenty good at saying phrases like "some AI safety interventions are net negative" and "unilateralist's curse" and so on. But from my perspective there's a missing step of... trying to deeply understand the forces that make movements net negative by their own values? Trying to synthesize actual lessons from a bunch of past fuck-ups?

I personally spent a long time being like "yeah I guess AI safety might have messed up big-time by leading to the founding of the AGI labs" but then not really doing anything differently. I only snapped out of complacency when I got to observe first-hand a bunch of the drama at OpenAI (which inspired this post). And so I have a hypothesis that it's really valuable to have some experience where you're like "oh shit, that's what it looks like for something that seems really well-intentioned that everyone in my bubble is positive-ish about to make the world much worse". That's what I was trying to induce with my environmentalism slide (as best I can reconstruct, though note that the process by which I actually wrote it was much more intuitive and haphazard than the argument I'm making here).

I'm nervous that you and/or others might slide into clearly-incorrect and dangerous MAGA worldviews.

Yeah, that is a reasonable fear to have (which is part of why I'm engaging extensively here about meta-level considerations, so you can see that I'm not just running on reflexive tribalism).

Having said that, there's something here reminiscent of "I Can Tolerate Anything Except the Outgroup". Intellectual tolerance isn't for ideas you think are plausible; that's just normal discussion. It's for ideas you think are clearly incorrect, e.g. your epistemic outgroup. Of course you want to draw some lines for discussion of aliens or magic or whatever, but in this case it's a memeplex endorsed (to some extent) by approximately half of America, so clearly within the bounds of things that are worth discussing. (You added "dangerous" too, but this is basically a general-purpose objection to any ideas which violate the existing consensus, so I don't think that's a good criterion for judging which speech to discourage.)

In other words, the optimal number of people raising and defending MAGA ideas in EA and AI safety is clearly not zero. Now, I do think that in an ideal world I'd be doing this more carefully. E.g. I flagged in the transcript a factual claim that I later realized was mistaken, and there's been various pushback on the graphs I've used, and the "caused climate change" thing was an overstatement, and so on. Being more cautious would help with your concerns about "epistemic sleight-of-hand". But for better or worse I am temperamentally a big-ideas thinker, and when I feel external pressure to make my work more careful that often kills my motivation to do it (which is true in AI safety too: I try to focus much more on first-principles reasoning than detailed analysis). In general I think people should discount my views somewhat because of this (and I give several related warnings in my talk) but I do think that's pretty different from the hypothesis you mention that I'm being deliberately deceptive.

Ozzie Gooen @ 2025-04-01T02:52 (+5)

Thanks for continuing to engage! I really wasn't expecting this to go so long. I appreciate that you are engaging on the meta-level, and also that you are keeping more controversial claims separate for now.

On the thought experiment of the people in the South, it sounds like we might well have some crux[1] here. I suspect it would be strained to discuss it much further. We'd need to get more and more detailed on the thought experiment, and my guess is that this would make up a much longer debate.

Some quick things:

"in this case it's a memeplex endorsed (to some extent) by approximately half of America"

This is the sort of sentence I find frustrating. It feels very motte-and-bailey: on one hand, I expect you to make a narrow point popular on some parts of MAGA Twitter/X; on the other, I expect you to say, "Well, actually, Trump got 51% of the popular vote, so the important stuff is actually a majority opinion."

I'm pretty sure that very few specific points I would have a lot of trouble with are actually substantially endorsed by half of America. Sure, there are ways to phrase things very carefully such that versions of them can technically be seen as being endorsed, but I get suspicious quickly.

The weasel phrases here ("to some extent" and "approximately"), and even the vague terms "memeplex" and "endorsed", also strike me as very imprecise. Thinking about it, I'm pretty sure that, with a bit of clever reasoning, that sentence could be made to hold for almost any claim I could imagine someone making on either side.

In other words, the optimal number of people raising and defending MAGA ideas in EA and AI safety is clearly not zero.

To be clear, I'm fine with someone straightforwardly writing good arguments in favor of much of MAGA[2]. One of my main issues with this piece is that it doesn't claim to be that; it feels like you're trying to sneakily (intentionally or unintentionally) make this about MAGA.

I'm not sure what to make of the wording "the optimal number of people raising and defending MAGA ideas in EA and AI safety is clearly not zero." To me, the more potentially inflammatory content is, the more I'd want to make sure it's written very carefully.

I could imagine a radical looting-promoting Marxist coming along, writing a trashy post in favor of their agenda here, then claiming "the optimal number of people raising and defending Marxism is not zero."

This phrase seems to create a frame for discussion. Like, "There's very little discussion about the topic/ideology X happening on the EA Forum now. Let's round that to zero. Clearly, it seems intellectually close-minded to favor literally zero discussion on a topic. I'm going to do discussion on that topic. So if you're in favor of intellectual activity, you must be in favor of what I'll do."

But for better or worse I am temperamentally a big-ideas thinker, and when I feel external pressure to make my work more careful that often kills my motivation to do it

I can appreciate that a lot of people would be more motivated to do public writing if they didn't need to be as careful when doing so. But of course, if someone makes claims that are misleading or wrong, and that does damage, the damage still happens. In this case I think you hurt your own cause by tying these things together, and it's also easy for me to imagine a world in which no one helped correct your work, and some people came away believing your points were simply correct.

I assume one solution looks like making it clear you are uncertain/humble, using disclaimers, not saying things too strongly, etc. I appreciate that you did some of this in the comments/responses (and some in the talk), but would prefer it if the original post, and related X/Twitter posts, were more in line with that.

I get the impression that a lot of people with strong ideologies across the spectrum make a bunch of points with very weak evidence but tons of confidence. I really don't like this pattern, and I'd assume you generally wouldn't either. The confidence-to-evidence disparity is the main issue, not having mediocre evidence alone. (If you're adequately unsure of the evidence, even putting it in a talk like this demonstrates a level of confidence. If you're really unsure of it, I'd expect it in footnotes or maybe other short-form posts.)

I do think that's pretty different from the hypothesis you mention that I'm being deliberately deceptive.

That could be, and it's good to know. I get the impression that many on the MAGA side (and on the far Left) frequently lie to get their way on many issues, so it's hard to tell.

At the same time, I'd flag that it can still be very easy to accidentally get in the habit of using dark patterns in communication.

Anyhow - thanks again for being willing to go through this publicly. One reason I find this conversation interesting is because you're willing to do it publicly and introspectively - I think most people who I hear making strong ideological claims don't seem to be. It's an uncomfortable topic to talk about. But I hope this sort of discussion could be useful to others when dealing with other intellectuals in similar situations.

[1] By crux I just mean "key point where we have a strong disagreement".

[2] The closer people get to explicitly defending Fascism, or say White Nationalism here, the more nervous I'll be. I do think that many ideas within MAGA could be steelmanned safely, but it gets messy.

JWS 🔸 @ 2025-03-29T12:20 (+13)

I responded well to Richard's call for More Co-operative AI Safety Strategies, and I like the call toward more sociopolitical thinking, since the Alignment problem really is a sociological one at heart (always has been). Things which help the community think along these lines are good imo, and I hope to share some of my own writing on this topic in the future.

Whether or not I agree with Richard's personal politics is kinda beside the point to this as a message. Richard's allowed to have his own views on things and other people are allowed to criticise this (I think David Mathers' comment is directionally where I lean too). I will say that not appreciating arguments from open-source advocates, who are very concerned about the concentration of power from powerful AI, has led to a completely unnecessary polarisation against the AI Safety community from it. I think, while some tensions do exist, it wasn't inevitable that it'd get as bad as it is now, and in the end it was a particularly self-defeating one. Again, by doing the kind of thinking Richard is advocating for (you don't have to co-sign with his solutions, he's even calling for criticism in the post!), we can hopefully avoid these failures in the future.

On the bounties, the one that really interests me is the OpenAI board one. I feel like I've been living in a bizarro-world with EAs/AI Safety People ever since it happened because it seemed such a colossal failure, either of legitimacy or strategy (most likely both), and it's a key example of the "un-cooperative strategy" that Richard is concerned about imo. The combination of extreme action and ~0 justification either externally or internally remains completely bemusing to me and was a big wake-up call for my own perception of 'AI Safety' as a brand. I don't think people should underestimate the second-impact effect this had on both 'AI Safety' and EA, coming about a year after FTX.

richard_ngo @ 2025-03-29T16:00 (+4)

Thanks for the thoughtful comment! Yeah the OpenAI board thing was the single biggest thing that shook me out of complacency and made me start doing sociopolitical thinking. (Elon's takeover of twitter was probably the second—it's crazy that you can get that much power for $44 billion.)

I do think I have a pretty clear story now of what happened there, and maybe will write about it explicitly going forward. But for now I've written about it implicitly here (and of course in the cooperative AI safety strategies post).

Cullen 🔸 @ 2025-04-01T11:13 (+6)

(Elon's takeover of twitter was probably the second—it's crazy that you can get that much power for $44 billion.)

I think this is pretty significantly understating the true cost. Or put differently, I don't think it's good to model this as an easily replicable type of transaction.

I don't think that if, say, some more boring multibillionaire did the same thing, they could achieve anywhere close to the same effect. It seems like the Twitter deal mainly worked for him, as a political figure, because it leveraged existing idiosyncratic strengths that he had, like his existing reputation and social media following. But to get to the point where he had those traits, he needed to be crazy successful in other ways. So the true cost is not $44 billion, but more like: be the world's richest person, who is also charismatic in a bunch of different ways, have an extremely dedicated online base of support from consumers and investors, have a reputation for being a great tech visionary, and then spend $44B.

richard_ngo @ 2025-04-01T18:14 (+6)

My story is: Elon changing the twitter censorship policies was a big driver of a chunk of Silicon Valley getting behind Trump—separate from Elon himself promoting Trump, and separate from Elon becoming a part of the Trump team.

And I think anyone who bought Twitter could have done that.

If anything being Elon probably made it harder, because he then had to face advertiser boycotts.

Agree/disagree?

Cullen 🔸 @ 2025-04-01T18:36 (+2)

Ah, interesting, not exactly the case that I thought you were making.

I more or less agree with the claim that "Elon changing the twitter censorship policies was a big driver of a chunk of Silicon Valley getting behind Trump," but probably assign it lower explanatory power than you do (especially compared to nearby explanatory factors like Elon crushing internal resistance and employee power at Twitter). But I disagree with the claim that anyone who bought Twitter could have done that, because I think that Elon's preexisting sources of power and influence significantly improved his ability to drive and shape the emergence of the Tech Right.

I also don't think that the Tech Right would have as much power in the Trump admin if not for Elon promoting Trump and joining the administration. So a different Twitter CEO who also created the Tech Right would have created a much less powerful force.

Sharmake @ 2025-03-29T23:21 (+3)

Some thoughts on this comment:

On this part:

I responded well to Richard's call for More Co-operative AI Safety Strategies, and I like the call toward more sociopolitical thinking, since the Alignment problem really is a sociological one at heart (always has been). Things which help the community think along these lines are good imo, and I hope to share some of my own writing on this topic in the future.

I don't think it was always a large sociological problem, but yeah, I've updated more towards the sociological aspect of alignment being important (especially as the technical problem has turned out easier than circa 2008-2016 views expected).

Whether or not I agree with Richard's personal politics is kinda beside the point to this as a message. Richard's allowed to have his own views on things and other people are allowed to criticise this (I think David Mathers' comment is directionally where I lean too). I will say that not appreciating arguments from open-source advocates, who are very concerned about the concentration of power from powerful AI, has led to a completely unnecessary polarisation against the AI Safety community from it. I think, while some tensions do exist, it wasn't inevitable that it'd get as bad as it is now, and in the end it was a particularly self-defeating one. Again, by doing the kind of thinking Richard is advocating for (you don't have to co-sign with his solutions, he's even calling for criticism in the post!), we can hopefully avoid these failures in the future.

I do genuinely believe that concentration of power is a huge risk factor, and in particular I'm deeply worried about the incentives of a capitalist post-AGI company where a few hold basically all of the rent/money: there would be stronger incentives to expropriate property from people (similar to how humans routinely expropriate property from animals), combined with weak to non-existent forces against such expropriation.

That said, I think the piece arguing that open-source AI is a defense against concentration of power, and more generally a good thing akin to the Enlightenment, unfortunately relies on some quite bad analogies. Giving everyone AI is nothing like giving everyone education or the vote: depending on how powerful the AI is, at the high end it is basically enough to create entire very large economies on its own, and at the lower end it could greatly help ordinary citizens with, or automate, the process of making biological weapons. More importantly, the impacts of education and voting fundamentally require coordination to get large things done, and super-powerful AIs can remove that requirement.

More generally, I think one of the largest cruxes between reasonable open-source people and EAs in general is how much they think AIs can put biological capabilities in the hands of the masses, and how offense-dominant the technology is. Here I defer to biorisk experts, including EAs, who generally think that biorisk is a wildly offense-advantaged domain that will be very dangerous to democratize for at least several years, rather than to open-source people.

On Sam Altman's firing:

On the bounties, the one that really interests me is the OpenAI board one. I feel like I've been living in a bizarro-world with EAs/AI Safety People ever since it happened because it seemed such a colossal failure, either of legitimacy or strategy (most likely both), and it's a key example of the "un-cooperative strategy" that Richard is concerned about imo. The combination of extreme action and ~0 justification either externally or internally remains completely bemusing to me and was a big wake-up call for my own perception of 'AI Safety' as a brand. I don't think people should underestimate the second-impact effect this had on both 'AI Safety' and EA, coming about a year after FTX.

I'll be blunt: I think it was mildly good, or at worst neutral, to use the uncooperative strategy to fire Sam Altman, because Sam Altman was going to gain all control by default and probably have better PR if the firing didn't happen. More importantly, he was aiming to disempower the safety people basically totally, which leads to at least a mild increase in existential risk, and they realized they would have been manipulated out of it if they waited, so they had to go for broke.

The main EA mistake was in acting too early, before things got notably weird.

That doesn't mean society will react or that it's likely to react, but I basically agree with Veaulans here:

Cullen 🔸 @ 2025-04-01T11:34 (+2)

I will say that not appreciating arguments from open-source advocates, who are very concerned about the concentration of power from powerful AI, has lead to a completely unnecessary polarisation against the AI Safety community from it.

I think if you read the FAIR paper to which Jeremy is responding (of which I am a lead author), it's very hard to defend the proposition that we did not acknowledge and appreciate his arguments. There is an acknowledgment of each of the major points he raises on page 31 of FAIR. If you then compare the tone of the FAIR paper to his tone in that article, I think he was also significantly escalatory, comparing us to an "elite panic" and "counter-enlightenment" forces.

To be clear, notwithstanding these criticisms, I think both Jeremy's article and the line of open-source discourse descending from it have been overall good in getting people to think about tradeoffs here more clearly. I frequently cite to it for that reason. But I think that a failure to appreciate these arguments is not the cause of the animosity in at least his individual case: I think his moral outrage at licensing proposals for AI development is. And that's perfectly fine as far as I'm concerned. People being mad at you is the price of trying to influence policy.

I think a large number of commentators in this space seem to jump from "some person is mad at us" to "we have done something wrong" far too easily. It is of course very useful to notice when people are mad at you and query whether you should have done anything differently, and there are cases where this has been true. But in this case, if you believe, as I did and still do, that there is a good case for some forms of AI licensing notwithstanding concerns about centralization of power, then you will just in fact have pro-OS people mad at you, no matter how nicely your white papers are written.

JWS 🔸 @ 2025-04-02T12:12 (+4)

Hey Cullen, thanks for responding! So I think there are object-level and meta-level thoughts here, and I was just using Jeremy as a stand-in for the polarisation of Open Source vs AI Safety more generally.

Object Level - I don't want to spend too long here as it's not the direct focus of Richard's OP. Some points:

- On 'elite panic' and 'counter-enlightenment', he's not directly comparing FAIR to it I think. He's saying that previous attempts to avoid democratisation of power in the Enlightenment tradition have had these flaws. I do agree that it is escalatory though.

- I think, from Jeremy's PoV, that centralization of power is the actual ballgame and what Frontier AI Regulation should be about. So one mention on page 31 probably isn't good enough for him. That's a fine reaction to me, just as it's fine for you and Marcus to disagree on the relative costs/benefits and write the FAIR paper the way you did.

- On the actual points though, I went back and skim-listened to the webinar on the paper in July 2023, which Jeremy (and you!) participated in, and man, I am so much more receptive and sympathetic to his position now than I was back then, and I don't really find Marcus and you to be that convincing in rebuttal, but as I say I only did a quick skim-listen so I hold that opinion very lightly.

Meta Level -

- On the 'escalation' in the blog post, maybe his mind has hardened over the year? There's probably a difference between ~July23-Jeremy and ~Nov23-Jeremy, who may have viewed the AI Safety side doubling down on these kinds of legislative proposals as an escalation. While it's before SB 1047, I see Wiener had introduced an earlier intent bill in September 2023.

- I agree that "people are mad at us, we're doing something wrong" isn't a guaranteed logical proof, but as you say it's a good prompt to think "should I have done something different?", and (not saying you're doing this) I think the absolute disaster zone that was the SB 1047 debate and discourse can't be fully attributed to e/acc or a16z or something. I think the backlash I've seen to the AI Safety/x-risk/EA memeplex over the last few years should prompt anyone in these communities, especially those trying to influence policy of the world's most powerful state, to really consider Cromwell's rule.

- On "you will just in fact have pro-OS people mad at you, no matter how nicely your white papers are written": I think there's some sense in which it's true, but there's a lot of contingency about just how mad people get and whether other allies could have been made along the way. I think one of the reasons things got so bad is because previous work on AI Safety has underestimated the socio-political sides of Alignment and Regulation.[1]

[1] Again, not saying that this is referring to you in particular.

philbert schmittendorf @ 2025-03-27T16:33 (+10)

I broadly agree with the thesis that AI safety needs to incorporate sociopolitical thinking rather than shying away from it. No matter what we do on the technical side, the governance side will always run up against moral and political questions that have existed for ages. That said, I have some specific points about this post to talk about, most of which are misgivings:

- The claim that environmentalism caused climate change is... reaching. To say that first-wave environmentalism's opposition to nuclear energy slowed our mitigation of climate change is a slightly more defensible claim, but at the time, (1) nuclear waste was still a less solved problem, (2) fossil fuel companies were actively contributing to disinformation campaigns to ensure their continued dominance of the energy market, and (3) even without the opposition to nuclear from the early environmentalist movement, which was far from large enough to effect a systemic change to the entire planet and be held responsible for it, there still likely would not have been enough nuclear plants to overtake fossil fuels in some miraculous, instantaneous energy transition, for the same reasons that doesn't happen now: nuclear energy is capital-intensive, and it takes a long time in human-years to break even.

- Also, I am suspicious of framing "opposition to geoengineering" as bad -- this, to me, is a red flag that someone has not done their homework on uncertainties in the responses of the climate system to large-scale interventions like albedo modification. Geoengineering the planet wrong is absolutely an X-risk.

- The "Left wants control, Right wants decentralization" dichotomy here seems not only narrowly focused on Republican - Democrat dynamics, but also wholly incorrect in terms of what kinds of political ideology actually lead one to support one versus the other. Many people on the left would argue uncompromisingly for decentralization due to concerns about imperfect actors cementing power asymmetries through AI. Much of the regulation that the center-left advocates for is aimed at preventing power accumulation by private entities, which could be viewed as a safeguard against the emergence of some well-resourced, corporate network-state that is more powerful than many nation-states. I think Matrice nailed it above in that we are all looking at the same category-level, abstract concerns like decentralization versus control, closed-source versus open-source, competition versus cooperation, etc. but once we start talking about object-level politics -- Republican versus Democratic policy, bureaucracy and regulation as they actually exist in the U.S. -- it feels, for those of us on the left, like we have just gotten off the bus to crazy town. The simplification of "left == big government, right == small government" is not just misleading; it is entirely the wrong axis for analyzing what left and right are (hence the existence of the still oversimplified, but one degree less 1-dimensional, Political Compass...). It seems to me that it is important for us to all step outside of our echo chambers and determine precisely what our concerns and priorities are so we can determine areas where we align and can act in unison.

- I sense a pretty clear Silicon Valley style right-wing echo-chamber aura from this post in general, and although I will always assume best intentions, I find it worrying that there are more nods to network-states and ideas that tread closely to the neoreactionary ideas of Curtis Yarvin and Nick Land than there are nods to post-capitalist or left-wing visions of the future, such as AI enabling decentralized planned economies or more direct democracies. We need to be careful that the discussions we are having about AI safety as they relate to politics do not end up reflecting, for instance, a lack of diversity of opinion within the Bay Area tech community that is doing the discussing.

Some points I liked / agree with:

- I agree we need to rethink political structures in a much bigger, broader way as it concerns AI. If we bring AGI into the picture, entirely new kinds of economies become possible, so it doesn't make all that much sense to focus on Republican / Democrat dynamics except for pragmatic actions in the near-term. I actually think we should be focusing way more on what the best ways to handle these things in the abstract would be to avoid muddy waters of current events and the political milieu.

- AI as a policy and moral advisor is among my favorite ideas. I would love to see an AI that can provide an evidence-based case for a given policy over another having taken in the entire corpus of information available on it independent of echo chambers. I do worry about who might build such an AI, though, as if it were not open-source and interpretable, it could be rigged to provide a veneer of rigorous justification for any draconian policy a government prefers to pass.

Sharmake @ 2025-03-28T15:14 (+3)

To respond to a local point here:

- Also, I am suspicious of framing "opposition to geoengineering" as bad -- this, to me, is a red flag that someone has not done their homework on uncertainties in the responses of the climate system to large-scale interventions like albedo modification. Geoengineering the planet wrong is absolutely an X-risk.

While I can definitely buy that geoengineering is net-negative, I'm not sure how geoengineering gone wrong could actually result in X-risk, at least so far, though I admit I don't currently understand the issues that well.

It doesn't speak well that he frames opposition to geoengineering as automatically bad (even if I assume the current arguments against geoengineering are quite bad).

Howie_Lempel @ 2025-03-29T21:49 (+6)

Is there anybody else, in addition to Fukuyama, Friedman, and Cummings, who you think is good at doing the kind of thinking you're pointing to here?

Are there alternatives to your two-factor model that seem particularly plausible to you?

richard_ngo @ 2025-03-29T23:07 (+6)

Good questions. I have been pretty impressed with:

- Balaji, whose tweets about the dismantling of the American Empire (e.g. here, here) are the best geopolitical analysis I've seen of what's going wrong with the current Trump administration

- NS Lyons, e.g. here.

- Some of Samo Burja's concepts (e.g. live vs dead players) have proven much more useful than I expected when I heard about them a few years ago.

I think there are probably a bunch of other frameworks that have as much or more explanatory power as my two-factor model (e.g. Henrich's concept of WEIRDness, Scott Alexander's various models of culture war + discourse dynamics, etc). They're less alternatives, though, and more different angles on the same elephant.

Chris Leong @ 2025-03-27T05:28 (+5)

a) The link to your post on defining alignment research is broken

b) "Governing with AI opens up this whole new expanse of different possibilities" - Agreed. This is part of the reason why my current research focus is on wise AI advisors.

c) Regarding malaria vaccines, I suspect it's because the people who focused on high-quality evidence preferred bed nets, and folks who were interested in more speculative interventions were drawn more towards long-termism.

Camille @ 2025-03-29T20:30 (+4)

Thanks for posting this. It makes me think, "Oh, someone is finally mentioning this."

Observation: I think your model rests on hypotheses that I would expect someone from Silicon Valley to suggest, based on observations originating in Silicon Valley. I don't think of Silicon Valley as 'the place' for politics, even less so for epistemically accurate politics. (This is not evidence against your model, of course, but my inner simulator points at this feature as a potential source of confusion.)

We might very well need a better approach than our usual tools for thinking about this. I'm not even sure current EAs are better at this than the few bottom-lined social science teachers I met in the past: being truth-seeking is one thing, knowing the common pitfalls of (non-socially-reflexive) truth-seeking in political thinking is another.

For reasons I won't expand on here, I think people working on hierarchical agency are really worth talking to on this topic; they tend to avoid the sort of issues 'Bayesian' rationalists fall into.

richard_ngo @ 2025-03-29T23:14 (+3)

Thanks for the comment.

I think you probably should think of Silicon Valley as "the place" for politics. A bunch of Silicon Valley people just took over the Republican party, and even the leading Democrats these days are Californians (Kamala, Newsom, Pelosi) or tech-adjacent (Yglesias, Klein).

Also, I am working on basically the same thing Jan describes, though I think coalitional agency is a better name for it. (I even have a post on my opposition to Bayesianism.)

Matrice Jacobine @ 2025-03-30T10:48 (+9)

I think you're interpreting as ascendancy what is mostly just Silicon Valley realigning to the Republican Party (which is more of a return to the norm both historically and for US industrial lobbies in general). None of the Democrats you cite are exactly rising stars right now.

jackva @ 2025-03-30T13:03 (+3)

This seems right.

What is happening right now is that Silicon Valley is becoming extremely polarizing, with the voices in the Democratic coalition that have the most reach campaigning strongly on an anti-Musk platform.

PeterSlattery @ 2025-03-28T20:41 (+4)

Thanks for this. Is there a place where I can see the sources you are using here?

I am particularly interested in the source for this:

"The other graph here is an interesting one. It's the financial returns to IQ over two cohorts. The blue line is the older cohort, it's from 50 years ago or something. It's got some slope. And then the red line is a newer cohort. And that's a much steeper slope. What that means is basically for every extra IQ point you have, in the new cohort you get about twice as much money on average for that IQ point."

richard_ngo @ 2025-03-29T04:38 (+2)

No central place for all the sources but the one you asked about is: https://www.sebjenseb.net/p/how-profitable-is-embryo-selection

gwern @ 2025-03-31T00:44 (+9)

FWIW, I am skeptical of the interpretation you put on that graph. I considered the same issue in my original analysis, but went with Strenze 2007's take that there doesn't seem to be much of a steeper IQ/income slope, and kept returns constant. Seb objects that the datapoints have various problems, and that's why he redoes an analysis with NLS to get his number, which is reasonable for his use; but he specifically disclaims projecting the slope out (which would make ES more profitable than it seems) as you do:

If the estimate were only based on the more recent cohort (NLSY97), the estimated effect between the ages of 25 and 65 would be $74,508, and adding the rough estimates for the additional years (18-24 and 66-80) increases it to $90,208. I think this estimate is too high; this projection relies on the assumption that the increases in the mean and variance of wages will hold for the future, which may not be the case.

And it's not hard to see why the slope difference might be real but irrelevant: people in NLSY79 have different life cycles than those in NLSY97, and you would expect a steeper slope (but the same final lifetime-income/IQ correlation) from simply lengthening years of education - you don't have much income as a college student! The longer you spend in college, the higher the slope has to be when you finally get out and can start your remunerative career. (This is similar to those charts which show that Millennials are tragically impoverished and doing far worse than their Boomer parents... as long as you cut off the chart at the right time and don't extend it to the present.) I'm also not aware of any dramatic surge in the college degree wage-premium, which is hard to reconcile with the idea that IQ points are becoming drastically more valuable.

So quite aside from any Inside View takes about how the current AI trends look like they're going to savage holders of mere IQ, your slope take is on shaky, easily confounded grounds, while the Outside View is that for a century or more, the returns to IQ have been remarkably constant and have not suddenly been increasing by many %.

SummaryBot @ 2025-03-28T16:49 (+1)

Executive summary: As EA and AI safety move into a third wave of large-scale societal influence, they must adopt virtue ethics, sociopolitical thinking, and structural governance approaches to avoid catastrophic missteps and effectively navigate complex, polarized global dynamics.

Key points:

- Three-wave model of EA/AI safety: The speaker describes a historical progression from Wave 1 (orientation and foundational ideas), to Wave 2 (mobilization and early impact), to Wave 3 (real-world scale influence), each requiring different mindsets—consequentialism, deontology, and now, virtue ethics.

- Dangers of scale: Operating at scale introduces risks of causing harm through overreach or poor judgment; environmentalism is used as a cautionary example of well-intentioned movements gone wrong due to inadequate thinking and flawed incentives.

- Need for sociopolitical thinking: Third-wave success demands big-picture, historically grounded, first-principles thinking to understand global trends and power dynamics—not just technical expertise or quantitative reasoning.

- Two-factor world model: The speaker proposes that modern society is shaped by (1) technology increasing returns to talent, and (2) the expansion of bureaucracy. These create opposing but compounding tensions across governance, innovation, and culture.

- AI risk framings are diverging: One faction views AI risk as an anarchic threat requiring central control (aligned with the left/establishment), while another sees it as a risk of concentrated power demanding decentralization (aligned with the right/populists); AI safety may mirror broader political polarization unless the divide is deliberately bridged.

- Call to action: The speaker advocates for governance “with AI,” rigorous sociopolitical analysis, moral framework synthesis, and truth-seeking leadership—seeing EA/AI safety as “first responders” helping humanity navigate an unprecedented future.

This comment was auto-generated by the EA Forum Team. Feel free to point out issues with this summary by replying to the comment, and contact us if you have feedback.

Sharmake @ 2025-03-27T18:36 (+1)

Crossposting this comment from LW, because I think there is some value here:

The main points are: that value alignment will be far more necessary for ordinary people to survive, no matter which institutions are adopted; that the world hasn't yet weighed in much on AI safety and plausibly never will, but we do need to prepare for a future in which AI safety may become mainstream; and that Bayesianism is fine, actually. There are many more points in the full comment.

Elias Schmied @ 2025-03-27T17:53 (+1)

Love this, thanks!