Do short AI timelines make other cause areas useless?

By Hayley Clatterbuck, arvomm @ 2025-07-23T19:10

The cause prioritization landscape in EA is changing. Prominent groups have shut down, others have been founded, and everyone’s trying to figure out how to prepare for AI. This is the third in a series of posts critically examining the state of cause prioritization and strategies for moving forward.

Executive Summary

- An increasingly common argument is that we should prioritize work in AI over work in other cause areas (e.g. farmed animal welfare, reducing nuclear risks) because the impending AI revolution undermines the value of working in those other areas.

- We consider three versions of the argument:

- Aligned superintelligent AI will solve many of the problems that we currently face in other cause areas.

- Misaligned AI will be so disastrous that none of the existing problems will matter because we’ll all be dead or worse.

- AI will be so disruptive that our current theories of change will all be obsolete, so the best thing to do is wait, build resources, and reformulate plans until after the AI revolution.

- We identify some key cruxes of these arguments, and present reasons to be skeptical of them. A more direct case needs to be made for these cruxes before we rely on them in making important cause prioritization decisions.

- Even on short timelines, the AI transition may be a protracted and patchy process, leaving many opportunities to act on longer timelines.

- Work in other cause areas will often make essential contributions to the AI transition going well.

- Projects that require cultural, social, and legal changes for success, and projects where opposing sides will both benefit from AI, will be more resistant to being solved by AI.

- Many of the reasons why AI might undermine projects in other cause areas (e.g. its unpredictable and destabilizing effects) would seem to undermine lots of work on AI as well.

While an impending AI revolution should affect how we approach and prioritize non-AI (and AI) projects, doing this wisely requires case-by-case evaluations, not writing off or privileging entire cause areas.

Introduction

Debates about cause prioritization are evergreen, in part because conversation has coalesced around three main positions (global health and development, or GHD; animal welfare; and x-risk) that are favored by different sets of reasons, and because there is persistent uncertainty about which of these reasons should most compel us. Over the past several years, however, it has become increasingly common to hear that cause prioritization debates have been settled or made moot because AI trumps all other causes.

Here, we’re interested in a particular version of this view. The claim is not just that AI is most important or that we should direct resources away from other areas (though, under this view, we should). It’s that the coming AI revolution undercuts the justification for doing work in other cause areas, rendering work in those areas useless, or nearly so (for now, and perhaps forever).[1] This claim cross-cuts the traditional three-way cause area debate, as even non-AI x-risk projects are impugned.

The AI cause area[2] arguably has several features that could justify its overwhelming importance relative to other areas:

- It’s impending: significant turning points will likely happen soon. Many other potential x-risks have low probabilities of occurring, and the timeline of potential impact is uncertain. Considering only these other x-risks, risk aversion and time discounting (for whatever reason) can tilt things in favor of GHD or animals. World-changing AI, on the other hand, is almost certainly coming, and timelines seem to get shorter every day. Therefore, even the risk-averse and present-focused should care about AI.

- AI’s effects are expected to be massive but highly uncertain. Even their valence is unknown; many people expect AI’s effects to be either very good or very bad, and we don’t know which.

- Its outcomes are highly contingent. AI development will be highly path-dependent, and actions we take imminently may have outsized effects on the future of AI and the world. A small number of actors, including those in the EA space, could significantly alter AI’s trajectory.

- AI will affect everything, including central foci of the GHD, animal welfare, and x-risk fields. AI acts as a universal solvent, subsuming other cause areas.

- AI will be destabilizing. We expect the world post-AI revolution to look very different from the world now. There are many epistemically possible AI futures that are radically different from the present and from one another, and we don’t have any real clue which future will come to pass.

For the most part, we won’t dispute that AI has these features. Our question is: does it follow that it’s (almost) useless to work on anything other than AI? We’ll consider three arguments that it does, and then consider what these arguments have in common. We provide some reasons to be skeptical of each of these arguments, identifying some key cruxes that need more defending before we conclude that AI significantly undermines other cause areas.

We are not disputing that there might be very good reasons to favor AI projects[3]. We are disputing a general heuristic that privileges the AI cause area and writes off all the others. To wisely navigate an AI transition, we need to engage in more cross- and within-cause prioritization.[4] With an impending AI revolution, it may be irresponsible not to factor AI into your plans, but it doesn't follow that it is irresponsible not to work on AI.

Three versions of the argument

Argument 1: Aligned AI will solve it

This might be the version of the argument we’ve heard most often, e.g. “AI will solve factory farming” or “AI will solve other x-risks”. Suppose the AI revolution goes well, and we end up with superintelligent, aligned AI. Would it be foolish to do work now that could be done, presumably better, by AIs? The answer might be different for research than for other kinds of projects, like advocacy or movement building, so we’ll consider these separately.

The most audacious form of the argument says that aligned AI will directly solve most of the issues that currently concern us. We’ll have no use for the Against Malaria Foundation when AI eradicates malaria, for the Humane League when AI eliminates factory farming, etc. A few observations. First, timelines matter here: even if AI “solves things” in five years, five years of gains from these projects is nothing to sneeze at.[5] Second, this argument can be marshalled to show that “AI will solve AI” too. This means that the heuristic comparing AI to non-AI cause areas is too simple, and within- and cross-cause prioritization is still necessary. We should have similar standards across cause areas for how much our uncertainty should undermine the value of pursuing any particular project.

More to the point, though, proponents of the “AI will solve it” line need to do more than gesture at AI’s hypothetical superhuman abilities. They should provide some mechanism by which AI could solve the kinds of problems that haven’t yielded to human intelligence. More specifically, progress often faces bottlenecks that intelligence alone can’t remove. There may be obstacles between invention (coming up with a novel solution), innovation (making that solution practical and reliable), and deployment, such as hurdles in designing hardware, procuring sufficient energy, collecting long-context data, or getting new systems to integrate with old ones. Even if these don’t pose a long-term obstacle to AI solutions, they can slow those solutions down significantly.

Cultural, political, legal, and economic barriers may also slow or stop seemingly effective AI solutions. For example, suppose aligned AI follows human laws, and our laws stand in the way of implementing efficient solutions to existing problems. In that case, the same kinds of advocacy that matter today will matter in an AI future.

Consider the case of factory farming.[6] Even if aligned AI is committed to, or at least neutral about, animal wellbeing, it’s unclear how, or how quickly, it would “solve” factory farming. It’s possible that AI could invent a method for producing cultured meat at a fraction of the cost of conventional meat, which could bring an end to factory farming. Even so, it would likely take years to build out the infrastructure to produce lab-grown meat and make it economically competitive with traditional agriculture. Projects to improve conditions on factory farms could still have massive impact in the interim. More pessimistically, we probably won’t end factory farming through technology alone. People have been hesitant to switch to meat substitutes and lab-grown meat. Multinational corporations have significant financial interests in factory farming, and they will also use AI to promote their position. Cultural, political, and economic changes will be necessary.

We also shouldn’t assume that “aligned” AI will seek to eliminate factory farming. That assumes a morally perfected AI, not one that aligns with the revealed preferences of most humans today. Instead, AI might exacerbate factory farming by making it more efficient and profitable, leading to a radical increase in its scale. Some of the most important work to prevent this from happening (e.g., changing present human values, increasing the representation of animal-friendly sources in training data, reducing the power of vested interests, etc.) is first-order work in the animal welfare cause area, not direct AI work.[7]

What about research? Even if superintelligent AI can’t solve the political or cultural challenges we face, surely it can solve the epistemic challenges better than we can. So even if advocacy or movement building work is not undermined by the AI revolution, there’s no reason to waste time doing feeble human research when AI will do much better. While this might be true for some kinds of research, there are reasons to be skeptical that AI will render all kinds of human research obsolete.

Just as present-day LLMs are trained on human sources, even superintelligent AIs may (at least initially) depend on human-provided data. Right now, if you wanted a report on, say, the incidence of footpad dermatitis in farmed chickens, you’d need to pay humans to do it. While it’s possible that this research could be automated (via surveillance drones or sensors in the floor of chicken cages), it might remain cheaper, easier, and more effective to get a human to do it than to build the infrastructure necessary for AIs to do it. In the longer term, however, this kind of research does seem highly susceptible to AI replacement.

While some are optimistic about AI’s ability to automate scientific discovery, both technical and ethical concerns may prevent AIs from doing certain kinds of experiments, especially those on humans. Lastly, we might be skeptical that AI will “solve philosophy.” Moral disputes have survived millennia of development by very intelligent humans, and we suspect that this intransigence can’t be (fully) explained by a lack of intelligence.

Perhaps our skepticism toward “AI will solve it” should be chalked up to a failure of imagination. Superintelligence will come up with solutions that we can’t imagine. It might be so persuasive that it yields significant cultural and political change. Nevertheless, betting on AI to solve serious problems on its own, independent of the work we could be doing today, is risky. The same failure of imagination might also prevent us from seeing all the ways that AI control or AI research could go horribly awry, or the blind spots AI might have.

Which areas will be solvable by AI?

If the AI revolution is on the horizon, then it may be wise to prioritize projects that cannot be more efficiently solved by AI in the near future. Identifying these projects will be a more effective strategy for cause prioritization than a naive heuristic like “AI is good, everything else is bad.” Distinguishing AI-solvable projects from those that need human involvement is highly uncertain. Future AI systems will likely be very different from today’s, so we can’t just extrapolate from what today’s AIs are good at.[8] Nevertheless, here’s a tentative framework for assessing the chances that a project is AI-solvable.

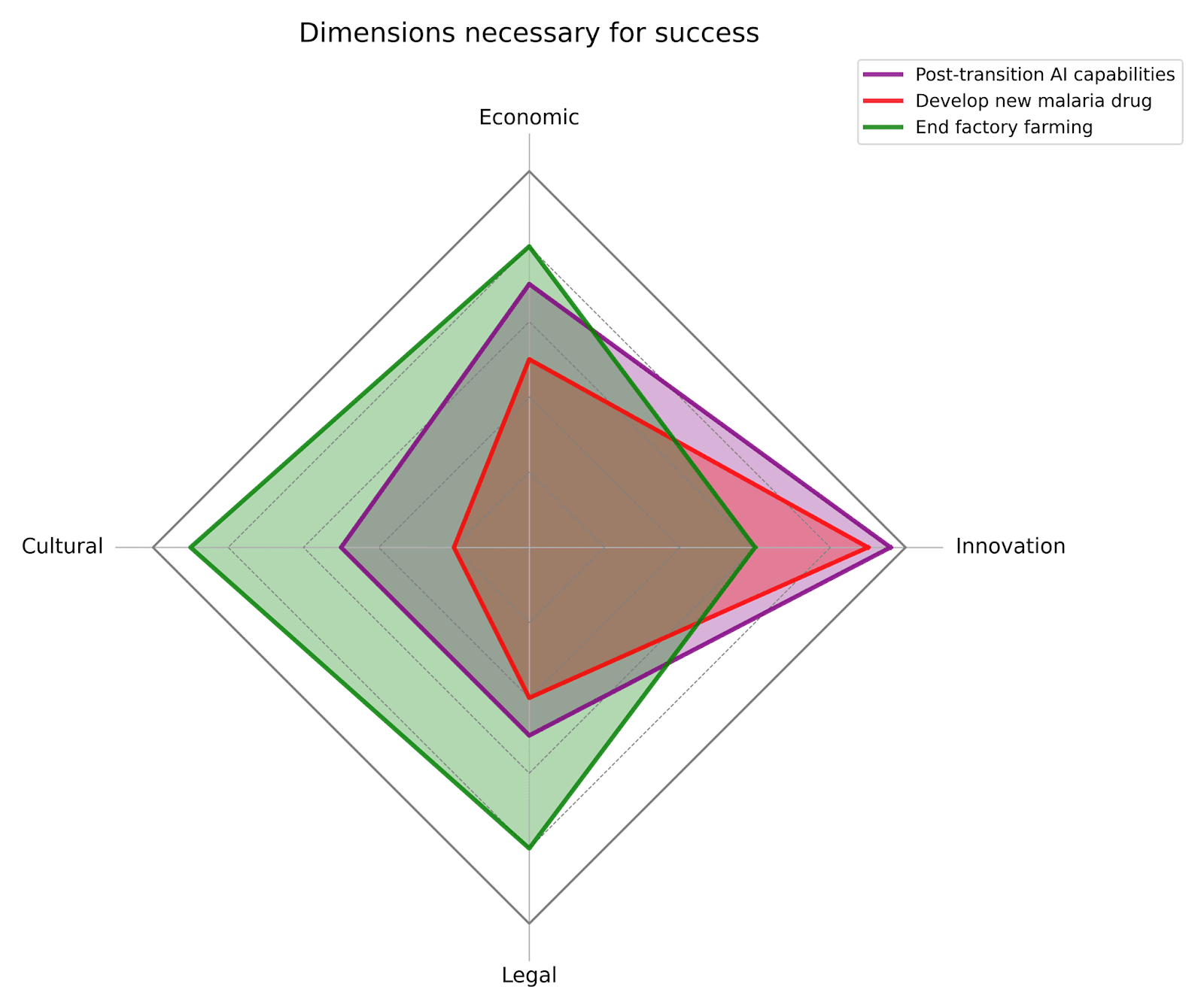

Dimensions necessary for success

How many things need to go well in order for the project as a whole to succeed? How many different battles on different fronts must be won? Important dimensions include:

- Epistemic/Innovation[9]

- Cultural/Social

- Political/Legal

- Economic

All else being equal, the more dimensions that need to go well for a project to succeed, and the more independent they are, the less likely it is that the project will be solved by AI alone.
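The "more dimensions, less likely" point can be made concrete with a toy calculation. The sketch below is a minimal Python illustration, not part of the framework itself. It assumes, hypothetically, that each dimension has some probability that AI alone secures success on it and that these events are independent, so the chance that AI solves the whole project is their product. The probabilities are invented for illustration, not estimates.

```python
from math import prod


def p_solved_by_ai_alone(per_dimension: dict[str, float]) -> float:
    """Chance that AI alone secures success on every required dimension,
    assuming (hypothetically) that the dimensions are independent."""
    return prod(per_dimension.values())


# Hypothetical probabilities that AI alone succeeds on each dimension.
innovation_only = {"epistemic/innovation": 0.8}
four_dimensions = {
    "epistemic/innovation": 0.8,
    "cultural/social": 0.3,
    "political/legal": 0.3,
    "economic": 0.6,
}

print(round(p_solved_by_ai_alone(innovation_only), 3))  # 0.8
print(round(p_solved_by_ai_alone(four_dimensions), 3))  # 0.043
```

Positively correlated dimensions (say, economic gains that also shift politics) would make the joint probability higher than this product, which is why independence matters to the argument.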

Most projects involve more dimensions than is commonly recognized. You might think, for instance, that drug discovery is purely Innovative and therefore that many global health projects will be AI-solvable. However, recent changes in the political landscape offer distressing reminders about the limits of Innovation alone. Innovation has given us extraordinary treatments for HIV/AIDS, but political and cultural changes in the US have eliminated the infrastructure and financial support to implement these treatments. Failure on the Political/Legal dimension is estimated to cost 14 million lives between 2025 and 2030. Other dimensions can even flip the sign of AI projects’ value: the same project might be hugely negative if harnessed to a malevolent dictator but hugely positive if harnessed to a democratic regime. Work on these other dimensions therefore becomes crucial.

Is AI effective on those dimensions?

Obviously, if success requires progress on dimensions that AI is ill-suited for, then the project is far less likely to be AI-solvable. Currently, we are most confident in AI’s ability to overcome Innovation challenges.[10] AI will likely yield efficiencies that solve problems on the Economic dimension as well. AI’s prospects for spurring cultural or political change in desirable directions are much more uncertain. AI already boasts a Nobel Prize in Chemistry; its prospects for a Nobel Peace Prize are much murkier.

Will AI work in opposite directions on those dimensions?

When assessing AI-solvability, don’t just ask: will AI make progress on this dimension? You should also ask: will AI also inhibit progress on this dimension? We need to remember AI’s potential downsides, including the fact that the opposition will be using AI too.

For some projects, AI will probably only help. AI can be used to develop new malaria drugs, and there is no Big Malaria that will use AI to make malaria more drug resistant.[11] For others, AI cuts both ways. It can help facilitate democratic deliberation and consolidate authoritarian power. It can be used to develop alternative proteins and to make factory farming more profitable. It can be used to make scientific discoveries and to produce misinformation. When AI can work in opposing directions, human work will be important in determining which side comes out ahead.

Timeline of impact

Do a project’s forecasted impacts come in 6 months? 5 years? 50 years? Shorter timelines to impact will sometimes make projects less AI-solvable. If you are confident that your project will achieve real good before AI disruption, without too much of an opportunity cost, then you should be less worried about it being undermined. Indeed, if you are risk averse, you might favor short-term wins. After all, it would be unfortunate to pass on, say, relieving millions of hours of chicken suffering over the next year because you’re uncertain about what AI will do in 10 years.[12] On the other hand, with longer timelines to impact, uncertainty about what will happen with AI in the interim compounds, raising the chance that your project will be undermined.
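One way to see how uncertainty compounds with time to impact is a constant-hazard model. The Python sketch below is a minimal illustration under a loud assumption: that an undermining AI disruption arrives at a constant yearly hazard rate (0.1 here, chosen only for illustration), so the chance that a payoff lands first decays exponentially with time to impact. It models only the risk of being undermined, not cases where AI amplifies the work.

```python
import math


def p_impact_before_disruption(years_to_impact: float, annual_hazard: float) -> float:
    """Probability that a project's payoff lands before an AI disruption that
    would undermine it, assuming a constant (hypothetical) yearly hazard rate."""
    return math.exp(-annual_hazard * years_to_impact)


HAZARD = 0.1  # hypothetical hazard rate: roughly a 10% chance of disruption per year

for years in (0.5, 5, 50):
    print(years, round(p_impact_before_disruption(years, HAZARD), 3))
# 0.5 0.951
# 5 0.607
# 50 0.007
```

Under these made-up numbers, a six-month project is almost untouched, while a 50-year path to impact is almost certainly overtaken, unless, as discussed next, the work is designed to carry its value across the transition.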

It would be a mistake, however, to conclude that only work with a short timeline to impact can avoid being AI-solvable. Some short-term work might build “levers that reach across the paradigm shift” (as these authors aptly put it). For example, some movement building or advocacy work might have a path to impact 50 years in the future. If that work makes the AI transition go better, and if AI serves as an effect amplifier, then the AI revolution could enhance the value of that work rather than undermine it. Suppose animal advocacy makes AI more animal-friendly, and AI futures without this work would have contained far more factory-farmed animals than today. Then working on animal advocacy is more important, not less, given the impending AI transition.

Argument 2: Misaligned AI will destroy us

On the opposite side of the optimism-pessimism spectrum, you might think that AI is the only work worth doing now because if we don’t get it right, nothing else matters. If you believe that “the most likely result of building a superhumanly smart AI, under anything remotely like the current circumstances, is that literally everyone on Earth will die,” then the most effective work on behalf of animals or impoverished humans is to stop misaligned superintelligent AI from happening. One reason why AI has disrupted traditional cause prioritization is that it matters from many different perspectives: animal- and human-focused, longtermist or short-termist, totalist or harm-focused, etc.

However, these features are not unique to AI. If there’s a worldwide nuclear war, very little[13] we do today matters either, but it’s not commonly believed that we should stop working on everything but the prevention of nuclear war. What could justify allocating more resources to AI than to nuclear war, and indeed, shifting resources away from nuclear war (and other x-risks) toward AI?

We need to weigh the immense consequences of x-risks against their probabilities of occurring and how much our actions can reduce those probabilities. For many x-risks, the probabilities may be low enough that x-risk projects don’t clearly have higher expected value than other causes (and if you’re averse to spending on projects with high chances of doing nothing, you’ll be even less inclined to invest in x-risk). It’s commonly believed that the probability of x-risk from misaligned AI is significantly higher than that from other threats; for example, in The Precipice, Ord estimates that over the next 100 years, there is a 1/1000 chance of x-risk due to nuclear weapons and a 1/10 chance due to AI (numbers he has since slightly updated). That is a factor of 100, but it is a difference in degree, not in kind.

A second reason is that the consequences of AI x-risks may be worse than those of other x-risks, even nuclear war. AI catastrophe may result in total extinction rather than diminishment, or it may keep us all alive in a persistent terrible state. Third, for various reasons, AI projects might have greater impact than nuclear ones: AI is still being developed (working on it now is like working on nuclear weapons in 1943), power over AI might be more distributed than power over nuclear weapons, which is concentrated in nation-states, etc.

High probabilities of doom and opportunities for effective intervention may constitute a reason for prioritizing AI work now. The threat of doom also chips away at some of the expected value of other work. But unless you have very high probabilities of doom, a lot of the value stays intact. In the, say, 90% of worlds where we avoid AI x-risk, work in animal welfare and global health will still have been important. Lastly, there are many doom scenarios that don’t involve all of us dying. Work in non-AI cause areas that helps us avoid these s-risk futures (e.g. worlds with entrenched totalitarianism or vastly expanded factory farming) becomes more important, not less.
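The claim that most of the value survives unless doom is very likely is simple expected-value arithmetic. The Python sketch below is a minimal illustration under a deliberately crude assumption: a non-AI project's value is lost entirely in doom worlds and fully intact otherwise. The value unit and probabilities are hypothetical, and the sketch ignores the s-risk futures in which non-AI work becomes more valuable.

```python
def expected_value_of_non_ai_work(value_if_no_doom: float, p_doom: float) -> float:
    """Expected value of a non-AI project, assuming (as a simplification)
    that its value is lost entirely in doom worlds and fully intact otherwise."""
    return (1 - p_doom) * value_if_no_doom


VALUE = 100.0  # hypothetical value of the project in worlds without AI x-risk

for p_doom in (0.1, 0.5, 0.9):
    share = expected_value_of_non_ai_work(VALUE, p_doom) / VALUE
    print(f"p(doom) = {p_doom:.0%}: {share:.0%} of the value survives")
# p(doom) = 10%: 90% of the value survives
# p(doom) = 50%: 50% of the value survives
# p(doom) = 90%: 10% of the value survives
```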

Argument 3: AI will change the landscape, we should wait for the dust to settle

Suppose you don’t buy that AI will necessarily be either extremely good or extremely bad, such that it will either fix all our current problems or render them moot by killing us all. Even so, nearly everyone believes that the AI revolution will have significant and highly unpredictable effects. It will cause structural changes to technological, cultural, political, and economic systems. These changes will affect:

- The cost-effectiveness of actions

- The possibility space

- The problems that are most pressing

- Political feasibility

- Common value systems against which projects are evaluated

Et cetera.

In light of this uncertainty horizon, why should we trust that any of our current cause prioritization decisions and favored interventions will make sense after AI? The best option now is to grow our resources, keep our options open, and wait until the dust settles in a new AI world before we resume our work.

A first observation is that this scenario is not unique to AI. Since we will typically do better with more information, there’s always a case to be made that we should wait for science to advance before acting. The epistemic challenges presented by AI differ in degree, not in kind, which suggests to us that decisions about when to hold off for more information will still require case-by-case judgments.

Second, while many things will change with AI, some things probably won’t. For example, the relative cost-effectiveness of corporate campaigns might change if AI further consolidates the poultry industry, but will facts about whether chickens are sentient and what causes them to suffer also change? Maybe. With the assistance of AI science, we might genetically engineer chickens that don’t suffer or suffer in different ways. But we shouldn’t be confident that this will happen, and even if it does, more knowledge today will equip us to more quickly understand the changes that AI brings about. Other domains might be even more resistant to AI disruption. Some will take on extra importance during the turbulent AI transition. Human psychology and moral philosophy are key examples.

Third, it’s not obvious that uncertainty about AI should undermine your theory of change. If you really have no idea how AI will affect the future (with some possibilities helping your cause and others hindering it), then these possibilities can cancel each other out, leaving your current theory of change your best bet in expectation.[14] Fourth, pausing work in a cause area can cause it to lose expertise and resources. If we hold off until some unspecified AI future arrives, we risk having no one around to help us navigate it.
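The third point, that symmetric uncertainty can cancel out in expectation, can be checked with a small calculation. The Python sketch below assumes a hypothetical set of AI scenarios, each with a probability and a multiplier on the value of your current theory of change; the scenarios and numbers are illustrative only. Exact fractions are used so the expectation comes out exactly.

```python
from fractions import Fraction


def expected_multiplier(scenarios: list[tuple[Fraction, Fraction]]) -> Fraction:
    """Probability-weighted multiplier on the value of a theory of change."""
    total_p = sum(p for p, _ in scenarios)
    if total_p != 1:
        raise ValueError("scenario probabilities must sum to 1")
    return sum(p * m for p, m in scenarios)


# Hypothetical scenarios: (probability, multiplier on the current plan's value).
# A multiplier above 1 means AI helps the cause; below 1 means it hinders it.
symmetric_ignorance = [
    (Fraction(1, 4), Fraction(2)),  # AI amplifies the work
    (Fraction(1, 4), Fraction(0)),  # AI renders the work moot
    (Fraction(1, 2), Fraction(1)),  # AI leaves the work's value unchanged
]

print(expected_multiplier(symmetric_ignorance))  # 1
```

An expected multiplier of 1 means the plan is worth, in expectation, what it was worth before AI was considered. The spread of outcomes is wider, though, which matters if you are risk averse.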

Lastly, like the first two versions of the argument, this argument threatens to undermine work in AI as well. AI will be very different in the future, as will the legal and social environment around it, in ways that will render some of our current work moot. The best AI projects will be ones for which we can tell, pre-AI, that the intervention will robustly improve the AI future, despite our deep uncertainty about what that future will be like. For many projects, once we acknowledge that our actions may have only a small effect (making it some fraction of a percentage point more likely that the AI revolution ends up in state 1 rather than state 2), and that we are uncertain whether state 1 really is preferable to state 2, it’s less obvious that this work is cost-effective, let alone the only cost-effective thing we could be doing.
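The interaction between a small effect and uncertainty about which state is better can be sketched numerically. The Python example below is a minimal toy model under stated assumptions: a project raises the probability of state 1 by a small amount, and state 1 is better than state 2 by some fixed value gap with a given probability and worse by the same gap otherwise. All names and numbers are hypothetical.

```python
def ev_of_shifting_odds(delta_p: float, p_state1_better: float, value_gap: float) -> float:
    """Expected value of making state 1 more likely by delta_p, assuming
    (hypothetically) state 1 is better than state 2 by value_gap with
    probability p_state1_better and worse by the same gap otherwise."""
    expected_gap = p_state1_better * value_gap - (1 - p_state1_better) * value_gap
    return delta_p * expected_gap


DELTA_P = 0.001     # hypothetical: a tenth of a percentage point
VALUE_GAP = 1000.0  # hypothetical value difference between the two states

for p_better in (0.9, 0.6, 0.5):
    print(p_better, round(ev_of_shifting_odds(DELTA_P, p_better, VALUE_GAP), 3))
# 0.9 0.8
# 0.6 0.2
# 0.5 0.0
```

Even with a large value gap, the expected payoff shrinks toward zero as confidence that state 1 is better approaches a coin flip, which is why such projects need to be compared against concrete alternatives rather than assumed to win.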

The general structure of the argument

While these three arguments posit different scenarios for how AI undermines other cause areas, they share a common structure.[15]

1. The AI revolution is a bottleneck through which the value of the future flows. Other cause areas don’t have significant independent effects on the future.

2. If the value of the future bottlenecks through AI, then the only effective thing to do is work to ensure that the AI revolution goes well.

3. The only effective work to make sure the AI revolution goes well is direct work on AI safety, alignment, etc.

C: Therefore, the only effective thing to do is direct work on AI safety, alignment, etc.

We’ve presented objections to specific versions of this argument, but it’s worth reflecting on the more general reasons to be skeptical of this kind of argument.

Objections to Premises 1 and 2: The chances of a strong AI bottleneck are overestimated

Premise 1 has two parts: the AI revolution will occur, and its effects will be so far-reaching that all other cause areas’ effects will be mediated, moderated, or cancelled by AI. An extreme interpretation of the premise asserts that transformative AI is a single event, and much of the future causally depends on that event. Even more extreme: that this event will either go very well or very poorly, either resulting in utopia or extinction (or worse). If this binary holds, then we get a dilemma for non-AI work. If the AI revolution turns out badly, then none of the other cause areas matter because we’re all dead (as in Argument 2). If it turns out well, then none of the cause areas matter because it will solve all of our problems (as in Argument 1). We’ve examined these horns of the dilemma, but it’s worth questioning the “binary single event” assumption.

While bearishness about the technological development of transformative AI may be increasingly rare these days, there is still reason to be skeptical that AI will quickly and completely change social, political, and economic structures. Timelines matter here. Suppose you’re doing research on healthcare infrastructure development in a low- or middle-income country (LMIC). You grant that transformative AI will eventually change the facts on the ground and the best interventions so much that your present research won’t apply. If those changes happen next year, then your current project is probably useless, but if they come 20 years from now, it might still be extremely effective.

Instead of thinking of the AI revolution as a single event, we should probably think of it as many different events spread out over time and space: not as a single force, but as many different forces with disparate and context-dependent impacts. If defenders of the singularity hypothesis are right, then a specific event, namely the development of a self-improving superintelligence, will radically change everything to come. For those skeptical of the view, the AI revolution looks more like a diffuse and protracted era, and the idea of an imminent AI bottleneck is less plausible.

It is not easy to predict how much change new technologies will bring. Many theorists predicted that the development and deployment of the atomic bomb would completely transform warfare and international relations, and perhaps much more. In 1951, for example, Bertrand Russell wrote:

“Before the end of the present century, unless something quite unforeseeable occurs, one of three possibilities will have been realized. These three are: —

1. The end of human life, perhaps of all life on our planet.

2. A reversion to barbarism after a catastrophic diminution of the population of the globe.

3. A unification of the world under a single government, possessing a monopoly of all the major weapons of war.”

He was, thankfully, wrong. Even in the areas most disrupted by nuclear weapons, progress made before the nuclear transition (e.g. in conventional weapons, traditional statecraft, international accords) continued to be important. Today’s predictors of AI transformation might be right where Russell was wrong. However, we should retain some credence that projects in non-AI cause areas will continue to have some independent effect on the future.

Objection to Premise 3: AI work doesn’t win by default

Showing that non-AI work is useless (intractable, net negative, etc.) does not show that AI work is useful (tractable, net positive, etc.). Indeed, if you think that the AI revolution is unpredictable, path-dependent, and massive in scope (the reasons why AI will supposedly undercut other kinds of work), then perhaps you should be just as skeptical about the prospects of current work in AI. So even if you’re evaluating an animal welfare or GHD project on a short timeline (e.g. the next 5 years, before AGI), it might be more cost-effective than a candidate AI project. If you are risk averse, those predictable gains will be even more appealing.

There’s also the risk that current work on AI, even with the aim of promoting AI safety, increases the probability of transformative AI. If you think that transformative AI may be a very bad thing, AI work may be net negative.[16] In that case, shifting to non-AI cause areas might have better prospects, in part because it would reduce the chances that we’re in a world where those non-AI cause areas are undermined.

Objection to Premise 3: Work on other cause areas causally contributes to the AI revolution going well

Suppose that the AI revolution can go well or badly, and how well or badly it goes will determine much of the future. We should therefore focus our efforts on making sure this event goes well. But it doesn’t follow that working in other cause areas is useless. In particular, this work might constitute work to make sure the AI revolution goes well.

One way that the AI revolution could go poorly is that it locks in bad moral values. Instilling the right values requires that we have some good idea of what the right values are (or, at least, what a good process of moral reflection looks like) and have some way of getting AGI to adopt them. This suggests that we should be investing in research across a wide range of non-AI areas. For example, to make sure that AGI has animal-friendly values, we should make sure that AI’s training data contains information about the morally relevant capacities of animals, and we should engage in advocacy to convince those who control AI to direct it toward animal-friendly ends.

The world is not neatly compartmentalized. Progress in one area often supports progress in others. For example, by investing in biosecurity, we hedge against non-AI existential threats, and we also build expertise and infrastructure (like pathogen surveillance and international cooperation frameworks) that could be invaluable in an AI crisis too (say, if we ever needed global coordination on AI similar to a pandemic response). More speculatively, if we improve climate prospects or resource access, countries may become more resilient, prosperous, and cooperative, potentially easing geopolitical tensions (which is critical when managing AI).[17] If you truly fear an AI-related catastrophe, one wise strategy is to strengthen society on all other fronts, so that if we face AI upheaval, we do so as a healthier, wealthier, more unified world. That could make the difference between riding out the turbulence and collapsing under it. If you are an optimist, then you should be interested in developing systems for capitalizing on AI’s considerable benefits: fair and open governments, energy infrastructure, mechanisms for sharing wealth and power, etc.

Investing early in non-AI infrastructure therefore serves two roles: it amplifies any upside that advanced AI can unlock, and it provides a hedge against the downside of moral or institutional stasis. By keeping robust movements, policy channels, and R&D ecosystems alive across cause areas, we greatly reduce the risk that a powerful but blinkered AI era locks humanity into a future we later recognize as deeply sub-optimal.

Conclusion

Arguments for the view that an impending AI revolution undermines all other cause areas sometimes rest on strong assumptions: that it has a high probability of rendering our current problems moot (either by solving them or killing us); that direct AI work is the only way to nudge the AI revolution in good directions; and that all aspects of the future strongly bottleneck through AI. We've outlined reasons to question each of these assumptions.

Without these assumptions, the case for an exclusive focus on AI is weaker. A diversified portfolio of causes is, among other things, a hedge against our uncertainty about AI timelines, trajectories, and tractability. This diversity also preserves valuable domain expertise and institutional knowledge that will be crucial for navigating a post-AI-transition world.

Particular AI projects really might be the most important ones that we could be pursuing now. But the case for these projects should be made on their specific merits, not a sweeping cause-level heuristic that privileges AI and downgrades other cause areas. It also may be necessary to factor AI into your plans, whatever they happen to be. However, it doesn't follow that it is fruitless to work on other things.

Acknowledgements

This is a project of the Worldview Investigation Team at Rethink Priorities. Thank you to Noah Birnbaum, Marcus Davis, Oscar Delaney, Laura Duffy, Bob Fischer, David Moss, and Derek Shiller for helpful feedback on this post. If you like our work, please consider subscribing to our newsletter. You can explore our completed public work here.

[1]

Anecdotally, this was a common sentiment at EAG Bay Area, and we often heard it mentioned by people working in non-AI areas to express skepticism about their current projects. We’ve also heard potential funders express skepticism about the value of investing in non-AI cause areas. There’s pressure to have a theory of change that either achieves lots of value in the next couple of years, before the arrival of AGI, or is tailored to achieve value in an AI future.

[2]

Considered liberally, to include AI safety, alignment, capabilities, and other research.

[3]

AI causes might be more cost-effective than projects in other areas, even if AI doesn’t undercut those projects’ efficacy. Assessing the overall effectiveness of these broad cause areas is too big a project to take on here. For our recent work on these big questions, see our CURVE and CRAFT sequences.

[4]

For a very helpful analysis of how AI affects within-cause prioritization in the animal welfare space, see this wide-ranging discussion which makes many of the same points we make here.

[5]

We are not arguing here that these gains are significant compared to various AI projects. Our question here is whether AI undermines the value of these projects, not whether AI outweighs them.

[6]

Again, for a more detailed analysis of this case study, see this post.

[7]

If you have a broader conception of what counts as “work in AI” such that animal advocacy projects count, then the target argument (that AI undermines work in other areas) doesn’t have much force. Accepting the conclusion wouldn’t entail redirecting resources away from projects in animal welfare, GHD, etc.

[8]

Already, we’ve seen quite a few predictions that LLMs can’t do some kind of task proven false, e.g. by the advent of reasoning models. On the other hand, LLMs continue to show some surprising failures. The lesson we draw is that we aren’t very good at predicting what AIs will be good or bad at.

[9]

We could break this down further. AI is currently best at solving “problems with certain characteristics, such as huge combinatorial search spaces, large amounts of data, and a clear objective function to benchmark performance against”. AI is not (currently) as good at problems requiring insight and discovery in less well-defined problem spaces or with sparse data.

[10]

If we subdivide this dimension, we are most confident that AI can overcome challenges that involve large search spaces and a clear measure of success.

[11]

Though malevolent actors may design bioweapons to do so. There could also be more diffuse pathways in which an AI-changed society comes to care less about preventing malaria deaths (e.g. involving cultural changes or prioritization of social problems that AI shows promise in solving).

[12]

Especially so if the things you value aren’t fungible, such that greater effects in the future don’t compensate for missed opportunities today.

[13]

Efforts to make civilization resilient to massive catastrophes or to transmit information and supplies to survivors would still be useful.

[14]

These are like cases of Greaves’s “simple cluelessness”.

[15]

This formulation of the argument is admittedly too strong. You can believe that work on AI is the most important thing without thinking that other causes are useless. It’s also too vague. For example, what do we mean by “effective”? Cost effective? Tractable? Likely to make a difference?

[16]

Historically, much of the acceleration of AI capabilities (e.g. founding of companies, funding, etc.) has been caused by people who are motivated by AI x-risk concerns. There’s a plausible argument to be made that AI x-risk fears have significantly accelerated AI timelines and risks.

[17]

Potential convergence here doesn’t strike us as too surprising or suspicious, as there are very plausible causal mechanisms by which x-risk preparedness and avoidance would also help us prepare for and avoid bad AI outcomes. Note that we are not trying to make the case that these interventions would be the most effective approaches for solving AI, only that they would still help the AI transition go well. Of course, more work would need to be done to establish these causal mechanisms.

Rohin Shah @ 2025-07-27T14:36 (+38)

We are disputing a general heuristic that privileges the AI cause area and writes off all the others.

I think the most important argument towards this conclusion is "AI is a big deal, so we should prioritize work that makes it go better". But it seems you have placed this argument out of scope:

[The claim we are interested in is] that the coming AI revolution undercuts the justification for doing work in other cause areas, rendering work in those areas useless, or nearly so (for now, and perhaps forever).

[...]

AI causes might be more cost-effective than projects in other areas, even if AI doesn’t undercut those projects’ efficacy. Assessing the overall effectiveness of these broad cause areas is too big a project to take on here.

I agree that lots of other work looks about as valuable as it did before, and isn't significantly undercut by AI. This seems basically irrelevant to the general heuristic you are disputing, whose main argument is "AI is a big deal so is way more important".

Hayley Clatterbuck @ 2025-07-28T16:11 (+6)

We wanted to focus on a specific and somewhat manageable question related to AI vs. non-AI cause prioritization. You're right that it's not the only important question to ask. If you think the following claim is true - 'non-AI projects are never undercut but always outweighed' - then it doesn't seem like an important question at all. I doubt that claim holds generally, for reasons that were presented in the piece. When deciding what to prioritize, there are also broader strategic questions that matter - how is money and effort being allocated by other parties, what is your comparative advantage, etc. - that we don't touch at all here.

Rohin Shah @ 2025-07-30T07:54 (+6)

If you think the following claim is true - 'non-AI projects are never undercut but always outweighed'

Of course I don't think this. AI definitely undercuts some non-AI projects. But "non-AI projects are almost always outweighed in importance" seems very plausible to me, and I don't see why anything in the piece is a strong reason to disbelieve that claim, since this piece is only responding to the undercutting argument. And if that claim is true, then the undercutting point doesn't matter.

Neel Nanda @ 2025-07-28T16:41 (+4)

When you say you doubt that claim holds generally, is that because you think that the weight of AI isn't actually that high, or because you think that AI may make the other thing substantially more important too?

I'm generally pretty sceptical about the latter - something which looked like a great idea not accounting for AI will generally not look substantially better after accounting for AI. By default I would assume that's false unless given strong arguments to the contrary.

Hayley Clatterbuck @ 2025-07-28T20:55 (+6)

I think there are probably cases of each. For the former, there might be some large interventions in things like factory farming or climate change (i) that could have huge impacts and (ii) for which we don't think AI will be particularly efficacious or impactful.

For the latter, here are some cases off the top of my head. Suppose we think that if AI is used to make factory farming more efficient and pernicious, it will be via X (idk, some kind of precision farming technology). Efforts to make X illegal look a lot better after accounting for AI. Or, right now, making it harder for people to buy ingredients for biological weapons might be a good bet but not a great bet. It reduces the chances of bioweapons somewhat, but knowledge about how to create weapons is the main bottleneck. If AI removes that bottleneck, then those projects look a lot better.

Neel Nanda @ 2025-07-26T03:09 (+24)

I agree with the broad critique that "Even if you buy the empirical claims of short-ish AI timelines and a major upcoming transition, even if we solve technical alignment, there is a way more diverse set of important work to be done than just technical safety and AI governance."

But I'm concerned that reasoning like this can easily, and implicitly, lead people to justify incremental adaptations to what they were already doing, answering the question "is what I'm doing useless in light of AI?" rather than the question that actually matters: "given my values, could I be doing things that have substantially more impact?" It would just be pretty surprising to me if the list of the most important cause areas conditional on short-ish timelines was very similar to the list conditional on the reverse, and I expect there's a bunch of areas being dropped here.

Hayley Clatterbuck @ 2025-07-28T16:01 (+19)

By calling out one kind of mistake, we don't want to incline people toward making the opposite mistake. We are calling for more careful evaluations of projects, both within AI and outside of AI. But we acknowledge the risk of focusing on just one kind of mistake (and focusing on an extreme version of it, to boot). We didn't pursue comprehensive analyses of which cause areas will remain important conditional on short timelines (and the analysis we did give was pretty speculative), but that would be a good future project. Very near future, of course, if short-ish timelines are correct!

Michael St Jules 🔸 @ 2025-07-23T23:21 (+11)

Thanks for writing this!

I'm wondering about your factory farming analysis:

Consider the case of factory farming.[6] Even if aligned AI is committed to or neutral about animal wellbeing, it’s unclear how, or how quickly, it would “solve” factory farming. It’s possible that AI could invent a method for producing cultured meat at a fraction of the cost of conventional meat, which could cause an end to factory farming. Even so, it would likely take years to build out the infrastructure to produce lab-grown meat and make it economically competitive with traditional agriculture.

How many years do you have in mind here? I could imagine this going pretty quickly, and much faster than historically for growing industries, because:

- AI lets us skip to much more efficient alt protein production processes, instead of iterative improvement over years of R&D.

- AI designs faster and more efficient resource extraction and infrastructure building processes. Or, AI designs alt protein production processes that can make good use of other processes and the market at the time.

- Capital investment could be very high because of

- AI-related economic growth,

- interest from now far wealthier AI investors, including billionaire tech (ex-)CEOs, and/or

- proof of efficient alt protein production process designs, rather than investors waiting for more R&D.

The time it takes to build alt protein production plants could be the main bottleneck, and many could be built in parallel, enough to exhaust expected demand after undercutting conventional animal products. Maybe this takes a couple of years after efficient alt protein processes are designed by AI?

Fairly speculative, of course. Seems like high ambiguity here.

More pessimistically, we probably won’t end factory farming through technology alone. People have been hesitant to switch to meat substitutes and lab-grown meat. Multinational corporations have significant financial interests in factory farming, and they will also use AI to promote their position. Cultural, political, and economic changes will be necessary.

I agree with this. However, I wonder how far off sufficient economic changes would be. People could become wealthy enough to pay for (or subsidize others for) high welfare animal products, and this could eliminate the rest of factory farming. Transitioning housing types could take some time, but with enough money thrown at it, it could be very quick. Again, fairly speculative.

Hayley Clatterbuck @ 2025-07-24T17:05 (+15)

Hi Michael!

You've identified a really weak plank in the argument against AI solving factory farming. I agree that capacity-building is not a significant bottleneck, for a lot of the reasons you present.

I think the key issue is whether there will be social and legal barriers that prevent people from switching to farmed animal alternatives. These barriers might prevent the kinds of capacity build-up that would make alternative proteins economically competitive.

I think I might be more pessimistic than you about whether people want to switch to more humane alternatives (and would do so if they were wealthier). That's probably the case for welfare-enhanced meat (as we see with many affluent customers today). I'm less confident about willingness to switch to lab-grown meat or other alternatives.

I'm quite curious about a scenario in which massive capacity for producing alt proteins is built without cultural buy-in, making alt proteins far cheaper than animal proteins. The economic incentives to switch could cause quite swift cultural changes. But I'm quite uncertain when trying to predict culture changes.

Brad West🔸 @ 2025-07-25T21:59 (+6)

Thanks for this thoughtful analysis! I appreciate the systematic approach to examining how AI might affect other cause areas.

I noticed that the article primarily engages with a particularly strong version of the "AI undermines other causes" argument - that AI renders work in other areas "useless, or nearly so." While you acknowledge you're "disputing a general heuristic that privileges the AI cause area and writes off all the others," much of the analysis focuses on rebutting this extreme position.

There's a more moderate version that deserves engagement: that transformative AI significantly reduces (but doesn't eliminate) the expected value of other cause areas - perhaps by 50-90%. This weaker claim avoids many of the vulnerabilities you identify while still having profound implications for resource allocation. Even if factory farming work retains 30% of its expected value in an AI-transformed world, that might still justify shifting substantial resources toward AI work.

Would your analysis change if engaging with this more moderate position? Some of your arguments (like the value of near-term wins) would still apply, while others might need reframing around questions of degree rather than kind. The practical implications for funding and career decisions could still be quite significant even under this weaker assumption.

Hayley Clatterbuck @ 2025-07-27T17:35 (+6)

You make a helpful point. We've focused on a pretty extreme claim, but there are more nuanced discussions in the area that we think are important. We do think that "AI might solve this" can take chunks out of the expected value of lots of projects (and we've started kicking around some ideas for analyzing this). We've also done some work about how the background probabilities of x-risk affect the expected value of x-risk projects.

I don't think that we can swap one general heuristic (e.g. AI futures make other work useless) for a more moderate one (e.g. AI futures reduce EV by 50%). The possibilities that "AI might make this problem worse" or "AI might raise the stakes of decisions we make now" can also amplify the EV of our current projects. Figuring out how AI futures affect cost-effectiveness estimates today is complicated, tricky, and necessary!

Ian Turner @ 2025-07-25T19:19 (+6)

Odd to me that this article doesn't mention LLINs (long-lasting insecticidal nets) at all, as they are probably the EA program that has received the most funding. Despite the name, LLINs only last about 3 years or so, so funding decisions only need to take into account the short-term duration of these programs.

In other words, I don't think AI advances should affect your willingness to fund bednets unless you expect one of the arguments in this piece to take effect in the next 4 years or so (which is an even more aggressive timeline than saying we achieve AGI in the next 4 years).

I would expect that many other EA-funded programs turn out to have a similar analysis when examined in this way.

Denkenberger🔸 @ 2025-07-25T06:03 (+3)

I think you make a lot of good points as to why other causes should not have their funding reduced that much. But I didn't see you making the point that in particular nuclear and pandemic risks could increase because of AI, so the case for funding them remains relatively strong. So maybe a compromise is reducing funding for global poverty/animal welfare/climate projects that have long timelines for impact, increasing funding for AI, and maintaining it for nuclear and pandemic? My understanding of what is happening now is that global poverty/animal welfare funding is being maintained, but non-AI X-risk funding has fallen dramatically.

Hayley Clatterbuck @ 2025-07-25T20:22 (+2)

Thanks for the helpful addition. I'm not an expert in the x-risk funding landscape, so I'll defer to you. Sounds like your suggestion could be a sensible one on cross-cause prio grounds. It's possible that this dynamic illustrates a different pitfall of only making prio judgments at the level of big cause areas. If we lump AI in with other x-risks and hold cause-level funding steady, funding between AI and non-AI x-risks becomes zero sum.