Noah Birnbaum's Quick takes

By Noah Birnbaum @ 2025-07-16T16:56 (+4)

Noah Birnbaum @ 2026-01-15T22:41 (+34)

Dwarkesh (of the famed podcast) recently posted a call for new guest scouts. Given how influential his podcast is likely to be in shaping discourse around transformative AI (among other important things), this seems worth flagging and applying for (at least, for students or early career researchers in bio, AI, history, econ, math, or physics who have a few extra hours a week).

The role is remote, pays ~$100/hour, and expects ~5–10 hours/week. He’s looking for people who are deeply plugged into a field (e.g. grad students, postdocs, or practitioners) with high taste. Beyond scouting guests, the role also involves helping assemble curricula so he can rapidly get up to speed before interviews.

More details are in the blog post; link to apply (due Jan 23 at 11:59pm PST).

Toby Tremlett🔹 @ 2026-01-16T11:32 (+2)

+1 I would love an EA to be working on this.

Noah Birnbaum @ 2025-07-16T16:57 (+33)

Probably(?) big news on PEPFAR (title: White House agrees to exempt PEPFAR from cuts): https://thehill.com/homenews/senate/5402273-white-house-accepts-pepfar-exemption/. (Credit to Marginal Revolution for bringing this to my attention)

Noah Birnbaum @ 2025-12-04T23:22 (+29)

- Re the new 2024 Rethink Cause Prio survey: "The EA community should defer to mainstream experts on most topics, rather than embrace contrarian views. [“Defer to experts”]" 3% strongly agree, 18% somewhat agree, 35% somewhat disagree, 15% strongly disagree.

- This seems pretty bad to me, especially for a group that frames itself as valuing intellectual humility and recognizing that we (going by the base rate for an intellectual movement) are so often wrong.

- (Charitable interpretation) It's also just the case that EAs tend to have lots of views that they're being contrarian about because they're trying to maximize the expected value of information (often justified with something like: "usually contrarians are wrong, but if they are right, they are often more valuable for information than the average person who just agrees").

- If this is the case, though, I fear that some of us are confusing the norm of being contrarian for instrumental reasons with being contrarian for "being correct" reasons.

Tho lmk if you disagree.

Thomas Kwa @ 2025-12-05T11:28 (+14)

I think the "most topics" thing is ambiguous. There are some topics on which mainstream experts tend to be correct and some on which they're wrong, and although expertise is valuable on topics experts think about, they might be wrong on most topics central to EA. [1] Do we really wish we deferred to the CEO of PETA on what animal welfare interventions are best? EAs built that field in the last 15 years far beyond what "experts" knew before.

In the real world, assuming we have more than five minutes to think about a question, we shouldn't "defer" to experts or immediately "embrace contrarian views", but rather use their expertise and reject it when appropriate. Since this wasn't an option in the poll, my guess is many respondents just wrote how much they like being contrarian, and EAs often have to be contrarian on topics they think about, so it came out in favor of contrarianism.

[1] Experts can be wrong because they don't think in probabilities, they have a lack of imagination, there are obvious political incentives to say one thing over another, and probably other reasons, and lots of the central EA questions don't have actual well-developed scientific fields around them, so many of the "experts" aren't people who have thought about similar questions in a truth-seeking way for many years

Arepo @ 2025-12-07T18:51 (+4)

I agree with Yarrow's anti-'truth-seeking' sentiment here. That phrase seems to primarily serve as an epistemic deflection device indicating 'someone whose views I don't want to take seriously and don't want to justify not taking seriously'.

I agree we shouldn't defer to the CEO of PETA, but CEOs aren't - often by their own admission - subject matter experts so much as people who can move stuff forwards. In my book the set of actual experts is certainly murky, but includes academics, researchers, sometimes forecasters, sometimes technical workers - sometimes CEOs but only in particular cases - anyone who's spent several years researching the subject in question.

Sometimes, as you say, they don't exist, but in such cases we don't need to worry about deferring to them. When they do, it seems foolish not to upweight their views relative to our own unless we've done the same, or unless we have very concrete reasons to think they're inept or systemically biased (and perhaps even then).

Thomas Kwa @ 2025-12-08T19:00 (+6)

Yeah, while I think truth-seeking is a real thing I agree it's often hard to judge in practice and vulnerable to being a weasel word.

Basically I have two concerns with deferring to experts. First is that when the world lacks people with true subject matter expertise, whoever has the most prestige--maybe not CEOs but certainly mainstream researchers on slightly related questions-- will be seen as experts and we will need to worry about deferring to them.

Second, because EA topics are selected for being too weird/unpopular to attract mainstream attention/funding, I think a common pattern is that of the best interventions, some are already funded, some are recommended by mainstream experts and remain underfunded, and some are too weird for the mainstream. It's not really possible to find the "too weird" kind without forming an inside view. We can start out deferring to experts, but by the time we've spent enough resources investigating the question that you're at all confident in what to do, the deferral to experts is partially replaced with understanding the research yourself as well as the load-bearing assumptions and biases of the experts. The mainstream experts will always get some weight, but it diminishes as your views start to incorporate their models rather than their views (example that comes to mind is economists on whether AGI will create explosive growth, and how recently good economic models have been developed by EA sources, now including some economists that vary assumptions and justify differences from the mainstream economists' assumptions).

Wish I could give more concrete examples but I'm a bit swamped at work right now.

Noah Birnbaum @ 2026-02-11T06:19 (+25)

Why don’t EA chapters exist at very prestigious high schools (e.g., Stuyvesant, Exeter, etc.)?

It seems like a relatively low-cost intervention (especially compared to something like Atlas), and these schools produce unusually strong outcomes. There’s also probably less competition than at universities for building genuinely high-quality intellectual clubs (this could totally be wrong).

Brian Foerster @ 2026-02-11T17:45 (+23)

Without a strongly supportive faculty member, I feel like you would struggle to make a group last longer than 2 years, since the succession and turnover dynamics of uni groups would be amplified.

Could still be worthwhile even with the lack of sustainability.

Julia_Wise🔸 @ 2026-02-11T19:55 (+10)

Students for High Impact Charity was a project to support groups at high schools, but it had difficulty getting traction. It's hard to start a group from afar if you're not a student or faculty there.

Noah Birnbaum @ 2026-02-11T20:00 (+1)

Seems right, though plausibly there are some EA/EA-adjacent students at some of these schools.

Eli Rose🔸 @ 2026-02-11T23:37 (+7)

I think it sounds like an exciting idea. In my role funding EA CB work over the years I've seen a few of these clubs, so there's not literally nothing, but it's true that it's much less common than at universities, and I'm not aware of EA groups at these specific high schools.

The answer to many questions of the form "why isn't there an EA group for XYZ" tends to be "no organizer / no one else working to make it happen" and I'm guessing that's the main answer here too.

Charlie_Guthmann @ 2026-02-16T03:44 (+4)

FWIW I went to the best (or second best lol) high school in Chicago, Northside, and tbh the kids at these top city high schools are of comparable talent to the kids at Northwestern, with a higher tail as well. Moreover, everyone has way more time and can actually chew on the ideas of EA. There was a Jewish org that sent an adult once a week with food and I pretty much went to all of them even tho I would barely even self-identify as Jewish, because of the free food and somewhere to sit and chat about random stuff while I waited for basketball practice.

So yes I think it would be highly successful. But I think you would need adult actual staff to come at least every other week (as brian mentioned) and as far as I can tell EA is currently struggling pretty hard w/organizing capacity and it seems to be getting worse (in part because as I have said many times, we don't celebrate organizers enough and we focus the movement too much on intellectualism rather than coordination and organizing). So I kind of doubt there is a ton of capacity for this. But if there is it's a good idea. I'm happy to help you understand how you could implement this at CPS selective enrollment schools if you want to help do it yourself.

Noah Birnbaum @ 2025-10-26T06:43 (+25)

MrBeast just released a video about “saving 1,000 animals”—a well-intentioned but inefficient intervention (e.g. shooting vaccines at giraffes from a helicopter, relocating wild rhinos before they fight each other to the death, covering bills for people to adopt rescue dogs from shelters, transporting lions via plane, and more). It’s great to see a creator of his scale engaging with animal welfare, but there’s a massive opportunity here to spotlight interventions that are orders of magnitude more impactful.

Given that he’s been in touch with people from GiveDirectly for past videos, does anyone know if there’s a line of contact to him or his team? A single video/mention highlighting effective animal charities—like those recommended by Animal Charity Evaluators (e.g. The Humane League, Faunalytics, Good Food Institute)—could reach tens of millions and (potentially) meaningfully shift public perception toward impact-focused giving for animals.

If anyone’s connected or has thoughts on how to coordinate outreach, this seems like a high-leverage opportunity. (I really have no idea how this sorta stuff works, but it seemed worth a quick take; feel free to lmk if I’m totally off base here.)

MHR🔸 @ 2025-10-26T21:28 (+36)

Manifesting

Noah Birnbaum @ 2025-10-26T21:34 (+6)

Yooo - nice! Seems good and would cost under ~100k.

Vasco Grilo🔸 @ 2025-11-15T09:50 (+2)

Agreed, Noah. For 15 k shrimps helped per $, it would cost 9.60 k$ (= 144*10^6/(15*10^3)).
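As a quick check of the arithmetic above, here is a minimal Python sketch. It assumes the 144*10^6 figure is a count of shrimps to be helped (the units imply this, but the comment does not state it), and the variable names are purely illustrative.

```python
# Figures taken from the comment above; names are illustrative.
shrimps_to_help = 144 * 10**6      # ASSUMPTION: a count of shrimps to help
shrimps_per_dollar = 15 * 10**3    # 15 k shrimps helped per $

# Cost in dollars = shrimps to help / shrimps helped per dollar.
cost_usd = shrimps_to_help / shrimps_per_dollar
print(f"{cost_usd / 1000:.2f} k$")  # 9.60 k$
```

That matches the 9.60 k$ quoted, which is well under the ~100k ceiling mentioned in the parent comment.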

huw @ 2025-10-26T10:04 (+11)

Yep—Beast Philanthropy actually did an AMA here in the past! My takeaway was that the video comes first, so that your chances of a partnership would greatly increase if you can make it entertaining. This is somewhat in contrast with a lot of EA charities, which are quite boring, but I suspect on the margins you could find something good.

What IMHO worked for GiveDirectly in that video, and for Shrimp Welfare in their public outreach, has been the counterintuitiveness of some of these interventions. Wild animals, cultured meat, shrimp, are more likely to fit in this bucket than corporate campaigns for chickens I reckon.

Mjreard @ 2025-10-28T00:54 (+8)

As Huw says, the video comes first. I think this puts almost anything you'd be excited about off the table. Factory farming is a really aversive topic for people, and people are quite opposed to large scale WAS interventions. The intervention in the video he did make wasn't chosen at random. People like charismatic megafauna.

Noah Birnbaum @ 2025-08-26T17:30 (+16)

Very random but:

If anyone is looking for a name for a nuclear risk reduction/ x-risk prevention org, consider (The) Petrov Institute. It's catchy, symbolic, and sounds like it has prestige.

NickLaing @ 2025-08-27T21:46 (+7)

Unfortunately it also sounds Russian, which has some serious downsides at the moment....

Lucius Caviola @ 2025-08-28T18:27 (+4)

Perhaps this downside could be partly mitigated by expanding the name to make it sound more global or include something Western, for example: Petrov Center for Global Security or Petrov–Perry Institute (in reference to William J. Perry). (Not saying these are the best names.)

Benjamin M. @ 2025-08-30T01:03 (+1)

For me at least, that implies an institute founded or affiliated with somebody named Petrov, not just inspired by somebody, and it would seem slightly sketchy for it not to be.

OscarD🔸 @ 2025-08-30T15:37 (+4)

Although there is the Alan Turing Institute, Ada Lovelace Institute, Leverhulme Centre, Simon Institute, etc.

Noah Birnbaum @ 2025-11-08T22:57 (+15)

Idea for someone with a bit of free time:

While I don't have the bandwidth for this atm, someone should make a public (or private for, say, policy/reputation reasons) list of people working in (one or multiple of) the very neglected cause areas — e.g., digital minds (this is a good start), insect welfare, space governance, AI-enabled coups, and even AI safety (more for the second reason than others). Optional but nice-to-have(s): notes on what they’re working on, time contributed, background, sub-area, and the rough rate of growth in the field (you probably don’t want to decide career moves purely on current headcounts). And remember: perfection is gonna be the enemy of the good here.

Why this matters

Coordination.

It’s surprisingly hard to know who’s in these niches (independent researchers, part-timers, new entrants, maybe donors). A simple list would make it easier to find collaborators, talk to the right people, and avoid duplicated work.

Neglectedness clarity.

A major reason to work on ultra-neglected causes is… neglectedness. But we often have no real headcount, and that may push people into (or out of) fields they wouldn’t otherwise choose. Even technical AI safety numbers are outdated — the last widely cited 80k estimate (2022) was ~200 people, which is clearly very false now. (To their credit, they emphasized the difficulty and tried to update.)

Even rough FTE (full time equivalent) estimates + who’s active in each area would be a huge service for some fields.

Saul Munn @ 2025-12-16T06:46 (+6)

Started something sorta similar about a month ago: https://saul-munn.notion.site/A-collection-of-content-resources-on-digital-minds-AI-welfare-29f667c7aef380949e4efec04b3637e9?pvs=74

Ben Stevenson @ 2025-12-29T15:26 (+3)

One reason a comprehensive version of this would be difficult for insect welfare is that a couple of projects are 'undercover'. Rethink Priorities have guidance on donating to insects, shrimp and wild animals that might be relevant.

Separately, I understand @JordanStone has a pretty comprehensive sense of who's who in space governance, and would be a good person to contact if you're thinking about getting into this field.

JordanStone @ 2025-12-29T16:11 (+1)

Yeah, lists exist for all the people working on space governance from a longtermist perspective, and they tend to list about 10-15 people. I'm like 90% sure I know of everyone working on longtermist space governance, and I'd estimate that there are the equivalent of ~3 people working full time on this. There's not as much undercover work required for space governance, but I don't like to share lists of names publicly without permission.

At the moment, the main hub for space governance is Forethought and most people contact Fin Moorhouse to learn more about space governance as he's the author of the 80K problem profile on space governance and has been publishing work with Forethought on or related to space governance. From there, people tend to get a lay of the land, introductions are made, and newcomers will get a good idea of what people are working on and where they might be able to contribute.

Eryn Van Wijk @ 2025-12-12T08:42 (+1)

What a wonderful idea! Mayank referred me over to this post, and I think EA at UIUC might have to hop on this project. I'll see about starting something in the next month or so and sharing a link to where I'm compiling things in case anyone else is interested in collaborating on this. Or, it's possible an initiative like it already exists that I'll stumble upon while investigating (though such a thing may well be outdated).

Noah Birnbaum @ 2026-02-12T05:28 (+11)

Here’s a random org/project idea: hire full-time, thoughtful EA/AIS red teamers whose job is to seriously critique parts of the ecosystem — whether that’s the importance of certain interventions, movement culture, or philosophical assumptions. Think engaging with critics or adjacent thinkers (e.g., David Thorstad, Titotal, Tyler Cowen) and translating strong outside critiques into actionable internal feedback.

The key design feature would be incentives: instead of paying for generic criticism, red teamers receive rolling “finder’s fees” for critiques that are judged to be high-quality, good-faith, and decision-relevant (e.g., identifying strategic blind spots, diagnosing vibe shifts that can be corrected, or clarifying philosophical cruxes that affect priorities).

Part of why I think this is important is because I generally have the intuition that the marginal thoughtful contrarian is often more valuable than the marginal agreer, yet most movement funding and prestige flows toward builders rather than structured internal critics. If that’s true, a standing red-team org, or at least a permanent prize mechanism, could be unusually cost-effective.

There have been episodic versions of this (e.g., red-teaming contests, some longtermist critiquing stuff), but I’m not sure why this should come in waves rather than exist as ongoing infrastructure (org or just some prize pool that's always open for sufficiently good criticisms).

Brian Foerster @ 2026-02-13T01:13 (+2)

While I like the potential incentive alignment, I suspect finder’s fees are unworkable. It’s much easier to promise impartiality and fairness in a single game as opposed to an iterated one, and I suspect participants relying on the fees for income would become very sensitive to the nuances of previous decisions rather than the ultimate value of their critiques.

Ultimately, I don’t think there are many shortcuts in changing the philosophy of a movement. If something is worth challenging, then people strongly believe it and there will have to be a process of contested diffusion from the outside in. You can encourage this in individual cases, but systemizing it seems difficult.

Noah Birnbaum @ 2026-02-20T20:21 (+9)

The Forum should normalize public red-teaming for people considering new jobs, roles, or project ideas.

If someone is seriously thinking about a position, they should feel comfortable posting the key info (org, scope, uncertainties, concerns, arguments for) and explicitly inviting others to stress-test the decision. Some of the best red-teaming I’ve gotten hasn’t come from my closest collaborators (whose takes I can often predict), but from semi-random thoughtful EAs who notice failure modes I wouldn’t have caught alone (or people who think pretty differently and so can instantly spot things that would have taken me longer to figure out).

Right now, a lot of this only happens at EAGs or in private docs, which feels like an information bottleneck. If many thoughtful EAs are already reading the Forum, why not use it as a default venue for structured red-teaming?

Public red-teaming could:

- reduce unilateralist mistakes, and

- prevent coordination failures (I’ve almost spent serious time on things multiple people were already doing; reinventing the wheel is common and costly).

Obviously there are tradeoffs — confidentiality, social risk, signaling concerns — but I’d be excited to see norms shift toward “post early, get red-teamed, iterate publicly,” rather than waiting for a handful of coffee chats.

Alex Parry @ 2026-02-21T09:22 (+1)

I would love to see that normalised, I'm personally in the ideation stage for a new org, and have been considering asking the forum to help red team, but it's not something I've seen done all that much, so I've somewhat held off.

Mo Putera @ 2026-02-22T12:56 (+2)

RoastMyPost by the Quantified Uncertainty Research Institute might be useful for your idea too if you have a gdoc proposal.

Noah Birnbaum @ 2026-02-25T22:40 (+6)

This might feel obvious, but I think it's under-appreciated how much disagreement on AI progress just comes down to priors (in a pretty specific way) rather than object-level reasoning.

I was recently arguing the case for shorter timelines to a friend who leans longer. We kept disagreeing on a surprising number of object-level claims, which was weird because we usually agree more on the kinda stuff we were arguing about.

Then I basically realized what I think was going on: she had a pretty strong prior against what I was saying, and that prior is abstract enough that there's no clear mechanism by which I can push against it. So whenever I made a good object-level case, she'd just take the other side — not necessarily because her reasons were better all else equal, but because the prior was doing the work underneath without either of us really knowing it.

There's something clearly rational here that's kinda unintuitive to get a grip on. If you have a strong prior, and someone makes a persuasive argument against it, but you can't identify the specific mechanism by which their argument defeats it, you should probably update that the arguments against their case are better than they appear, even if you can't articulate them yet. From the outside, this totally just looks like motivated reasoning (and often is), but I think it can be pretty importantly different.
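One way to see why this can be rational is a toy Bayes calculation. This is only a sketch with made-up numbers, not anything from the conversation described: the 5% prior and the likelihood ratio of 3 are assumptions, and `posterior` is a hypothetical helper.

```python
def posterior(prior: float, likelihood_ratio: float) -> float:
    """Bayes' rule in odds form: posterior odds = prior odds * likelihood ratio."""
    prior_odds = prior / (1 - prior)
    post_odds = prior_odds * likelihood_ratio
    return post_odds / (1 + post_odds)

# ASSUMED numbers: a persuasive-sounding argument is taken to be 3x likelier
# to exist if the claim is true than if it is false.
argument_strength = 3.0

strong_prior_against = posterior(0.05, argument_strength)  # starts at 5%
no_strong_prior = posterior(0.50, argument_strength)       # starts at 50%

print(round(strong_prior_against, 3))  # 0.136
print(round(no_strong_prior, 3))       # 0.75
```

Under these assumptions the same argument moves one person to 75% and the other only to about 14%, so still disagreeing after hearing a good object-level case need not be motivated reasoning.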

The reason this is so hard to disentangle is that (unless your belief web is extremely clear to you, which seems practically impossible) it's just enormously complicated. Your prior on timelines isn't an isolated thing; it's load-bearing for a bunch of downstream beliefs all at once. So the resistance isn't obviously irrational, it's more like... the system protecting its own coherence.

I think this means that people should try their best to disentangle whether some object level argument they’re having comes from real object level beliefs or pretty abstract priors (in which case, it seems less worthwhile to press on them).

Mo Putera @ 2026-02-28T13:52 (+4)

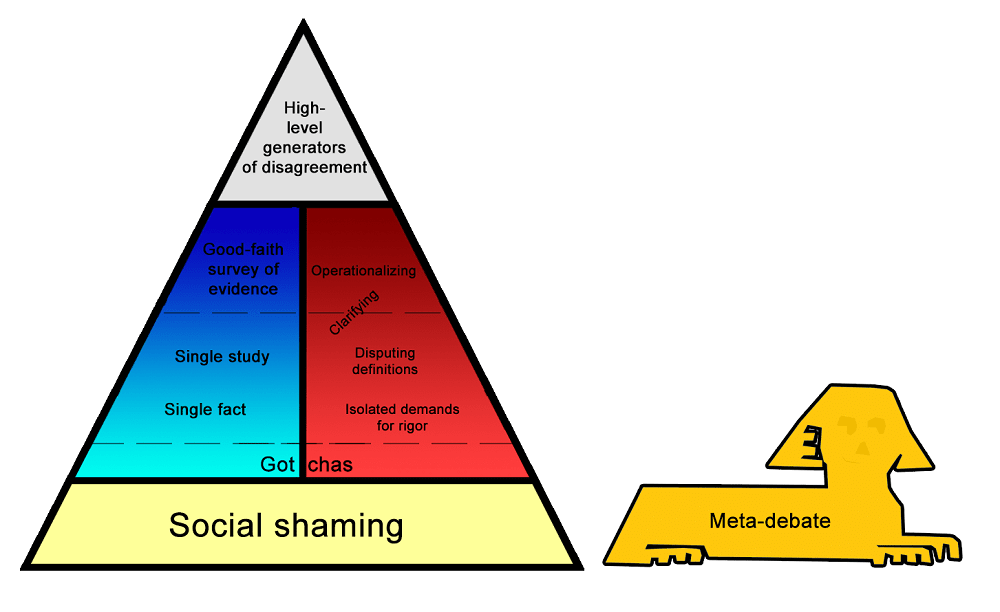

My go-to diagram for illustrating your point, from (who else?) Scott Alexander's varieties of argumentative experience:

[Graham’s hierarchy of disagreements] is useful for its intended purpose, but it isn’t really a hierarchy of disagreements. It’s a hierarchy of types of response, within a disagreement. Sometimes things are refutations of other people’s points, but the points should never have been made at all, and refuting them doesn’t help. Sometimes it’s unclear how the argument even connects to the sorts of things that in principle could be proven or refuted.

If we were to classify disagreements themselves – talk about what people are doing when they’re even having an argument – I think it would look something like this:

Most people are either meta-debating – debating whether some parties in the debate are violating norms – or they’re just shaming, trying to push one side of the debate outside the bounds of respectability.

If you can get past that level, you end up discussing facts (blue column on the left) and/or philosophizing about how the argument has to fit together before one side is “right” or “wrong” (red column on the right). Either of these can be anywhere from throwing out a one-line claim and adding “Checkmate, atheists” at the end of it, to cooperating with the other person to try to figure out exactly what considerations are relevant and which sources best resolve them.

If you can get past that level, you run into really high-level disagreements about overall moral systems, or which goods are more valuable than others, or what “freedom” means, or stuff like that. These are basically unresolvable with anything less than a lifetime of philosophical work, but they usually allow mutual understanding and respect.

More on the high-level generators of disagreement (emphasis mine, other than 1st sentence):

High-level generators of disagreement are what remains when everyone understands exactly what’s being argued, and agrees on what all the evidence says, but have vague and hard-to-define reasons for disagreeing anyway. In retrospect, these are probably why the disagreement arose in the first place, with a lot of the more specific points being downstream of them and kind of made-up justifications. These are almost impossible to resolve even in principle. ...

Some of these involve what social signal an action might send; for example, even a just war might have the subtle effect of legitimizing war in people’s minds. Others involve cases where we expect our information to be biased or our analysis to be inaccurate; for example, if past regulations that seemed good have gone wrong, we might expect the next one to go wrong even if we can’t think of arguments against it. Others involve differences in very vague and long-term predictions, like whether it’s reasonable to worry about the government descending into tyranny or anarchy. Others involve fundamentally different moral systems, like if it’s okay to kill someone for a greater good. And the most frustrating involve chaotic and uncomputable situations that have to be solved by metis or phronesis or similar-sounding Greek words, where different people’s Greek words give them different opinions.

You can always try debating these points further. But these sorts of high-level generators are usually formed from hundreds of different cases and can’t easily be simplified or disproven. Maybe the best you can do is share the situations that led to you having the generators you do. Sometimes good art can help.

The high-level generators of disagreement can sound a lot like really bad and stupid arguments from previous levels. “We just have fundamentally different values” can sound a lot like “You’re just an evil person”. “I’ve got a heuristic here based on a lot of other cases I’ve seen” can sound a lot like “I prefer anecdotal evidence to facts”. And “I don’t think we can trust explicit reasoning in an area as fraught as this” can sound a lot like “I hate logic and am going to do whatever my biases say”. If there’s a difference, I think it comes from having gone through all the previous steps – having confirmed that the other person knows as much as you might be intellectual equals who are both equally concerned about doing the moral thing – and realizing that both of you alike are controlled by high-level generators. High-level generators aren’t biases in the sense of mistakes. They’re the strategies everyone uses to guide themselves in uncertain situations.

(also related: Value Differences As Differently Crystallized Metaphysical Heuristics and the previous essays in that series)

Regarding your "something clearly rational here that's kinda unintuitive to get a grip on", I think of it as epistemic learned helplessness as a "social safety valve" to the downside risk of believing persuasive arguments that can (potentially catastrophically) harm the believer, cf. Reason as memetic immune disorder.

There's a lot more to the study of disagreement if you're keen, shame it's mostly just one person working on it and they're busy writing a book nowadays.

TsviBT @ 2026-03-02T05:13 (+1)

I think there are ways to get the info without threatening the coherence of your system. For example, you can try to understand, and then absorb into intuition, alternative root/basic intuitions. Cf. https://en.wikipedia.org/wiki/World_Hypotheses by Pepper, and Lakoff's ideas on metaphors. As a concrete example in the case of timelines, I would offer https://www.lesswrong.com/posts/wgqcExv9AgN5MuJuY/bioanchors-2-electric-bacilli as an intuition for longer / less confident timelines that you could try to understand intuitively (without necessarily believing). Having multiple of these hypotheses is one good way to make yourself more fluent in viewing multiple alternative states of the world as being intuitively plausible / worth investigating / asking for falsification.

Noah Birnbaum @ 2026-03-21T17:13 (+5)

More EA in da news: https://x.com/DavidSacks/status/2034047505336295904

And the spicy CAIS take: https://x.com/cais/status/2034389842076025164?s=46

MichaelDickens @ 2026-03-21T17:47 (+10)

From the CAIS tweet:

We believe the effective altruism movement is, unfortunately, controlled opposition. The less influence it has on AI safety, the better.

I really don't like it when people paint the whole movement with one brush, but they're not wrong that there's a subset of AI safety/EA that behaves like controlled opposition. Obvious example is Anthropic/Dario (who was one of the first GWWC signatories); Good Ventures basically doesn't fund anything that might be bad for AI company stock prices; I can think of some other possible examples but I don't want to denigrate anyone in case I'm wrong.

It's definitely not true that the whole movement is controlled opposition. Here I am, an EA, loudly advocating for a pause on AI development. There are plenty of us low-level EAs who oppose AI companies, but we don't control funding.

Noah Birnbaum @ 2026-03-11T16:24 (+5)

Experts currently treat being persuaded as reasonably good evidence that something is true — their judgment is calibrated enough that when they find an argument convincing, that's correlated with the argument actually being correct. This allows them to update readily in light of new evidence, and is a big part of how intellectual progress happens: lots of innovation and advances in basically every subject come down to experts taking sometimes weird new ideas seriously.

One worry I have about superpersuasive AI is that it could erode this. If a superpersuasive AI can convince experts of things regardless of whether those things are true, experts may cease to see themselves being persuaded as good evidence that something is true — and start treating it the way laypeople do. Laypeople are typically hesitant to take on new, truth-tracking beliefs in light of new information, and (to some degree) rationally so: the fact that someone was able to convince a layperson of something is just not very strong evidence that it is in fact true. Experts might end up in the same position — only updating rarely, and in ways that are often unrelated to the truth.
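The worry can be made concrete with a toy likelihood-ratio calculation. The probabilities below are invented for illustration, and `credence_after_persuasion` is a hypothetical helper rather than a model from any source.

```python
def credence_after_persuasion(prior: float,
                              p_persuaded_if_true: float,
                              p_persuaded_if_false: float) -> float:
    """P(claim is true | I was persuaded), by Bayes' rule."""
    joint_true = prior * p_persuaded_if_true
    joint_false = (1 - prior) * p_persuaded_if_false
    return joint_true / (joint_true + joint_false)

prior = 0.2  # ASSUMED credence before hearing the argument

# Calibrated expert today: true claims persuade far more often than false ones.
expert_today = credence_after_persuasion(prior, 0.8, 0.1)

# Facing a superpersuasive arguer: persuaded almost regardless of truth.
expert_vs_superpersuader = credence_after_persuasion(prior, 0.95, 0.95)

print(round(expert_today, 3))              # 0.667
print(round(expert_vs_superpersuader, 3))  # 0.2
```

When being persuaded is equally likely whether or not the claim is true, the likelihood ratio is 1 and the posterior equals the prior, which is the sense in which persuasion would stop being evidence.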

This would be quite bad. If experts lose their capacity to reliably update on genuine evidence, we could significantly slow the rate of intellectual progress (which could be very important for making AI go well!). This is, I think, an underappreciated argument for caring about AI for epistemics — curious what others think.

MichaelDickens @ 2026-03-15T01:02 (+2)

I think this is technically true but irrelevant: if we have superpersuasive AI, then there won't be human experts anymore, because the AI will have more expertise than any human. Unless somehow the AI is superpersuasive while still having sub-human performance in most ways, which seems unlikely to me.

Noah Birnbaum @ 2025-09-05T00:33 (+4)

Looking back on old 80k podcasts, and this is what I see (lol):

Bella @ 2025-09-05T23:51 (+8)

They're both great episodes, though — relistened to #138 last week :)