AI Safety Newsletter #43: White House Issues First National Security Memo on AI Plus, AI and Job Displacement, and AI Takes Over the Nobels

By Center for AI Safety, Corin Katzke, AlexaPanYue, Dan H @ 2024-10-28T16:02 (+6)

This is a linkpost to https://newsletter.safe.ai/p/ai-safety-newsletter-43-white-house

Welcome to the AI Safety Newsletter by the Center for AI Safety. We discuss developments in AI and AI safety. No technical background required.

Listen to the AI Safety Newsletter for free on Spotify or Apple Podcasts.

Subscribe here to receive future versions.

White House Issues First National Security Memo on AI

On October 24, 2024, the White House issued the first National Security Memorandum (NSM) on Artificial Intelligence, accompanied by a Framework to Advance AI Governance and Risk Management in National Security.

The NSM identifies AI leadership as a national security priority. The memorandum states that competitors have employed economic and technological espionage to steal U.S. AI technology. To maintain a U.S. advantage in AI, the memorandum directs the National Economic Council to assess the U.S.’s competitive position in:

- Semiconductor design and manufacturing

- Availability of computational resources

- Access to workers highly skilled in AI

- Capital availability for AI development

The Intelligence Community must make gathering intelligence on competitors' operations against the U.S. AI sector a top-tier intelligence priority. The Department of Energy will launch a pilot project to evaluate AI training and data sources, while the National Science Foundation will distribute compute through the National AI Research Resource pilot.

The NSM and Framework outline AI safety and security practices. The AI Safety Institute at the National Institute of Standards and Technology becomes the primary U.S. Government point of contact for private sector AI developers. The AI Safety Institute must:

- Test at least two frontier AI models within 180 days of the memorandum

- Issue guidance for testing AI systems' safety and security risks

- Establish benchmarks for assessing AI capabilities

- Share findings with the Assistant to the President for National Security Affairs

The Department of Energy's National Nuclear Security Administration will lead classified testing for nuclear and radiological risks, while the National Security Agency will evaluate cyber threats through its AI Security Center.

The framework prohibits government AI use for:

- Nuclear weapons deployment without human oversight

- Final immigration determinations

- Profiling based on constitutionally protected rights

- Intelligence analysis based solely on AI without warnings

National security agencies must also:

- Appoint Chief AI Officers within 60 days

- Establish AI Governance Boards

- Maintain annual inventories of high-impact AI systems

- Implement risk management practices

- Submit annual reports on AI activities

All requirements take effect immediately, with initial implementation deadlines beginning within 60 days. The memorandum’s fact sheet is available here.

AI and Job Displacement

In this story, we look at recent work on the future of AI job displacement.

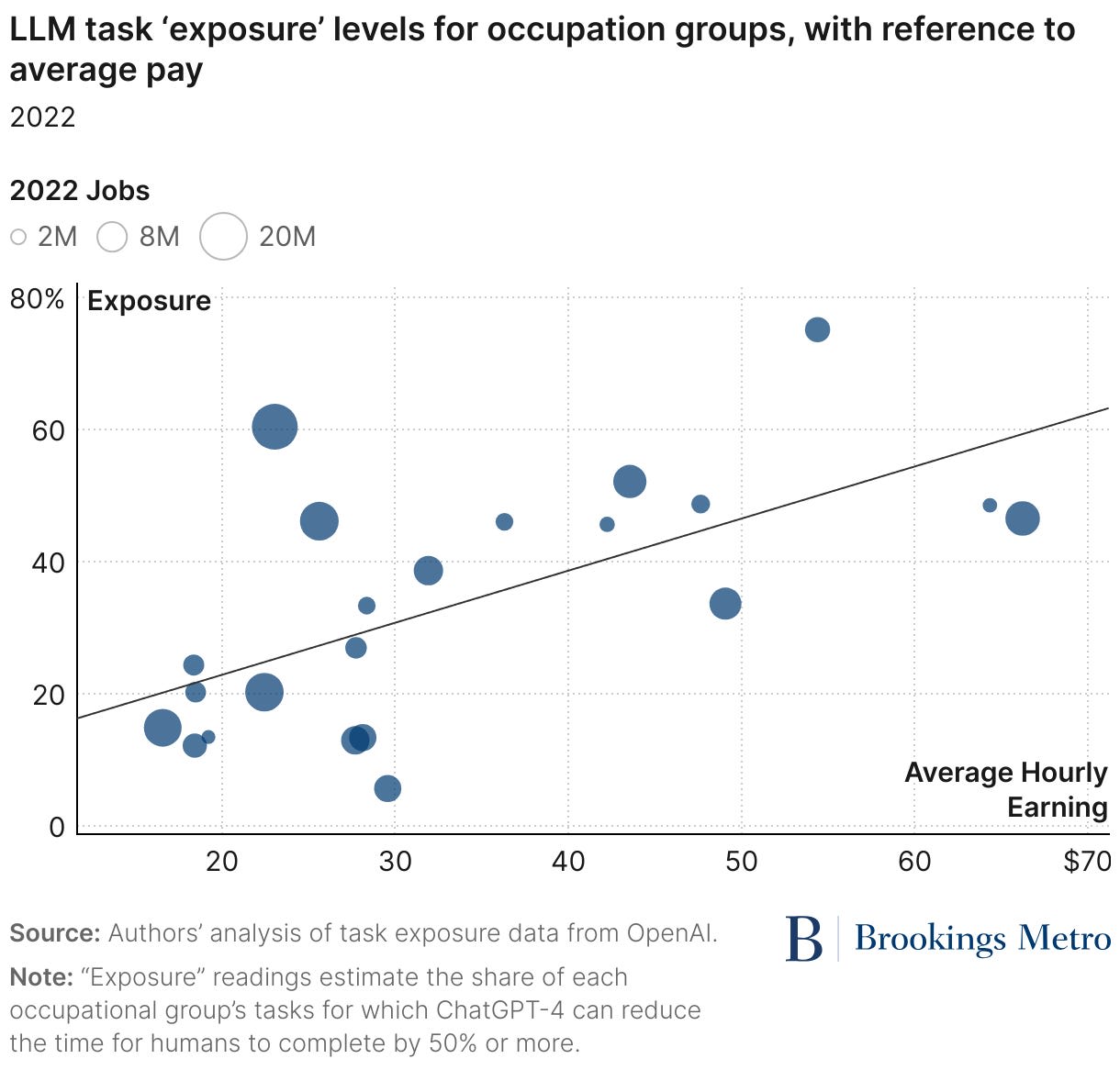

Brookings projects widespread job disruption from GPT-4 capabilities. A study by the Brookings Institution investigates the potential impacts of current generative AI on the American workforce, using estimates shared by OpenAI. Key findings include:

- Widespread effects. Over 30% of all workers could see at least 50% of their occupation's tasks disrupted by generative AI, while 85% could see at least 10% of their work tasks impacted. In many cases, however, it is unclear whether AI will be more labor-augmenting or labor-displacing.

- Disproportionate impact on higher-paying fields. Sectors facing the greatest exposure include STEM pursuits, business and finance, architecture and engineering, and law.

- Gender disparity. Women face higher exposure to generative AI, primarily due to their overrepresentation in white-collar and administrative support roles.

- Mismatch in worker representation. Industries most exposed to generative AI generally have low union representation, potentially limiting workers' ability to shape AI's implementation in their fields.

These results assume GPT-4 capabilities and moderate innovation in system autonomy and applications. Importantly, the study “does not attempt to project future capability enhancements from next-generation AI models likely to be released”, meaning that the actual effects of AI on job displacement are likely to be even greater than those above.

GPT-4o can outperform human CEOs in some respects. A recent experiment conducted by researchers at Cambridge found that GPT-4o can significantly outperform human CEOs in strategic decision-making tasks. The study, which involved 344 participants navigating a gamified simulation of the U.S. automotive industry, found that:

- GPT-4o consistently outperformed top human participants on nearly every metric.

- GPT-4o excelled in data-driven tasks like product design and market optimization.

- However, GPT-4o struggled with unpredictable events, such as market collapses during simulated crises.

Traditionally, executive positions have been thought to be insulated from AI automation due to the generalist and strategic nature of executive work. However, this experiment suggests that current frontier AI systems have the potential to automate (parts of) even these positions.

Physical labor is not safe from AI. The jobs currently most exposed to frontier AI capabilities involve cognitive labor such as writing and coding. However, if investment is any indication, jobs involving physical labor might be next.

Fei-Fei Li’s startup, World Labs, raised $230 million last month. The startup describes itself as a “spatial intelligence company building Large World Models to perceive, generate, and interact with the 3D world.”

Robotics is seeing similar levels of investment. Earlier this month, Tesla previewed “Optimus,” a line of humanoid robots in development. The robots at the event were piloted by humans, but they moved stably and impressively, and could eventually be piloted by a sufficiently capable AI.

Economic policy implications of AGI. Anton Korinek wrote a working paper for the National Bureau of Economic Research on the economic policy challenges posed by AI. He writes that job displacement resulting from the development of AGI “will necessitate a fundamental reevaluation of our economic structures, social systems, and the meaning of work in human society.”

The paper identifies several challenges the development of AGI would pose for economic policy, including:

- Inequality and income distribution. AGI could lead to unprecedented income concentration and labor market disruption. New mechanisms for income distribution independent of work, like Universal Basic Income, may be necessary.

- Education and skill development. AGI will render many traditional skills obsolete, requiring a fundamental reevaluation of educational goals and curricula. The focus may shift to developing skills for roles requiring human interaction and preparing citizens to critically evaluate AI's societal impacts.

- Social and political stability. Widespread economic discontent due to AGI-induced labor market disruption could lead to social unrest and political instability. Policies will be needed to mitigate economic disruptions, ensure equitable distribution of AGI benefits, and strengthen democratic institutions.

- Antitrust and market regulation. AGI could lead to unprecedented market concentration, requiring a fundamental rethinking of antitrust policies and market regulation. New regulatory approaches may be needed to ensure fair competition and prevent the concentration of technological power.

- Intellectual property. AGI challenges existing intellectual property frameworks and raises questions about ownership and economic incentives for AI-generated innovations. Current IP systems may need to be redesigned to balance incentives and the distribution of AGI benefits.

AI Takes Over the Nobels

Two of the 2024 Nobel Prizes were awarded to AI researchers. In this story, we discuss their implications for AI in science and AI safety.

Geoffrey Hinton, widely known as “the Godfather of AI”, received the Nobel Prize in Physics. John Hopfield, a physicist who shares the award, created Hopfield networks, which improved associative memory in neural nets. Hinton is credited with Boltzmann machines, a technique building on Hopfield’s work, which helped pretrain networks that were then trained by backpropagation. This work helped spawn the recent explosive progress in machine learning.

Demis Hassabis and John Jumper, both Google DeepMind scientists, were awarded the Nobel Prize in Chemistry. They are recognized for developing AlphaFold2, an AI model trained to predict protein structure from amino acid sequences. Presented in 2020, the model has now predicted the structure of virtually all known proteins, a task previously thought to be extremely difficult for human researchers.

The Nobel decisions suggest that AI is increasingly driving scientific progress. Both the physics and chemistry prize statements highlight the impact of machine learning on their fields. The physics statement reads: “in physics we use artificial neural networks in a vast range of areas, such as developing new materials with specific properties”. Likewise, the chemistry committee noted that “AlphaFold2 has been used by more than two million people from 190 countries” for a myriad of scientific applications.

As AI capabilities for scientific research improve, future scientific breakthroughs might become increasingly dominated by AI.

Hinton’s Nobel win could bolster credibility for AI safety. In post-award interviews and press conferences, Hinton has used much of his time to discuss and promote AI safety. In particular, he has emphasized challenges in controlling advanced AI systems and avoiding catastrophic outcomes, and called for more safety research from labs, governments, and young researchers.

The Nobel Prize for Hinton's achievements in AI will hopefully lend more credibility to his advocacy for AI safety.

Government

- After winning an antitrust case against Google this summer, the US Justice Department is now considering asking a federal judge to force Google to sell off parts of its business.

- The US AI Safety Institute is requesting comments on the responsible development and use of chemical and biological (chem-bio) AI models. Comments are due December 3rd.

- The US is investigating whether TSMC violated US export controls by making chips for Huawei.

- The US is also weighing expanding chip export controls to cap exports to some countries in the Middle East.

- The UK AI Safety Institute launched its grant program for systemic AI safety. Applications are due November 26th.

Industry

- The New York Times reports that the partnership between OpenAI and Microsoft is showing signs of stress.

- xAI is hiring AI safety engineers.

- Meta announced Movie Gen, a group of models that generate high-quality video with synchronized audio. It’s unclear whether Meta will release the models’ weights.

- Anthropic updated Sonnet 3.5 and notably did not release Opus 3.5.

- DeepSeek, a Chinese AI lab, released Janus, an any-to-any multimodal LLM with notable performance on multimodal understanding benchmarks.

Research

- The Forecasting Research Institute released a leaderboard for LLM forecasting, further corroborating that AIs are roughly crowd-level in performance. The confidence interval around the performance of top LLMs heavily overlaps with the confidence interval around top forecasters. With minor differences in Brier scores and substantial improvements in breadth, speed, and cost, AI forecasters may become noticeably economically superior to human forecasters.

- This paper introduced RoboPAIR, the first algorithm designed to jailbreak LLM-controlled robots.

- This paper finds that LLMs can generate novel NLP research ideas.

- Researchers from Gray Swan AI and the UK AI Safety Institute released a benchmark for measuring the harmfulness of LLM agents.
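For readers unfamiliar with the Brier score mentioned in the forecasting item above: it is the mean squared difference between a forecaster's stated probabilities and what actually happened (1 if the event occurred, 0 if not), so lower is better. The sketch below is only an illustration. The function name and the example numbers are made up for this note and are not taken from the Forecasting Research Institute leaderboard.

```python
def brier_score(forecasts, outcomes):
    """Mean squared error between probability forecasts and binary outcomes (0 or 1)."""
    if len(forecasts) != len(outcomes) or not forecasts:
        raise ValueError("forecasts and outcomes must be non-empty and the same length")
    return sum((p - o) ** 2 for p, o in zip(forecasts, outcomes)) / len(forecasts)


# Hypothetical example values, not data from the leaderboard.
outcomes = [1, 0, 1, 1]               # what actually happened
confident = [0.9, 0.1, 0.8, 0.7]      # a forecaster who leans the right way
hedged = [0.5, 0.5, 0.5, 0.5]         # a forecaster who always says 50%

print(brier_score(confident, outcomes))  # lower score: better calibrated and informative
print(brier_score(hedged, outcomes))     # always-50% baseline scores 0.25
```

Because scores are averaged over many questions, two forecasters (human or AI) with overlapping confidence intervals can be hard to tell apart on accuracy alone, which is why speed, breadth, and cost can end up being the deciding factors.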

See also: CAIS website, X account for CAIS, our $250K Safety benchmark competition, our new AI safety course, and our feedback form.

You can contribute challenging questions to our new benchmark, Humanity’s Last Exam. The deadline is November 1st—the end of this week!

The Center for AI Safety is also hiring for several positions, including Chief Operating Officer, Director of Communications, Federal Policy Lead, and Special Projects Lead.

Double your impact! Every dollar you donate to the Center for AI Safety will be matched 1:1 up to $2 million. Donate here.