Resource Allocation: A Research Agenda

By arvomm, Hayley Clatterbuck, Derek Shiller, Bob Fischer, David_Moss @ 2024-11-14T15:34 (+44)

Rethink Priorities' Worldview Investigations Team is sharing research agendas that provide overviews of some key research areas as well as projects in those areas that we would be keen to pursue. This is the last of three such agendas posted in November 2024. While we have flagged specific projects we'd like to work on, these are not exhaustive. We would recommend that prospective donors reach out directly to discuss what might make the most sense for us to focus on.

Introduction

We have limited altruistic resources. How do we best spend them? This question is at the heart of Effective Altruism, and it encompasses many key themes that deserve attention. The Worldview Investigations Team has previously completed a number of projects addressing fundamental questions of this kind: for example, the CURVE sequence, the CRAFT sequence, and the Moral Weights Project. This agenda outlines new projects, proposes extensions of these previous projects, and presents research questions that we would like to prioritize in our future work.

The stakes, our approach, and ‘getting things wrong’

Getting the agenda’s questions wrong could have severe consequences. Many of the issues described here have a rich intellectual history and run deep into what it means to do good. We see our task here as one of cautious exploration, combining realism about the challenges with the courage to propose a solution where one is needed, however limited it might have to be by nature. These are complex questions, so we want to engage with the details but not obsess over them. Many tradeoffs we describe (e.g. how we split resources to help non-human animal lives versus human lives) take place all the time whether we choose to pay attention to them or not. Not taking a stance is itself a stance, and too often a poor one. We would rather do our best to reason through the daunting problems at hand and provide an actionable plan than be forced to act in ignorance because of academic paralysis or in the name of agnosticism. Throughout this agenda we flag a number of concrete examples of important problems that arise from neglecting the questions or providing the wrong answers. We do this in between sections, under the ‘getting things wrong’ banner.

Projects and Tools

Interspersed throughout this document, we present ideas for tools and models that explore or illustrate the issues at hand. We see tools as a particularly helpful bridge between the theoretical and the applied, as they force us to confront actual numbers and decisions in a way that more abstract ideas do not. We want our tools to take much-discussed ideas and make them concrete, allowing decision-makers to engage in more transparent deliberations and conversations about their strategic priorities.

Contents

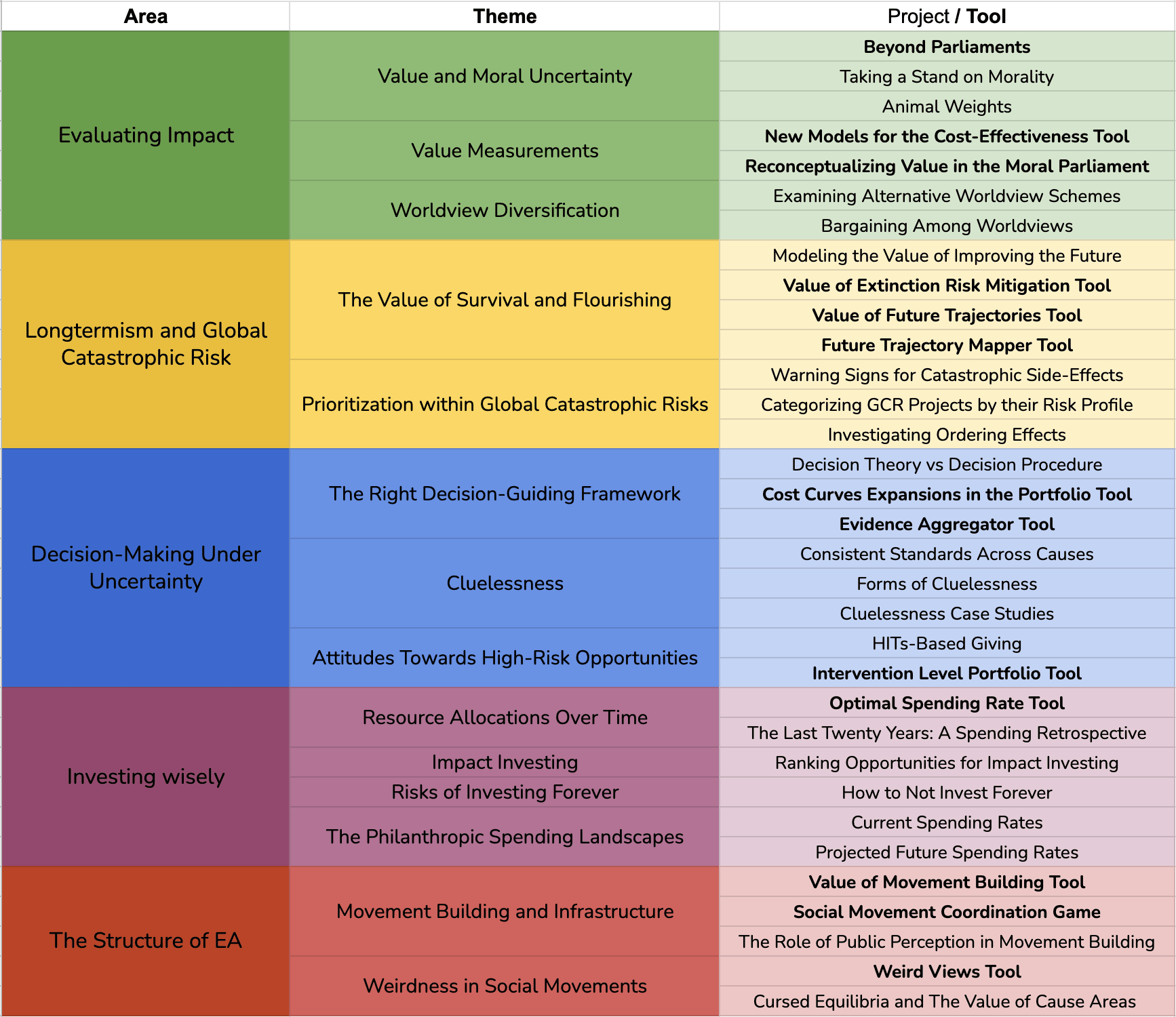

This research agenda is organized around five central themes that collectively address the fundamental question of how to optimally allocate our limited altruistic resources.

- Evaluating Impact: We begin by examining what we should value and how to measure that value across different causes and beneficiaries.

- Longtermism and Global Catastrophic Risk: Next, we consider how to prioritize ways of achieving value in the context of long-term projects and their potential impact on future generations.

- Decision-Making Under Uncertainty: We then explore how to navigate uncertainty and think about the probabilities of possible future events, aiming to make sound decisions despite unpredictable outcomes.

- Investing Wisely: In this section, we address issues of philanthropic spending over time in the face of uncertainty and varying time preferences, seeking to optimize the allocation of resources.

- The Structure of Effective Altruism: Finally, we reflect on how we might build and strengthen the Effective Altruism movement while maintaining our primary focus on doing the most good.

Within each theme, we highlight specific areas where our work could be particularly impactful — topics especially relevant to our past projects, particularly foundational, aligned with the team's expertise, and active areas of current debate.

Evaluating Impact

Value and Moral Uncertainty

In order to find what could be the best strategy for doing good, we need to know what our objectives are and how to measure them. Suppose we want to maximize value. What are the central components of value? Some guiding questions with these components in mind are:

- What kinds of creatures matter? Do benefits to humans count the same as benefits to, say, chickens?

- Is it morally relevant whether a potential beneficiary is currently alive, will exist soon, or will exist in the far future? In what ways?

- How much should we care about adding or creating good things, as opposed to removing or preventing bad things?

- What contributes to the value of a state of affairs? How much do pleasure, pain, flourishing, justice, equity and other possible intrinsic goods matter when evaluating some outcome?

- Do we have a special duty to help those in extreme need first?

Getting things wrong

Consider just one example. If we are wrong about which creatures matter, we are at best wasting resources and at worst failing to protect those who need our help. Around 440 billion shrimp are raised and slaughtered annually with a systematic disregard for their suffering. If shrimp matter and we aren’t devoting sufficient resources to their protection, this is a preventable moral catastrophe. If shrimp do not matter, then we would be wasting resources that could otherwise be spent helping creatures that do matter.

Projects and Tools

- Beyond Parliaments

- Moral theories give a wide range of answers to the normative questions we posed above. Given that we lack full confidence in any single moral theory, how should we make decisions in the face of moral uncertainty? Rethink Priorities has developed a Moral Parliament Tool that allows users to explore a parliamentary approach to that very question. It assumes that the views over which we’re uncertain can be represented as parties in a moral parliament, where the size of each party reflects our confidence in the corresponding view. We are also interested in adding further allocation methods to the parliamentary tool as well as exploring non-parliamentary alternatives (such as randomly choosing among permissible options or deferring to experts). By developing and running plausibility tests, such as simulating straightforward scenarios where we feel relatively confident about the best decisions, we aim to compare these methods and understand their strengths and weaknesses.

- Taking a Stand on Morality

- So far we have refrained from making substantive claims about ideal parliament compositions and our proposed credences on any particular worldview. But, if we were forced to choose, what moral frameworks should we endorse, why, and to what extent? Do certain principles and goals imply certain credences in these views? This project aims to explore these questions by conducting substantial philosophical analysis, surveying expert views, and practically applying our existing tools to critically assess the merits of each moral framework.

- Animal Weights

- Do benefits to humans count the same as benefits to, say, chickens? Put differently, what moral weights should we assign to humans and other kinds of animals? In 2020, Rethink Priorities published the Moral Weight Series — a collection of five reports about these and related questions. In May 2021, Rethink Priorities launched the Moral Weight Project, which extended and implemented the research program that those initial reports discussed. We currently think answering these questions is important and sufficiently complicated to warrant focused attention, so we’re devoting an entire separate research agenda to it.
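
As a rough illustration of the parliamentary idea described under Beyond Parliaments, the sketch below splits a budget in proportion to the credence assigned to each view and contrasts it with a winner-take-all rule. This is not the Moral Parliament Tool's implementation; the function names, worldviews, credences, and projects are all hypothetical, and proportional allocation is only one of many possible allocation methods.

```python
def proportional_allocation(credences, favorite_project, budget):
    """Split a budget across projects in proportion to credence in each view.

    credences: dict mapping view name -> credence (need not sum to 1).
    favorite_project: dict mapping view name -> the project that view would fund.
    """
    total = sum(credences.values())
    allocation = {}
    for view, credence in credences.items():
        project = favorite_project[view]
        share = budget * credence / total
        allocation[project] = allocation.get(project, 0.0) + share
    return allocation


def winner_take_all(credences, favorite_project, budget):
    """Give the whole budget to the project of the single most credible view."""
    top_view = max(credences, key=credences.get)
    return {favorite_project[top_view]: float(budget)}


# Hypothetical credences and project preferences, for illustration only.
credences = {"utilitarian": 0.5, "kantian": 0.3, "virtue": 0.2}
favorite_project = {
    "utilitarian": "project_a",
    "kantian": "project_b",
    "virtue": "project_b",
}

print(proportional_allocation(credences, favorite_project, 100))
print(winner_take_all(credences, favorite_project, 100))
```

In this toy case the two rules disagree: the most credible single view favors one project, while the two less credible views together command an equal share of the budget for another. Simple scenarios like this are the kind of plausibility test that could be used to compare allocation methods.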

Value Measurements

In order to compare different kinds of charities, it is helpful to be able to assess their values along a single common scale. To do this, we may extend existing units measuring certain kinds of value from the contexts in which they were first defined for use elsewhere. This requires translating between categories of value: for instance, we may take disability-adjusted life years (DALYs) averted as our common currency, and equate any good we do (e.g. improving chicken lives, promoting art or culture, permitting humanity to colonize the stars) to some equivalent amount of DALYs averted.

If we are going to use a common currency, it makes sense to pick a unit that captures as wide a range of interventions as possible without requiring translation and without much controversy. In past work, such as the Cross-Cause Cost-Effectiveness Model tool, we used disability-adjusted life years (DALYs) to investigate the cost-effectiveness of altruistic projects from a wide range of areas. Other alternatives (QALYs, WELLBYs) have been proposed to have advantages that might merit preferring them, and our use of DALYs wasn’t intended as a repudiation of them.

Our choice of common-currency unit for cross-cause comparisons deserves more attention.

One reason to care about our unit is that we may be concerned that DALYs averted are not ideal for measuring the total value of the specific interventions they are most commonly used for[1]. If the methodology around assessing them were biased or caused researchers to overlook important consequences of interventions, then we should be wary about taking standard DALY assessments to comprise the total value. Rather than trying to rewrite DALY estimates for existing charities, this would suggest choosing a different unit that better encompasses their true value.

Second, we may find it more difficult to translate certain other kinds of value into an equivalent amount of DALYs averted than into some of its competitors. Finding equivalent amounts of value across different categories of goods is generally difficult, but may be easier for some units of value than others. Our preferred common currency unit should be one that is more amenable to translation for categories of value which it can’t directly measure.

Projects and Tools

- New Models for Cross-Cause Cost-Effectiveness

- We want to expand the Cross-Cause Cost-Effectiveness Model by incorporating additional models for both the existing areas (such as global health and development, animal welfare, and existential risk) and new areas under consideration. Additional models would allow for greater flexibility and accuracy in assessing cost-effectiveness, as they can account for different assumptions, parameters, and uncertainties within each domain. By introducing a range of more tailored models for various cause areas, users will be able to better explore the unique dynamics and potential impacts of diverse interventions, making this tool more versatile and realistic. The act of modeling them will also help provide insight about the kinds of value that are most challenging to capture using existing measures of value.

- Reconceptualizing Value in the Moral Parliament

- We are considering a reconceptualization of value within the Moral Parliament framework. Instead of using different parameters under categories like beneficiaries and types of beings, we would like to investigate systematically tracking value using alternative building blocks. For example, we might focus on the number of individuals affected and when they are affected, and then apply appropriate discounts based on factors like Disability-Adjusted Life Years (DALYs), Quality-Adjusted Life Years (QALYs), or Wellbeing-Adjusted Life Years (WELLBYs). This approach could offer a more quantifiable method for calculating value: multiply the number of individuals affected by the magnitude of the impact, then adjust by all relevant discounts.
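
The multiplication described in the last item can be written down directly. The following is a minimal sketch, assuming every discount is expressed as a multiplier between 0 and 1; the function name, argument names, and example numbers are hypothetical and not taken from any existing tool.

```python
from math import prod


def adjusted_value(num_individuals, impact_per_individual, discounts=()):
    """Value = individuals affected x impact per individual x product of discounts.

    Each discount is a multiplier in [0, 1] (assumption of this sketch);
    an empty tuple means no discounting is applied.
    """
    for d in discounts:
        if not 0.0 <= d <= 1.0:
            raise ValueError("each discount must lie in [0, 1]")
    return num_individuals * impact_per_individual * prod(discounts)


# Hypothetical example: 1,000 individuals, 0.1 units of benefit each,
# discounted by a 0.5 moral-weight factor and a 0.8 time-preference factor.
print(adjusted_value(1000, 0.1, (0.5, 0.8)))
```

Keeping each discount as a separate multiplier makes it easy to see which assumption drives a result, at the cost of treating the discounts as independent of one another.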

Worldview Diversification

Worldview diversification involves “putting significant resources behind each worldview that we find highly plausible,” and then allowing those resources to be utilized in ways that promote that worldview’s values (Karnofsky 2016). In EA, it has now become standard to divide the space into three main worldviews: Global Health and Development (GHD), Animal Welfare (AW), and Global Catastrophic Risks (GCR).

These worldviews vary across multiple dimensions, most notably the beneficiaries and timeframe of primary concern. However, there are many other ways that we could carve up the space into different worldviews. For example:

- First-order moral theories: we assign credences to utilitarianism, Kantianism, etc. and allocate resources to the projects favored by those moral views (as in RP’s Moral Parliament Tool)

- Risk attitudes: we divide projects according to whether they are sure things (low risk of backfire, high chance of positive outcome), risky (high risk of backfire or failure), or somewhere in between, and divide resources among the different categories of risk

- Types and quality of evidence: some worldviews require projects to meet a high evidentiary bar (e.g. strong empirical evidence, probability of positive effect >80%), while others have lower evidentiary standards and permit more speculative projects

- Existing philanthropic organizations and communities: we identify existing organizations (e.g. UNICEF, Humane League) or existing communities (e.g. global health researchers) and distribute resources in accordance with our judgments about their reputability

- Beliefs about contingent outcomes: we allocate resources across different epistemic worldviews by assigning credences to outcomes of interest (e.g. AI alignment is achievable, the US election turns out a certain way) and allocating resources in accordance with what we would deem best were those outcomes to obtain

Given the many possible conceptions of worldviews, we should ask whether our current scheme is among the best options. Dividing the space differently could have significant implications. For example, if we allocated resources based on fundamental normative ethical positions endorsed by the community, rather than the current cause area division, this might lead us to think that we should allocate significant resources to virtue ethics (endorsed by 7.3% of the community). This might, for example, imply giving significant funding to projects focused on improving institutional decision-making — an area that might promote virtues like wisdom, integrity, and good governance, potentially leading to systemic changes. In contrast, those types of projects seem unlikely to meet the funding bars for any of the current Global Health and Development, Animal Welfare, and Global Catastrophic Risks worldviews.

Projects and Tools

- Examining Alternative Worldview Schemes

- What would these different ways of categorizing worldviews mean for distributing philanthropic resources? What justification can be given for any particular conception of worldview diversification, and which conceptions fare best under that justification? By examining what different worldview diversification schemes would look like, we can shed light on possible sources of value or opportunity that we might miss under the current scheme.

- Bargaining Among Worldviews

- Suppose that we give resources to each worldview and then let them bargain with each other, making deals that they deem to be in their self-interest. What kinds of bargains would we expect worldviews to make, and how would this affect the overall distribution of resources? For example, AW and GHD might worry that some of their projects will cancel each other out (AW’s work against factory farms butts up against GHD’s economic development plans), so they agree to work together on climate change instead. GCR might judge that a certain risk must be addressed right now, so GHD lends them resources now to be paid back later. These hypothetical bargains can inform our initial distribution of resources and help us with longer-term planning. We can also examine whether the current distribution across GHD, AW, and GCR is one that would be arrived at by other kinds of worldviews (e.g. different kinds of risk attitudes) as a result of bargaining.

Longtermism and Global Catastrophic Risk

The Value of Survival and Flourishing

Suppose that we care not just about the present day but about the future of our world. Should we promote humanity’s survival or improve the trajectory of the future conditional on survival? Completely neglecting either of these dimensions is self-defeating, so how should we navigate tradeoffs between them? Previously, we released a report that investigates the value of extinction risk mitigation by extending a model developed by Ord, Thorstad and Adamczewski. Our work there uses more realistic assumptions, like sophisticated risk structures, variable persistence and new cases of value growth.[2] By enriching the base model, we were able to perform sensitivity analyses and can better evaluate when existential risk mitigation should, in expectation, be a global priority. One thing the report does not explore, however, is the value of increasing the value of the future directly.[3]
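
To make the tradeoff between survival and trajectory concrete, here is a deliberately simplified sketch. It is not the model from our report or from Ord, Thorstad and Adamczewski; it assumes discrete periods, a per-period extinction risk, and a per-period value conditional on survival. The function names, the constant-risk scenario, and all numbers are hypothetical.

```python
def expected_future_value(values, risks):
    """Sum each period's value weighted by the probability of surviving to it.

    values[t] is the value realized in period t if we are still around;
    risks[t] is the probability of extinction during period t.
    """
    survival = 1.0
    total = 0.0
    for value, risk in zip(values, risks):
        survival *= 1.0 - risk
        total += survival * value
    return total


def value_of_mitigation(values, risks, reduction, persistence):
    """Gain from cutting risk by a relative `reduction` for `persistence` periods."""
    mitigated = [
        r * (1.0 - reduction) if t < persistence else r
        for t, r in enumerate(risks)
    ]
    return expected_future_value(values, mitigated) - expected_future_value(values, risks)


# Hypothetical scenario: 100 periods, constant value 1.0 and constant risk 1%.
values = [1.0] * 100
risks = [0.01] * 100

# Survival-focused intervention: 10% relative risk reduction lasting 10 periods.
print(value_of_mitigation(values, risks, reduction=0.10, persistence=10))

# Trajectory-focused intervention: every period is 1% more valuable, risk unchanged.
improved = [v * 1.01 for v in values]
print(expected_future_value(improved, risks) - expected_future_value(values, risks))
```

Even in this toy setting, which intervention looks better depends heavily on how long the risk reduction persists and on the background level of risk, which is why sensitivity analysis matters.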

Getting things wrong

Imagine a scenario where we do enough to guarantee our survival. Humans survive until the last days of planet Earth and propagate in the Galaxy and beyond once our Solar System becomes uninhabitable. However, we were overly focused on survival. Those humans are rarely happy and often have bland or even difficult lives. Perhaps we set up semi-authoritarian states that created a kind of stable dystopia, and while they were one way of securing our survival, they crippled our ability to flourish. Or perhaps, in an alternative version of the story, we see humans surviving millennia with similar levels of welfare to the present day, but much higher levels of welfare would have been accessible, had we done enough to set the scene for a brighter future. Perhaps there was a way of promoting that much-improved future without sacrificing too large a probability of our survival, or perhaps it is worth sacrificing substantial safeguards to keep those more optimistic trajectories accessible to us.

Projects and Tools

- Modeling the Value of Improving the Future

- In our existing model we keep the value trajectory fixed. In reality, of course, there are many interventions we could carry out that seek to directly improve the trajectory of the future, and these might well be competitive with those that seek to increase our probability of survival, all things considered. Under what circumstances and assumptions should we prioritize interventions that only seek to improve the future? Are there any interventions that do well on both fronts: our survival and flourishing?

The report we published on extinction risk mitigation provided an interactive Jupyter Notebook for readers to explore scenarios, but this format may not be accessible enough for less technically inclined users. We see value in developing three separate tools to better address these topics.

- Value of Extinction Risk Mitigation Tool

- We suggest creating a fully fledged tool based on the extinction risk mitigation model and Jupyter Notebook, enhancing its accessibility by creating an online platform with sliders and buttons. This would allow users to easily adjust scenarios and visualize key variables like persistence and growth assumptions, quickly calculating values based on their inputs.

- Value of Future Trajectories Tool

- We aim to build a parallel tool focused on improving future value trajectories. This tool would allow users to explore interventions that enhance humanity’s potential directly, complementing the survival-focused tool by evaluating the expected value of increasing flourishing and overall well-being in the presence of extinction risks.

- Future Trajectory Mapper Tool

- We propose a future trajectory mapper. This interactive tool would enable users to specify assumptions or manually trace potential future trajectories, allowing them to capture, record, compare and update more nuanced beliefs about what the future could hold, factoring in economic and subjective components. For example, after using the tool to explore various scenarios, a user might realize that their initial belief in a promising future was overly optimistic once certain economic or social challenges were factored in. They could discover that without key interventions to promote flourishing (such as improving key institutional structures or maintaining minimal health standards) humanity might survive, but in a suboptimal state. This insight might shift their focus towards picking any low-hanging fruit that promotes flourishing instead of prioritizing existential security.

Together, these tools would provide a more comprehensive framework for examining how different interventions impact both humanity’s survival and its future flourishing.

Prioritization within Global Catastrophic Risks

When doing cause prioritization across major cause areas, we have considered how different cost-effectiveness estimates, risk profiles, and uncertainties should affect our decision-making. These considerations also matter when doing cause prioritization within a cause area. Projects will differ with respect to their cost effectiveness, probabilities of success and backfire, and how much uncertainty we have about key project parameters. Projects may be correlated with one another, in a way that will influence choices over project portfolios. How should we choose (portfolios of) projects within the Global Catastrophic Risks (GCR) cause area?

In the CURVE sequence, we discussed how attitudes about risk and uncertainty about future trajectories affect our assessment of the GCR cause area. We can apply these insights to evaluate when certain GCR projects are better or worse, by the lights of various risk attitudes and decision theories. We highlighted three kinds of risk aversion, involving desires to: avoid worst case scenarios, have a high probability of making a difference, and avoid situations where the chances are unknown. Some GCR projects have high EV but are risky in some or all of these senses, while others have lower EV but are safer bets. For example, in the field of biosecurity, investments in air filtration and PPE have low risk of backfire, predictable effects, and will be beneficial even if no catastrophic pandemic occurs. On the other end of the spectrum, engineering superviruses (in order to anticipate their effects and design vaccines) has a high risk of backfire, is unpredictable, and its possible value derives largely from rare outcomes where those catastrophic viruses emerge. Clearly, these projects will fare very differently when evaluated under risk averse versus risk neutral lenses.
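
The contrast between a safe bet and a risky, high-EV project can be illustrated with a small sketch. Each project is represented as a list of (probability, outcome) pairs, and three summary statistics stand in for risk neutrality, difference-making risk aversion, and worst-case avoidance. The third kind of risk aversion (avoiding unknown chances) is not modeled here. The projects and all numbers are hypothetical and are not estimates for any real intervention.

```python
def expected_value(lottery):
    """Probability-weighted average outcome (the risk-neutral criterion)."""
    return sum(p * x for p, x in lottery)


def prob_positive(lottery):
    """Probability that the project makes a positive difference."""
    return sum(p for p, x in lottery if x > 0)


def worst_case(lottery):
    """Worst outcome that has a nonzero chance of occurring."""
    return min(x for p, x in lottery if p > 0)


# Hypothetical projects as (probability, outcome) pairs.
safe_bet = [(0.9, 10.0), (0.1, 0.0)]
risky_bet = [(0.01, 5000.0), (0.94, 0.0), (0.05, -200.0)]

for name, lottery in [("safe", safe_bet), ("risky", risky_bet)]:
    print(name, expected_value(lottery), prob_positive(lottery), worst_case(lottery))
```

Under these made-up numbers the risky project wins on expected value, while the safe project wins on both the probability of making a difference and the worst case, so the ranking flips depending on the risk attitude applied.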

Categorizing various GCR projects by their risk profiles will assist decision-makers in choosing individual projects and giving portfolios that accord with their risk preferences, such as maximizing expected value, minimizing backfire risk, or maximizing the probability of having an effect. This work might also help to diversify the GCR cause area. Many people are drawn to GCR causes precisely because they seek to maximize EV and have judged longtermist interventions to be their best bets (though see the CURVE sequence on whether and when this is the case). The same considerations that cause people to prioritize GCR might also lead them toward high-risk, high-EV GCR projects. Self-selection of the risk tolerant into GCR might cause safer bet GCR projects to be undervalued or underexplored.

GCR projects are, by definition, designed to have long-term and significant consequences. Unlike small, local interventions, these projects are often designed to affect major causal structures and alter future trajectories. We therefore expect that GCR projects will often carry non-negligible risks. Some of these risks can be anticipated. For example, it’s clear how engineering superviruses could backfire. However, some of the risks come from unforeseeable consequences. What can we do to mitigate these risks that seem unpredictable by nature? Are there systematic ways of investigating them?

Projects and Tools

- Warning Signs for Catastrophic Side-Effects

- GCR projects are likely to affect major causal structures, and some of the risks they carry come from unforeseeable consequences. For example, who could have foreseen that the development of a new refrigerant would imperil life on earth by depleting the ozone layer? If that’s so, then how confident should we be that projects designed to secure the long-term future won’t have consequences that actually raise the risk of catastrophe? Even if we cannot, by definition, anticipate the unforeseeable consequences of particular GCR projects, we can make progress by identifying the kinds of projects that tend to have unanticipated consequences. We can investigate past episodes where projects had unforeseen effects and see what they have in common. We can think about the kinds of causal structures and interventions that tend to be less predictable. The goal is to develop a list of warning signs that a particular project carries the risk of unpredictable, significant, and potentially harmful consequences.

- Categorizing GCR Projects by their Risk Profile

- Categorizing various GCR projects by their risk profiles will assist decision-makers in choosing individual projects and giving portfolios that accord with their risk preferences, such as maximizing expected value, minimizing backfire, or maximizing the probability of having an effect. This work might also lead to diversifying the GCR cause area.

- Investigating Ordering Effects

- When evaluating the EV of longtermist interventions, future risk trajectories matter. Because outcomes in this area tend not to be independent of one another, the order of actions and their effects may have a significant effect on those trajectories. One reason is that catastrophic risks are so severe that their occurrence sharply curtails any future value. For example, pandemic preparedness efforts could do less good if a global nuclear war occurs before the next pandemic. Another reason is that efforts to reduce one kind of risk may change the probabilities of other kinds of risk. For example, the Time of Perils case for prioritizing GCR says that efforts to solve AI alignment have high EV because aligned AI would mitigate other existential risks. If so, we would not only have a reason to prioritize GCR, we would have a particular reason to prioritize AI alignment research among GCR projects. Given that the GCR cause area seems highly sensitive to ordering effects, it would be helpful to have more fine-grained examinations of how ordering influences expected value and risk-based assessments of projects.
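
The pandemic-before-war example above can be turned into a small calculation. The sketch below assumes constant, independent annual probabilities of a pandemic and of a global war, and assumes that preparedness only pays off if the next pandemic arrives before any war. These are simplifying assumptions for illustration; the function name and the probabilities are hypothetical.

```python
def prob_benefit_realized(p_pandemic, p_war, horizon):
    """Probability the next pandemic arrives within `horizon` years and before any war.

    Assumes constant, independent annual probabilities, with a war in a given
    year pre-empting a pandemic in that same year.
    """
    no_event_yet = 1.0
    total = 0.0
    for _ in range(horizon):
        no_war_this_year = 1.0 - p_war
        total += no_event_yet * no_war_this_year * p_pandemic
        no_event_yet *= no_war_this_year * (1.0 - p_pandemic)
    return total


# Hypothetical annual probabilities over a 50-year horizon.
baseline = prob_benefit_realized(p_pandemic=0.02, p_war=0.01, horizon=50)
after_war_risk_halved = prob_benefit_realized(p_pandemic=0.02, p_war=0.005, horizon=50)
print(baseline, after_war_risk_halved)
```

Multiplying these probabilities by the benefit of preparedness shows that lowering war risk first raises the expected value of pandemic work done afterwards, which is one simple sense in which ordering matters.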

Decision-Making Under Uncertainty

The Right Decision-Guiding Framework

Suppose we have figured out what components of value we care about. At this point, there are a number of available frameworks we could use to proceed, and often they will each lead to very different rankings of our options.

We have talked about maximizing value, but given that our actions concern the future, we are forced to think probabilistically about what amount of value will be observed, under what circumstances, and with what probabilities. The standard way of thinking suggests that instead of maximizing value we would maximize expected value, taking into account such probabilities. This decision framework is commonly referred to as Expected Value (or Utility) Theory. We have explored it, alongside some of its alternatives, and considered some of its implications.

Once we have some framework to make decisions in mind, we can proceed to evaluate altruistic efforts and their cost effectiveness. We have systematically provided several estimates from simulated models of a range of areas and projects, and offer a tool to visualize and investigate these.

We can go further and ask: “How should we spend our money as an individual or as a community in order to best satisfy our preferences on what matters?” Designing giving portfolios is crucial for aligning spending with these preferences, as it allows us to account for various goals, risk attitudes, and uncertainties.

How much heed to pay each decision framework is an open question, which is why we developed a Portfolio Builder Tool that allows users to build a giving portfolio based on (a) a budget, (b) attitudes toward risk, and (c) some assumptions about the features of the interventions being considered. In particular, this tool allows you to see how widely portfolios vary with decision theories and their parameters (e.g. parameters that reflect risk attitudes).

Projects and Tools

- Decision Theory vs Decision Procedure

- We can separate a decision procedure, which is an in-practice method of balancing risk and value to achieve your objectives, from a criterion of rationality, which is an in-principle account of what makes decisions rational. You can be a pure expected value maximizer in principle, accepting Expected Value (EV) maximization as a criterion of rationality, without thinking that ordinary agents in real-world decision-making contexts should use EV maximization to settle how to act. To what extent should these two things come apart? In what domains?

- Cost-Effectiveness Curves Expansions in the Portfolio Tool

- In addition to the existing (trimodal and isoelastic normal) distributions about intervention outcomes, we want to develop a broader range of probability distribution models to further enrich the Portfolio Builder Tool. This feature will allow users to explore how different outcome distributions might better capture the uncertainty and complexity surrounding altruistic interventions. By providing more options, users can experiment with models that reflect various assumptions about risk, diminishing returns, or unexpected outcomes. This flexibility will enable users to build more tailored portfolios, optimizing their decision-making based on a more realistic model.

- Evidence Aggregator Tool

- Decision-makers have to draw evidence from a variety of sources. What should we focus on when we aggregate this evidence? An ‘Evidence Aggregator’ tool might provide insight into the reliability of conclusions drawn from diverse sources of evidence, including models, expert testimony, and heuristic reasoning. It would provide an epistemic playground in which users can customize parameters such as the number of relevant considerations, their counterintuitiveness, discoverability, and the degree to which they are publicly known or formalizable. By simulating how different sources (experts, models, or individual reasoning) interact with these considerations, the tool would aim to provide insights into accuracy, susceptibility to bias, and overall confidence in the resulting conclusions. This ‘Evidence Aggregator’ could improve our understanding of how well models work under varying conditions, how to better incorporate expert testimony without falling into echo chambers, and how to calibrate uncertainty across different decision-making frameworks.

Getting things wrong

We don’t want to be blinded by the promise of certainty. But going too far in the opposite direction is a real danger. To give what we think is, right now, a clearly ridiculous example: our current altruistic efforts are not all concentrated on creating a time machine. But suppose there’s a near-infinitesimal yet positive chance that it would solve all of humanity's problems, present and future. Are we wrong not to obsess over building this machine? While it makes sense to use probabilistic frameworks, make educated guesses where evidence is lacking, and take expectations, we must exercise caution and not bet everything on false promises. Yes, we don’t just want to look for the keys where there’s light, but human imagination is vast, and many possible ways of wanting to do good are too detached from anything that will realistically bear on our world. All that wasted effort could have been put to good use in making progress on the most important issues of our time. Thus, choosing the right frameworks to think about probabilities and risk is a priority.
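
The time-machine worry can be shown with simple arithmetic. In the sketch below, a project with a minuscule chance of an astronomically large payoff beats a well-evidenced project on plain expected value, but not once outcomes below a probability threshold are ignored. Truncating small probabilities is only one candidate response, used here purely for illustration; the threshold, the payoffs, and the project names are hypothetical.

```python
def expected_value(lottery):
    """Probability-weighted average outcome."""
    return sum(p * x for p, x in lottery)


def truncated_expected_value(lottery, threshold):
    """Expected value that ignores outcomes whose probability is below `threshold`."""
    return sum(p * x for p, x in lottery if p >= threshold)


# Hypothetical (probability, outcome) pairs.
time_machine = [(1e-12, 1e15), (1 - 1e-12, 0.0)]
established_project = [(0.9, 100.0), (0.1, 0.0)]

print(expected_value(time_machine), expected_value(established_project))
print(
    truncated_expected_value(time_machine, 1e-6),
    truncated_expected_value(established_project, 1e-6),
)
```

The choice of threshold is itself a substantive judgment, which is part of why selecting a framework for probabilities and risk deserves careful attention.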

Cluelessness

One of the strongest challenges for ranking altruistic projects is that it’s really difficult to predict what the full effects of any particular intervention will be.[4] The problem is especially acute when we see multiple plausible stories about an intervention’s effects, which, once accounted for, could radically sway us away from or towards funding that intervention (see the meat-eater problem for a commonly used example).[5]

When the total effect of interventions is dominated by unintended consequences, it becomes all the more important to carefully consider what these might be. How should we track our best guesses on long-term effects and update them as more evidence emerges? To what extent should resource allocation be driven by these best guesses? Are traditionally short-term projects systematically undervalued relative to speculative long-termist projects because we dismiss any tenuous or speculative accounts of their possible effects, particularly when these effects involve uncertain causal chains or extend into the distant future? As we trade off resources between causes, how could we ensure that the scope of our speculation is consistent across projects and domains?

Getting things wrong

To lean on the commonly discussed meat-eater problem, consider the following situation. We successfully lift thousands or millions of people out of poverty. These people, previously unable to do so, can now afford to buy factory-farmed meat products. Already hundreds of millions of animals are being killed every day, and they live in some of the worst possible conditions known to us. Adding new meat-eaters could cause this number to grow considerably. We have alleviated suffering but created a second-order effect that could very likely outweigh the originally positive impact. What then? First, we must realize that these future and higher-order effects cannot simply be ignored and can often have outsized impact. Perhaps there are cases where this is a false tradeoff, and there are ways of guarding against the negative future effects. Perhaps interventions that looked promising at first glance are quite negative in expectation, once everything is on the table. Regardless, gathering evidence and swiftly updating our beliefs and actions can often make the difference between being potential heroes and becoming potential villains.

Projects and Tools

- Consistent Standards Across Causes

- Causes that fall under the short-termist bucket are often assessed only with their short-term measurable effects in mind. Longtermist causes, by contrast, are assessed by their possible long-term successes, which are usually unmeasurable and speculative. The stories told about them frequently purport to show paths of such high value that it outweighs their improbability. The lack of direct evidence in some areas might give rise to methodological injustices. Should we incorporate second-order effects in short-termist cost-effectiveness estimates? Might there be higher-order virtuous cycles (or negative externalities) that current short-termist cost-effectiveness estimates ignore? Part of the problem is that short-termist cost-effectiveness estimates (e.g., for Global Health interventions) are often directly compared with those from the longtermist bucket, which are obtained entirely with methods that would simply be disregarded as too detached from evidence when evaluating current human or animal interventions. How could we ensure that the scope of our speculation is consistent across projects and domains? To what extent do we currently observe inconsistent standards?

- Forms of Cluelessness

- The problem of cluelessness arises when an action’s overall value is dominated by its longterm effects, and we do not know the magnitude or direction of these effects. Scholars have distinguished between several types of cluelessness. Are these distinctions real, and do they call for different responses by decision-makers? We propose a critical taxonomy of kinds of cluelessness, how you might know what kind you’re in, and what (if anything) should be done about it.

For example, Greaves distinguishes between simple and complex cluelessness. In simple cases, we do not have evidence suggesting any bias in longterm effects, so our decisions should be dominated by the foreseen short term effects. In cases of complex cluelessness, we have conflicting evidence pointing to different kinds of bias, and we don’t know how to weigh these sources of evidence. However, even in simple cases, we can concoct stories about how our actions could have very good or bad consequences. What distinguishes good evidence about future trajectories (leading to complex cluelessness) from mere stories?[6] When will our evidence regarding longterm bias itself be biased (for example, if it’s much easier to think of scenarios with negative consequences than positive ones)?[7]

Thorstad and Mogensen argue that we should base decisions on short-term effects when stakes are smaller, longterm effects are less predictable, and our estimates of key variables are likely to be biased. How can we judge the stakes when the longterm effects are unknown? How can we tell when longterm effects are more or less unpredictable? When will our estimates of the future err in particular directions?

- Cluelessness Case Studies

- To understand how to respond to cluelessness, it would help to know when we’re clueless about our actions’ effects and what we’re clueless about (e.g. their magnitude, direction, or variance). How can we get a grip on these questions if we cannot predict the future? The past might serve as a guide here. With the benefit of hindsight, we can examine cases of significant unforeseen consequences and see what they have in common. When have people been significantly wrong about the magnitude or direction of effects? For example, what could we learn from Malthusian projections about population growth and their extreme implications? When have effects tended to dissipate, and when have they compounded? By looking at case studies of the unintended effects of past projects, we might discern patterns that can give us clues about the decisions we currently face.

Attitudes Towards High-Risk Opportunities

In pursuit of impact, we can have good reasons to depart from the most rigorous, evidence-based methods for assessing altruistic projects: sometimes the most promising projects cannot (even in principle) be the subject of RCTs. So, insofar as we’re motivated by impact, we have to tolerate making decisions in contexts where we lack much of the evidence we’d like to have. This, however, puts us in an uncomfortable position. The more speculative stories we tell to justify supporting certain causes may never come to fruition. How do we decide which stories are reasonable and probable enough to justify moving resources away from interventions that are clearly doing good? How averse should we be to our altruistic efforts being wasted on promising stories that never come to pass? Moreover, some projects won’t just come to nothing: they’ll backfire. Should we be particularly wary of taking risky actions that would, for example, often result in bad outcomes and sometimes result in very good ones? Even once we’ve determined our preferred level of risk aversion, how we should proceed will depend on the decision theory we choose to follow.

These issues are particularly salient for "hits-based giving," an approach to philanthropy that focuses on pursuing high-risk, high-reward opportunities, which raises several fundamental questions. First, how should we make decisions under extreme uncertainty, and to what degree should we be bothered by the threat of fanaticism? Second, hits-based giving involves allocating significant resources to projects with a high likelihood of failure. This raises ethical questions about the responsible use of philanthropic funds. Should conscientious decision-makers pursue high-risk strategies when more certain, if less impactful, alternatives exist? How should we weigh the potential opportunity costs of failed initiatives against the possibility of transformative success?

Projects and Tools

- Hits-Based Giving

- How and when is a hits-based giving strategy justified? The familiar justification is that we should judge projects by their expected value, and not by their probability of success. This will not appeal to risk-averse givers who do care about the probability of failure or backfire. However, the strategy can be, and has been, justified in other ways. It is predicated on the empirical claim that overall success largely depends on philanthropic unicorns, where “a small number of enormous successes account for a large share of the total impact — and compensate for a large number of failed projects” (Karnofsky 2016). Therefore, a hits-based portfolio that invests in diverse and independent high-risk, high-reward projects may have both high expected value and a high probability of success. Does this justification of hits-based giving only apply to giving portfolios for large philanthropic organizations? Or does it also entail that individual givers should invest in high-risk, high-reward projects over safer bets? Do the right kinds of diversification and independence emerge when we aggregate over individual hits-based donors? We will explore whether hits-based giving could be justified for individuals, including the risk averse. Does a commitment to EA's central principles, including prioritization, impartial altruism, and open truth-seeking, entail that we should do hits-based giving? Is pursuing other giving strategies as criticizable as giving to, say, a local arts charity over AMF?

- Intervention Level Portfolio Tool

- While our Portfolio Builder Tool could in principle be used to model individual charities within the same cause area, one should exercise caution in doing so. This is because the outcomes of these charities are likely not independent, and the algorithms to calculate decision-theoretic scores and draw overall outcome distributions assume independence. To explore the impact of relaxing this assumption, we propose developing a tool that allows users to specify interdependence (e.g., correlations) between outcomes. The way we evaluate the risks of opportunities can radically change once correlations are incorporated. This tool would help to identify cases where adding this layer of complexity is crucial, and where assuming independence serves as a reasonable first approximation.
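To illustrate why the independence assumption matters, here is a minimal sketch (not the Portfolio Builder Tool's actual algorithm) comparing a portfolio of independent long shots with one whose projects share a common point of failure. The "shared shock" model, the function names, and all numbers are assumptions chosen for illustration. Each project keeps the same overall chance of success in both cases, so the expected number of hits is identical; only the correlation differs.

```python
def p_any_hit_independent(n_projects: int, p_hit: float) -> float:
    """Chance that at least one of n independent projects succeeds."""
    return 1.0 - (1.0 - p_hit) ** n_projects


def p_any_hit_shared_risk(n_projects: int, p_hit: float, p_shared_failure: float) -> float:
    """Chance of at least one hit when a common shock can sink every project.

    With probability `p_shared_failure` all projects fail together. Otherwise
    they succeed independently, with a conditional success chance scaled up so
    that each project's overall chance of success is still `p_hit`.
    """
    if not 0.0 <= p_shared_failure < 1.0:
        raise ValueError("p_shared_failure must be in [0, 1)")
    p_hit_given_no_shock = p_hit / (1.0 - p_shared_failure)
    if p_hit_given_no_shock > 1.0:
        raise ValueError("p_hit is too large for this p_shared_failure")
    return (1.0 - p_shared_failure) * p_any_hit_independent(n_projects, p_hit_given_no_shock)


# Illustrative, made-up inputs: 20 projects, each with a 10% chance of success.
n_projects, p_hit = 20, 0.10
independent = p_any_hit_independent(n_projects, p_hit)
correlated = p_any_hit_shared_risk(n_projects, p_hit, p_shared_failure=0.30)

print(f"P(at least one hit), independent projects: {independent:.3f}")
print(f"P(at least one hit), shared 30% failure risk: {correlated:.3f}")
```

Even though the expected number of successes is the same in both portfolios, the correlated one is noticeably more likely to produce no hits at all, which is exactly the kind of difference a tool that models interdependence would surface.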

Getting things wrong

Imagine an EA organization aiming to prevent future pandemics by diversifying its investments across a range of biosecurity projects. They allocate funds to vaccine development initiatives, global surveillance systems, personal protective equipment production, and policy advocacy programs. On the surface, the organization appears to be well-diversified, investing in different aspects of biosecurity. However, a critical oversight could be that all these projects heavily depend on government policies remaining favorable toward biosecurity and pandemic prevention efforts. Suppose that this strong dependence on government support is real.

In the future, a significant political change leads to a new government that neglects biosecurity, perhaps due to budget constraints or differing ideological views. In that world, all funded projects encounter significant obstacles around the same time due to their shared dependency on government policies. The organization's diversification strategy fails because the risks were not independent; they were correlated through the reliance on government support. This means resources were depleted without achieving the desired impact on pandemic prevention, setting back the organization's mission and wasting donor contributions.

In this case, true diversification requires investing in projects with independent risk factors, and simply operating in different niches of the same field may not suffice if underlying dependencies exist. The lesson is that even if a social movement diversifies its spending, it can fail to appreciate underlying correlations — like dependence on government support — that make its diversification strategy less effective and much riskier than expected or desired.

Investing Wisely

Resource Allocations Over Time

What is the optimal rate and timing of altruistic spending? Even if we knew how to optimize our spending across interventions, we would still need to determine what the best spending schedule is. There is evidence that we live in an impatient world, which we can leverage by patiently investing before disbursing.

Though there is room for the current models of optimal spending schedules to be developed further, we believe that there is a sizable and pressing gap between current spending rates and the rates that our best current understanding would recommend. This presents an opportunity: we should meet donors where they are and help them figure out what a more optimal, yet realistic, spending rate would be.

Projects and Tools

- Optimal Spending Rate Tool

- A tool here would attempt to build an interactive experience that extracts key lessons from patient philanthropy work and applies them in order to recommend a particular investment versus spending rate for that year, given the user’s inputs. To ensure feasibility, we might need to hold certain dimensions constant. These could include assumptions about market returns, inflation rates, or typical donor preferences. By nature, any tool will be imperfect, as we reduce technical nuance to enhance usability. We will critically assess the extent to which the tool's recommendations remain valid and useful. The tool's limitations might also shed light on which assumptions are commonly made in simplified models, and how those can go wrong.

- The Last Twenty Years: A Spending Retrospective

- Given what we now know about the cost-effectiveness of newly discovered interventions, what approaches would have actually had the highest payoffs in retrospect? Are the answers generally robust to different worldviews and preferences? What can we deduce about the value of waiting for research about what to fund and can we quantify that value to help us choose optimal spending rates in the future? Are there any lessons we can draw to improve future spending rates?

Philanthropists would do well to develop the best possible frameworks that model dynamic giving and carefully consider the often neglected dimensions. We explore three of these dimensions as an example: impact investing, investing forever, and empirical spending rates.

Impact Investments

Entirely self-interested investors have a clear objective: to maximize their financial gains. In contrast, altruistic investors have an opportunity not only to grow their wealth but also to channel those resources into profitable projects that directly add value to the world. We might call this 'impact investing'. The ideal investment opportunity would excel in both expected returns and impact. However, there are often trade-offs involved, and altruistic investors must strike a balance between higher returns and meaningful impact. For example, investing in renewable energy can reduce greenhouse emissions while still being profitable, though it might offer lower returns compared to investments in oil.

Projects and Tools

- Ranking Opportunities for Impact Investing

- How can we achieve more balanced portfolios if altruistic investors engage in impact investing? Ideally, we would have a clear menu of investment opportunities with some notion of the expected returns, the risk profile, and the expected impact achieved per dollar invested. We might want a larger, systematic, and continued evaluation effort that mirrors the role GiveWell plays in the charity space. Such an effort faces several challenges, but, perhaps because of the current lack of information, impact investing may be largely undervalued in the Effective Altruism space.

Risks of Investing Forever

One of the problems that patient philanthropy carries with it is the risk of never achieving impact. The world could end, the money could vanish, the money could be spent selfishly, or an overcautious investor could miss out on enormous amounts of value in the name of patience.

Projects and Tools

- How to Not Invest Forever

- It is clear that investing in perpetuity would be self-defeating: yes, the wealth could have grown by an arbitrary amount, but it wouldn’t have actually been used effectively. Consider a brute-force guard against never giving. This could be a non-dynamic self-imposed threshold for a minimal amount of giving (e.g., 1% per year), which is suboptimal in some scenarios but could increase the value achieved from the philanthropic portfolio in expectation. Another option could be to have a ‘deadline’ by which the entirety of the wealth should be given away. Are these methods ever defensible from first principles? How can we model the considerations that lead to picking one threshold over another? What, if any, are the viable alternatives? In particular, are there more sophisticated mechanisms we could design that avoid some of the arbitrariness? Both philosophically and practically, what are the merits of tying our hands in order to avoid the temptation to invest forever?
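The two guards described above (a minimum giving floor and a deadline) can be compared in a toy model. The sketch below is our own simplification under loudly stated assumptions: a constant annual return, a constant annual risk that the whole fund is lost before it can be given, and an outcome measured in expected dollars given rather than in impact. The function name and every number are hypothetical.

```python
def expected_dollars_given(
    initial_wealth: float,
    annual_return: float,
    giving_floor: float,
    annual_loss_risk: float,
    horizon_years: int,
    give_remainder_at_deadline: bool,
) -> float:
    """Expected total dollars given under a simple floor and/or deadline policy.

    Each year, a fraction `giving_floor` of current wealth is given away, the
    rest is invested at `annual_return`, and with probability
    `annual_loss_risk` the whole fund is lost (e.g. expropriated or misspent).
    If `give_remainder_at_deadline` is True, whatever survives is given away
    at the end of the horizon.
    """
    wealth = initial_wealth
    survival_probability = 1.0
    expected_total = 0.0
    for _ in range(horizon_years):
        gift = giving_floor * wealth
        expected_total += survival_probability * gift
        wealth = (wealth - gift) * (1.0 + annual_return)
        survival_probability *= 1.0 - annual_loss_risk
    if give_remainder_at_deadline:
        expected_total += survival_probability * wealth
    return expected_total


# Illustrative, made-up parameters: 5% return, 1% annual loss risk, 50 years.
common = dict(initial_wealth=1.0, annual_return=0.05, annual_loss_risk=0.01, horizon_years=50)

invest_forever = expected_dollars_given(giving_floor=0.0, give_remainder_at_deadline=False, **common)
floor_only = expected_dollars_given(giving_floor=0.01, give_remainder_at_deadline=False, **common)
deadline_only = expected_dollars_given(giving_floor=0.0, give_remainder_at_deadline=True, **common)

print(f"Invest forever (no floor, no deadline): {invest_forever:.2f}")
print(f"1% floor, no deadline:                  {floor_only:.2f}")
print(f"No floor, give everything at year 50:   {deadline_only:.2f}")
```

The policy with neither guard gives nothing by construction, which is the self-defeating case. Which of the other two policies looks better depends heavily on the assumed return, loss risk, and horizon, and on how one values giving at different times, none of which this sketch settles.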

The Philanthropic Spending Landscapes

In many ways, the key question for an altruistic investor is: how impatient is philanthropic spending today?[8] If, by their lights, others are too impatient, then there’s a clear opportunity to invest and give later. Similarly, if the world isn’t spending fast enough on the altruist’s priorities, they should step in and increase their spending rate.

Projects and Tools

- Current Spending Rates

- Different models and preferences give rise to different optimal spending rates, but they all need external actors’ overall spending rate as an input in order to recommend any sensible investment rate. We propose thoroughly investigating this very rate. What are the estimated combined spending rates of, first, effective altruists and, second, the world at large? To make this tractable, we suggest concentrating on spending rates in specific areas, e.g. global health. Given these, how fast do current models recommend we should disburse our investments, and under what amount of patience or future discounting?

- Projected Future Spending Rates

- Ideally, we would also investigate future projected spending rates given the historical trends. Could these change because of predictable shocks or other considerations, like a wealthy individual entering or leaving the space? Under what simulated scenarios would models recommend spending sooner or later than expected?

Getting things wrong

Had Benjamin Franklin been impatient with his philanthropy, in all likelihood, he would have had considerably less impact. Despite legal challenges and cripplingly outdated investment strategies, his decision to set up long-term trusts for Boston and Philadelphia in 1790, with initial investments of about $200k (in today’s dollars), ultimately produced gifts of around $6.5m and $1.5m in 1890, in present value terms, and $5m and $2.25m in 1990. Franklin’s patient approach, though strikingly imperfect, significantly multiplied the value of his original investments, likely exceeding what immediate spending would have achieved (see a more detailed discussion in section 5.4 here).

The problem of allocation schedules is commonly applied to capital, but it naturally arises with other resources too, like labor. As time passes, and a social movement grows, how should we allocate the movement’s talent? To answer this question, we need to estimate how much talent will be available and what we can do with it. In the case of a social movement, this will entirely depend on the movement building dynamics, which deserve separate attention.

The Structure of Effective Altruism

Movement Building and Infrastructure

A basic resource allocation question we should ask is ‘how much should a social movement invest in its own growth and infrastructure?’ This question can be situated within the context of Effective Altruism where there is no intrinsic value placed on movement size. Instead, the movement characteristics are only important insofar as they help or hinder the ultimate objectives: making the world better, doing the most good. Given this, what proportion of EA talent and capital should be devoted to recruitment and community infrastructure versus directly doing good? In practice, organizations often do not know whether to increase or decrease spending on recruitment and infrastructure, and they are in serious need of tools that allow them to navigate the inevitable trade-offs in their spending — especially as the messaging shifts back and forth on whether talent or funding is the main constraint.

Several existing frameworks — such as DIPYs, CPBCs, CECCs, HEAs — attempt to measure EA labor. However, accurately determining the value of a unit of movement-aligned labor requires addressing the fundamental movement building budgeting question: How should we allocate resources — both financial and human — between building the movement and doing good directly?

We think that the work by Sempere and Trammell constitutes a useful starting point for a theoretically principled model tackling this key question.[9] However, the model is unfinished, making it impossible to apply it to make any actionable recommendations to the funders in this space.[10] We would like to see full, actionable models for optimal spending on movement building.

We think that the best current models could be developed into more realistic frameworks and potentially be adapted to a particular funder’s considerations. For example, there is considerable heterogeneity in individuals’ earnings and talents. How should the model best capture these differences?

Projects and Tools

- Value of Movement Building Tool

- Having tied up the model’s loose ends, we could use known numerical techniques to simulate this problem and code a tool that decision-makers could use to obtain recommended courses of action. It is intuitive that we should not spend all philanthropic resources on growing social altruistic movements, since those are only instrumentally valuable. However, it is also clear that neglecting them is far from ideal. Exactly how to navigate trading off doing direct good versus meta-movement work deserves nuanced attention.

When considering specific ways to invest in movement building, we must also consider the kind of structure and governance of the movement that we should invest in. Should it lean more towards centralized governance, which provides clear and consistent direction, or should it embrace a decentralized structure that fosters innovation and adaptability? How can we strike a balance between these two approaches to ensure maximum impact? A critical consideration is the risk of value drift: how can we establish robust accountability and transparency mechanisms to keep all levels of the movement aligned with its core values? Would regular reviews and dynamic feedback systems be sufficient to detect and address any undesirable shifts in priorities? How many resources should be devoted to incorporating diverse perspectives within leadership and decision-making bodies to enhance the movement's resilience and adaptability? How much should we tie our ignorant hands versus maintain high amounts of freedom and flexibility within the movement? Ultimately, it seems particularly important to investigate the design of a governance model that is flexible enough to evolve with the movement while steadfastly maintaining its foundational principles and goals.[11]

Projects and Tools

- Social Movement Coordination Game

- A healthy movement depends on good coordination and effective information-sharing processes. To facilitate this, we propose developing a game that immerses the user in a dynamic environment where some actors have aligned objectives, while others do not. The user, as one actor in this pool, must navigate the challenges of responding to various actions and strategically share key signals and information with those who are aligned. The tool is designed to highlight potential pitfalls, especially those arising from poor coordination, inadequate information flow, or ineffective strategies when facing other strategic players. By simulating these interactions, the tool aims to illustrate worst-case scenarios and how to avoid them. The initial version is envisioned as a game-like experience, where users can engage with these complex dynamics and receive insightful lessons at the conclusion of the exercise.

Related to the question of how a movement should spend its resources are considerations around how the public will perceive the spending. Self-investment is important for sustaining and growing the movement. However, too much self-investment may start to look like self-dealing. Both for brand health and the movement’s capacity to influence key leaders and populations, funding certain projects, at particular historical times, in particular ways, could be very damaging. This is especially salient for Effective Altruism, given its emphasis on cost-effectiveness. Effective Altruism has taken a reputational hit for some of the money that it has spent on movement building.

Projects and Tools

- The Role of Public Perception in Movement Building

- How should we value reputation in models of movement building? How much value should we be willing to sacrifice for a boost in reputation? More generally, how much does a social movement’s success depend upon public perception? We are especially interested in extending movement building models to incorporate reputation and model its effects. There are also opportunities to integrate survey work with existing modeling approaches. For example, surveying both EA and non-EA people might help us calibrate the parameters that represent the importance of movement building.

Getting things wrong

If there’s a stigma about being part of a social movement, especially among big donors, this could hinder movement building. Relatedly, if there’s a stigma about pursuing weird positions, big donors might feel pressure to steer the movement away from this.

Weirdness in Social Movements

Public perception of a movement is heavily influenced by how strange its central tenets are judged to be and how laudable its public actions are judged to be. For example, some common beliefs and actions among members of Effective Altruism are significantly outside of the mainstream, while others (such as giving to alleviate poverty) are much more in line with common sentiment. What are the ways to track how alien and weird a movement can be? When might weirdness become a sweeping consideration? Which of the movement’s views and actions are deemed weird? How does perceived weirdness affect approval by both the public and people within the Effective Altruism movement? Are different kinds of weirdness reputationally or epistemically relevant in different ways? We believe it is important to improve our understanding of how public-facing work is perceived and how much (or little) this should affect our behavior.

Additionally, unpopular choices and opinions might be so for good reasons. Does the fact that a view is weird give you a reason to doubt its truth? If so, how strong is the reason? When, concretely, is weirdness enough to defeat your justification for believing that you ought to act on a view? Given the importance of both how the movement is perceived and the epistemic weight that poor public feedback might carry, we think there is space to build formal frameworks and tools that allow us to better study and track this, like constructing a weirdness-scale.

Effective Altruism tends to look for neglected cause areas, areas in which there might be opportunities for cost-effective interventions that other actors are missing out on. However, the fact that other actors have failed to invest in a space also raises the question: why aren't they investing? Do they know something that we don't?

When various agents in an interaction have private information, their actions reveal, in part, some of that private information. Therefore, we should update not only on their behavior but also what their behavior tells us about what they privately know. Failing to do so can lead to irrational behaviors (such as the winner's curse, in which winners of auctions routinely overpay because they fail to conditionalize on the fact that low bidders believe the object to have less value).

When other major actors refuse to invest in a project or cause area, this can provide information that they know something we don't about its value or cost-effectiveness. Whether and how much it does depends on facts about their private information. Have they investigated the cause area? Do other actors have good common sense about how such things go? Do we think that their neglect comes from bias or ignorance?
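The winner's curse mentioned above can be seen in a small simulation. This is a minimal sketch under assumptions of our own: a common true value, bidders who each receive an unbiased but noisy estimate of it, and naive bidders who simply bid their estimate. The function name and parameters are hypothetical.

```python
import random
import statistics


def average_winner_overpayment(
    n_bidders: int,
    true_value: float,
    noise_sd: float,
    n_auctions: int,
    seed: int = 0,
) -> float:
    """Average amount by which the winning naive bid exceeds the true value."""
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    overpayments = []
    for _ in range(n_auctions):
        # Every bidder's estimate is unbiased: true value plus zero-mean noise.
        estimates = [rng.gauss(true_value, noise_sd) for _ in range(n_bidders)]
        # Naive bidders bid their estimate, so the most optimistic one wins.
        winning_bid = max(estimates)
        overpayments.append(winning_bid - true_value)
    return statistics.mean(overpayments)


lone_bidder = average_winner_overpayment(n_bidders=1, true_value=100.0, noise_sd=10.0, n_auctions=10_000)
ten_bidders = average_winner_overpayment(n_bidders=10, true_value=100.0, noise_sd=10.0, n_auctions=10_000)

print(f"Average overpayment with 1 bidder:   {lone_bidder:.2f}")
print(f"Average overpayment with 10 bidders: {ten_bidders:.2f}")
```

Although no individual estimate is biased, the winning estimate is systematically too high once there are several bidders. By analogy, a funder who is the only one willing to back a project may hold the most optimistic estimate among many informed actors, and should consider adjusting for that.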

Getting things wrong

Here’s the worst case scenario. EAs identify a philanthropic project that seems cost-effective but has been completely neglected by other philanthropic and governmental actors. This neglect causes the EAs to devote even more resources to the project; the absence of other actors can only mean that there’s more marginal value to be had! However, the reason that these other actors have neglected the project is that they all investigated it and found key flaws (e.g. scientific work on the cause area is all faulty, crucial local actors are unreliable, etc.). By failing to take the neglect of other actors into account, EAs do not discount their cost-effectiveness estimates at all and continue to pour money into a lost cause.

Projects and Tools

- Weird Views Tool

- We propose a tool that assesses the perceived "weirdness" and popularity of ideas common within Effective Altruism, helping users evaluate how far certain beliefs or actions deviate from mainstream perspectives and the potential risks or rewards of promoting them. This tool would help users track public perception over time and see it evolve along different dimensions (e.g. see it broken down for different demographics and for specific projects or clusters of projects). Additionally, this project could track the endorsement of different ideas within EA to allow a better comparison of EAs’ views and outside groups. By integrating public opinion data and models of idea acceptance, the tool would evaluate the “weirdness” of specific projects or ideas, reflecting their unconventionality and riskiness. As part of this project we might investigate how these different aspects of perceived “weirdness” correlate with public approval and strategic outcomes, helping users determine when advocating for unconventional views is valuable and when it may be too damaging. The tool might also help uncover any thoughtful reasons why mainstream philanthropic efforts neglect some areas.

- Cursed Equilibria and The Value of Cause Areas

- We want to explore whether EAs (members of Effective Altruism) routinely underestimate how much others' actions are correlated with reliable private information. Using methods such as cursed equilibrium models (Eyster and Rabin 2005), we can examine how much we might be over- (or under-)valuing various cause areas. We can also study how much coordination and information-sharing with actors outside Effective Altruism is necessary to mitigate worries about private information and whether we are currently doing enough of it.

Acknowledgements

The post was written by Rethink Priorities' Worldview Investigations Team. Thank you to Marcus Davis and Oscar Delaney for their helpful feedback. This is a project of Rethink Priorities, a global priority think-and-do tank, aiming to do good at scale. We research and implement pressing opportunities to make the world better. We act upon these opportunities by developing and implementing strategies, projects, and solutions to key issues. We do this work in close partnership with foundations and impact-focused non-profits or other entities. If you're interested in Rethink Priorities' work, please consider subscribing to our newsletter. You can explore our completed public work here.

- ^

A DALY burden estimate of a condition represents the loss of value of a year of life with that condition relative to full health. Such estimates are generated from people's judgments about the trade-offs they would make between years of healthy life and years of life with the condition. Measuring effects by the number of DALYs an intervention prevents may cause us to overlook impact outside of physical wellbeing.

- ^

See the report for clarifications of these terms.

- ^

There are several other extensions that we think would be very fruitful, and offer some examples in the report’s concluding remarks.

- ^

Greaves contrasts this with ‘simple cluelessness’, which is thought to be less of an issue because for every imagined bad effect of some trivial action that we could perform, there’s roughly an opposite good thing we can also imagine.

- ^

This subsection has strong and evident ties to the 'Longtermism and Global Catastrophic Risk' section as well as 'Decision-Making Under Uncertainty'.

- ^

If one thinks there's a distinction between simple and complex cluelessness, then there's some relevant difference between mere hypotheticals and plausible hypotheticals for which we have some evidence. The task then becomes studying that threshold for plausibility.

- ^

We do not mean ‘bias’ in the statistical sense.

- ^

This question should consider not just the overall impatience of philanthropic spending but also how aligned that spending is with your own values. Non-aligned philanthropic efforts may have limited impact from your perspective, but unless you regard their value as truly negligible, you should also factor them into your strategy after having adjusted for their lesser alignment with your priorities.

- ^

The work is also described informally here.

- ^

As the authors note: “Ideally, we would simply solve the patient movement’s optimization problem. However, this does not appear to be possible using standard dynamic optimization techniques.”

- ^

In particular, it seems that having a centralized structure allows for strategic decisions and bargains among worldviews. Without it, there's no good reason to think that different worldviews would trust others to hold up their end of bargains.

SummaryBot @ 2024-11-14T21:53 (+3)

Executive summary: Rethink Priorities outlines a research agenda focused on optimizing the allocation of limited altruistic resources across five key areas: impact evaluation, longtermism, decision-making under uncertainty, investment strategies, and movement building.

Key points:

- Evaluating impact requires resolving questions about moral weights, value measurement, and worldview diversification to compare different interventions' effectiveness.

- Longtermist considerations demand balancing survival vs flourishing, with careful attention to risk profiles and ordering effects between different catastrophic risks.

- Decision-making frameworks must address deep uncertainty and cluelessness while maintaining consistent standards across cause areas.

- Investment strategies need to optimize spending rates over time while managing risks of investing forever and balancing direct impact vs returns.

- Movement building requires careful resource allocation between growth and direct impact, while managing public perception and organizational structure.

This comment was auto-generated by the EA Forum Team. Feel free to point out issues with this summary by replying to the comment, and contact us if you have feedback.

NickLaing @ 2024-11-15T07:38 (+2)

This sounds great and I instinctively really like it. My reservation is that the research will end up being somewhat biased towards animal welfare, considering that has been a major research focus and passion for most of these researchers for a long time.

My weak suggestion (I know probably not practical) would be to try and intentionally hire some animal welfare skeptic philosophy people to join the team to provide some balance and perhaps fresh perspectives.

David_Moss @ 2024-11-15T13:55 (+10)

Thanks Nick!

My reservation is that the research will end up being somewhat biased towards animal welfare, considering that has been a major research focus and passion for most of these researchers for a long time.

This seems over-stated to me.

WIT is a cross-cause team in a cross-cause organization. The Animal Moral Weight project is one project we've worked on, but none of our subsequent projects (the Cross-Cause Model, the Portfolio Tool, the Moral Parliament Tool, the Digital Consciousness Model, and our OpenAI project on Risk Alignment in Agentic AI) is specifically animal related. We've also elsewhere proposed work looking at human moral weight and movement building.

You previously suggested that the team who worked on the Moral Weight project was skewed towards people who would be friendly to animals (though the majority of the staff on the team were animal scientists of one kind or another). But all the researchers on the current WIT team (aside from Bob himself) were hired after the completion of the Moral Weight project. In addition, I personally oversee the team and have worked on a variety of cause areas.

Also, regarding interpreting our resource allocation projects: the key animal-related inputs to these are the moral weight scores. And our tools purposefully give users the option to adjust these in line with their own views.

NickLaing @ 2024-11-15T17:46 (+2)

I might well have overstated it. My argument here though is based on previous work of individual team members, even before they joined RP, not just the nature of the previous work of the team as part of RP. All 5 of the team members worked publicly (googlably) to a greater or lesser extent on animal welfare issues before joining RP, which does seem significant to me when the group is undertaking such an important project, one that involves assessing impact, prioritisation and funding questions across a variety of causes.

It might be a "cross cause team", but there does seem to be a bent here.

1. Animal welfare has been at the center of Derek and Bob's work for some time.

2. Arvon founded the "Animal welfare library" in 2022 https://www.animalwelfarelibrary.org/about

3. You and Hayley worked (perhaps to a far lesser extent) on animal welfare before joining Rethink too. On Hayley's faculty profile it says "With her interdisciplinary approach and diverse areas of expertise, she helps us understand both animal minds and our own."

And yes, I agree that you, as the person leading the team, seem to have the least work history in this direction.

This is just to explain my reasoning above, I don't think there's necessarily intent here and I'm sure the team is fantastic - evidenced by all your high quality work. Only that the team does seem quite animal welfar-ey. I've realised this might seem a bit stalky and this was just on a super quick google. This may well be misleading and yes I may well be overstating.

David_Moss @ 2024-11-15T18:43 (+8)

4 out of 5 of the team members worked publically (googlably) to a greater or lesser extent on animal welfare issues even before joining RP

I think this risks being misleading, because the team have also worked on many non-animal related topics. And it's not surprising that they have, because AW is one of the key cause areas of EA, just as it's not surprising they've worked on other core EA areas. So pointing out that the team have worked on animal-related topics seems like cherry-picking, when you could equally well point to work in other areas as evidence of bias in those directions.

For example, Derek has worked on animal topics, but also digital consciousness, with philosophy of mind being a unifying theme.

I can give a more detailed response regarding my own work specifically, since I track all my projects directly. In the last 3 years, 112/124 (90.3%)[1] of the projects I've worked on personally have been EA Meta / Longtermist related, with <10% animal related. But I think it would be a mistake to conclude from this that I'm longtermist-biased, even though that constitutes a larger proportion of my work.

Edit: I realise an alternative way to cash out your concern might not be in terms of bias towards animals relative to other cause areas, but rather that we should have people on both sides of all the key cause areas or key debates (e.g. we should have people on both extremes of being pro- and anti-animal, pro- and anti-AI, pro- and anti-GHD, and also presumably on other key questions like suffering focus etc).

If so, then I agree this would be desirable as an ideal, but (as you suggest) impractical (and perhaps undesirable) to achieve in a small team.

- ^

This is within RP projects, if we included non-RP academic projects, the proportion of animal projects would be even lower.

NickLaing @ 2024-11-18T07:58 (+2)

It's not that they've just worked on animal welfare. It's that they have been animal rights advocates (which is great). Derek was the web developer for The Humane League for 5 years... Which is fantastic and I love it but towards my point...

Thanks for the clarification. I was indeed trying to say option a: that there's a "bias towards animals relative to other cause areas". Yes, I agree it would be ideal to have people on different sides of debates in these kinds of teams, but that's often impractical and not my point here.

I still think most independent people who would come in with a more balanced (less "cherry picking") approach than mine to look at the team's work histories are likely to find them to be at least moderately bent towards animals.

I also agree you as the leader aren't in that category.

David_Moss @ 2024-11-18T15:23 (+14)

I was indeed trying to say option a: that there's a "bias towards animals relative to other cause areas". Yes, I agree it would be ideal to have people on different sides of debates in these kinds of teams, but that's often impractical and not my point here.

Thanks for clarifying!

- Re. being biased in favour of animal welfare relative to other causes: I feel at least moderately confident that this is not the case. As the person overseeing the team I would be very concerned if I thought this was the case. But it doesn't match my experience of the team being equally happy to work on other cause areas, which is why we spent significant time proposing work across cause areas, and being primarily interested in addressing fundamental questions about how we can best allocate resources.[1]

- I am much more sympathetic to the second concern I outlined (which you say is not your concern): we might not be biased in favour of one cause area against another, but we still might lack people on both extremes of all key debates. Both of us seem to agree this is probably inevitable (one reason: EA is heavily skewed towards people who endorse certain positions, as we have argued here, which is a reason to be sceptical of our conclusions and probe the implications of different assumptions).[2]

Some broader points:

- I think that it's more productive to focus on evaluating our substantive arguments (to see if they are correct or incorrect) than trying to identify markers of potential latent bias.

- Our resource allocation work is deliberately framed in terms of open frameworks which allow people to explore the implications of their own assumptions.

- ^

And if the members of the team wanted to work solely on animal causes (in a different position), I think they'd all be well-placed to do so.

- ^

That said, I don't think we do too badly here, even in the context of AW specifically (e.g. Bob Fischer has previously published on hierarchicalism, the view that humans matter more than other animals).