[Fiction] Improved Governance on the Critical Path to AI Alignment by 2045

By Jackson Wagner @ 2022-05-18T15:50 (+20)

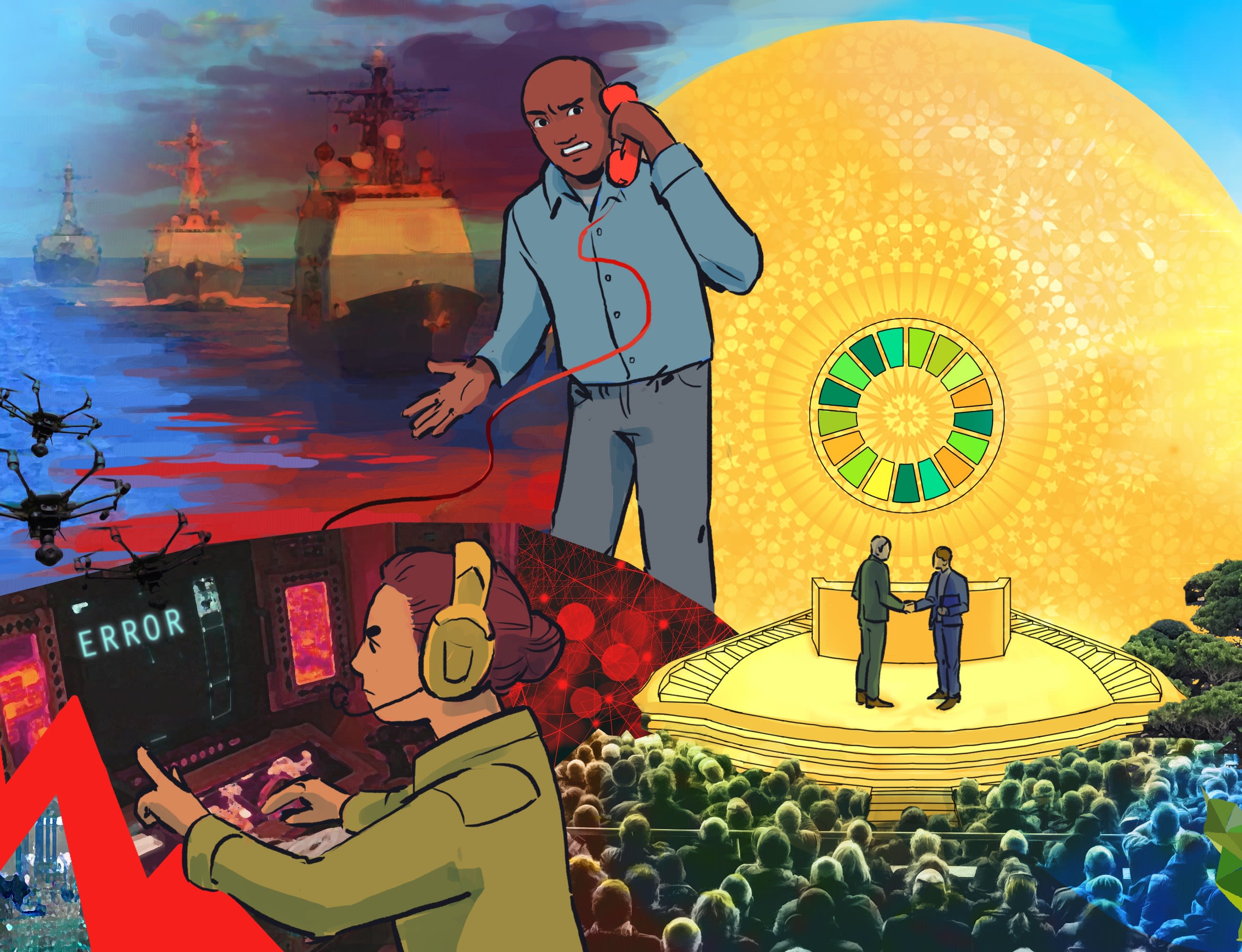

Summary: This post showcases my winning entry in the Future of Life Institute's AI worldbuilding contest. It imagines:

- How we might make big improvements to decisionmaking via mechanisms like futarchy and liquid democracy, enhanced by Elicit-like research/analysis tools.

- How changes could spread to many countries via competition to achieve faster growth than rivals, and via snowball effects of reform.

- How the resulting, more "adequate" civilization could recognize the threat posed by misalignment and coordinate to solve the problem.

Motivation for our scenario:

Human civilization's current ability to coordinate on goals, make wise decisions quickly, and capably execute big projects seems inadequate to the challenge of safely developing aligned AI. Evidence for this claim can be found practically all around you, but the global reaction to covid-19 is especially clarifying. Quoting Gwern:

The coronavirus was x-risk on easy mode: a risk (global influenza pandemic) warned of for many decades in advance, in highly specific detail, by respected & high-status people like Bill Gates, which was easy to understand with well-known historical precedents, fitting into standard human conceptions of risk, which could be planned & prepared for effectively at small expense, and whose absolute progress human by human could be recorded in real-time happening rather slowly over almost half a year while highly effective yet cheap countermeasures like travel bans & contact-tracing & hand-made masks could—and in some places did!—halt it. Yet, most of the world failed badly this test; and many entities like the CDC or FDA in the USA perversely exacerbated it, interpreted it through an identity politics lenses in willful denial of reality, obstructed responses to preserve their fief or eek out trivial economic benefits, prioritized maintaining the status quo & respectability, lied to the public “don’t worry, it can’t happen! go back to sleep” when there was still time to do something, and so on. If the worst-case AI x-risk happened, it would be hard for every reason that corona was easy. When we speak of “fast takeoffs”, I increasingly think we should clarify that apparently, a “fast takeoff” in terms of human coordination means any takeoff faster than ‘several decades’ will get inside our decision loops. Don’t count on our institutions to save anyone: they can’t even save themselves.

Around LessWrong, proposed AI x-risk-mitigation strategies generally attempt to route around this problem by aiming to first invent an aligned superintelligent AI, then use the superintelligent AI to execute a "pivotal action" that prevents rival unaligned AIs from emerging and generally brings humanity to a place of existential security.

This is a decent Plan A -- it requires solving alignment, but we have to solve that eventually in almost every successful scenario (including mine). It doesn't require much else, making it a nice and simple plan. One problem is that a massive "pivotal action" might work less well if AI capabilities develop more smoothly and are distributed evenly among many actors, à la "slow takeoff" scenarios.

But some have argued that we might be neglecting "Plan B" strategies built around global coordination. The post "What An Actually Pessimistic Containment Strategy Looks Like" considers Israel's successful campaign to stop Iran from developing nuclear weapons, and argues that activist efforts to slow down AGI research at top tech companies might be similarly fruitful. Usually (including in my worldbuilding scenario), it's imagined that the purpose of such coordination is to buy a little more time for technical alignment research to happen. But for a more extreme vision of permanently suppressing AI technology, we can turn to the fictional world of Dath Ilan, or to Nick Bostrom's "easy nukes" thought experiment exploring how humanity could survive if nuclear weapons were absurdly easy to make.

The idea that we should push for improved governance in order to influence AI has its problems. It takes a long time, so it might be very helpful by 2070 but not by 2030. (In this respect it is similar to other longer-term interventions like gene-editing to create more scientific geniuses or general EA community-building investments.) And of course you still have to solve the technical challenge of AI alignment in the end. But improving governance also has a lot to recommend it, and it's something that can ideally be done in parallel with technical alignment research -- complementing rather than substituting for it, worked on by different people who have different strengths and interests.

Finally, another goal of the story was to express the general value of experimentation and governance competition. I think that existing work in the cause area of "improving institutional decisionmaking" is too heavily focused on capturing the commanding heights of existing prestigious institutions and then implementing appropriate reforms "from the inside". This is good, but it should be complemented by more radical small-scale experimentation on the "outside" -- things like charter cities and experimental intentional communities -- which could test out wildly different concepts of ideal governance.

Below, I've selected some of the most relevant passages from my contest submission. To get more of the sci-fi utopian flavor of what daily life would be like in the world I'm imagining (including two wonderful short stories written by my friend Holly, a year-by-year timeline, and more), visit the full page here. Also, the Future of Life Institute would love it if you submitted feedback on my world and the other finalists -- how realistic do you find this scenario, how much would you enjoy living in the world I describe, and so forth.

Excerpts from my team's contest submission:

Artificial General Intelligence (AGI) has existed for at least five years but the world is not dystopian and humans are still alive! Given the risks of very high-powered AI systems, how has your world ensured that AGI has at least so far remained safe and controlled?

Ultimately, humanity was able to navigate the dangers of AGI development because the early use of AI to automate government services accidentally kicked off an “arms race” for improved governance technology and institution design. These reforms improved governments’ decision-making abilities, enabling them to recognize the threat posed by misalignment and coordinate to actually solve the problem, implementing the “Delhi Accords” between superpowers and making the Alignment Project civilization’s top priority.

In a sense, all this snowballed from a 2024 Chinese campaign to encourage local governments to automate administrative processes with AI. Most provinces adopted mild reforms akin to Estonia’s e-governance, but some experimented with using AI economic models to dynamically set certain tax rates, or using Elicit-like AI research-assistant tools to conduct cost-benefit analyses of policies, or combining AI with prediction markets. This went better than expected, kickstarting a virtuous cycle:

- Even weak AI has a natural synergy with many government functions, since it makes predicting / planning / administering things cheap to do accurately at scale.

- Successful reforms are quickly imitated by competing regions (whether a neighboring city or a rival superpower) seeking similar economic growth benefits.

- After adopting one powerful improvement to fundamental decisionmaking processes, it’s easier to adopt others (e.g., maybe the new prediction market recommends replacing the electoral college with a national popular vote using approval voting).

One thing leads to another, and soon most of the world is using a dazzling array of AI-assisted, prediction-market-informed, experimental institutions to govern a rapidly-transforming world.

The dynamics of an AI-filled world may depend a lot on how AI capability is distributed. In your world, is there one AI system that is substantially more powerful than all others, or a few such systems, or are there many top-tier AI systems of comparable capability?

Through the 2020s, AI capabilities diffused from experimental products at top research labs to customizable commercial applications much as they do today. Thus, new AI capabilities steadily advanced through different sectors of the economy.

The 2030s brought increasing concern about the power of AI systems, including their military applications. Against a backdrop of rapidly improving governance and a transforming international situation, governments started rushing to nationalize most top research organizations, and some started to restrict supercomputer access. Unfortunately, this rush to monopolize AI technology still paid too little attention to the problem of alignment; new systems were deployed all the time without considering the big picture.

After 2038’s Flash Crash War, the world woke up to the looming dangers of AGI, leading to much more comprehensive consolidation. With the Delhi Accords, all top AI projects were merged into an internationally-coordinated Apollo-Program-style research effort on alignment and superintelligence. Proliferation of advanced AI research/experimentation outside this official channel is suppressed, semiconductor supply chains are controlled, etc. Fortunately, the world transitioned to this centralized approach a few years before truly superhuman AGI designs were discovered.

As of 2045, near-human and “narrowly superhuman” capabilities are made broadly available through APIs for companies and individuals to use; hardware and source code are kept secure. Some slightly-superhuman AGIs, with strict capacity limits, are being cautiously rolled out in crucial areas like medical research and further AI safety research. The most cutting-edge AI designs exist within highly secure moonshot labs for researching alignment.

How has your world avoided major arms races and wars?

Until 2038, geopolitics was heavily influenced by arms races, including the positive "governance arms race" described earlier. Unfortunately, militaries also rushed to deeply integrate AI. The USA & China came to the brink of conflict during the “Flash Crash War”, when several AI systems on both sides of the South China Sea responded to ambiguous rival military maneuvers by recommending that their own forces be deployed in a more aggressive posture. These signaling loops between rival AI systems led to an unplanned, rapidly escalating cycle of counter-posturing, with forces being re-deployed in threatening and sometimes bizarre ways. For about a day, both countries erroneously believed they were being invaded by the other, leading to intense panic and confusion until the diplomatic incident was defused by high-level talks.

Technically, the Flash Crash War was not caused by misalignment per se (rather, like the 2010 financial Flash Crash, by the rapid interaction of multiple complex automated systems). Nevertheless, it was a fire-alarm-like event which elevated "fixing the dangers of AI systems" to a pressing #1 concern among both world leaders and ordinary people.

Rather than the lukewarm, confused response to crises like Covid-19, the world's response was strong and well-directed thanks to the good-governance arms race. Prediction markets and AI-assisted policy analysts quickly zeroed in on the necessity of solving alignment. Adopted in 2040, the Delhi Accords began an era of intensive international cooperation to make AI safe. This put a stop to harmful military & AI-technology arms races.

In the US, EU, and China, how and where is national decision-making power held, and how has the advent of advanced AI changed that?

The wild success of China's local-governance experiments led to freer rein for provinces. Naturally, each province is different, but each now uses AI to automate basic government services, and advanced planning/evaluation assistants to architect new infrastructure and evaluate policy options.

The federal government's remaining responsibilities include foreign relations and coordinating national projects. The National People's Congress now mostly performs AI-assisted analysis of policies, while the Central Committee (now mostly provincial governors) has regained its role as the highest governing body.

In the United States, people still vote for representatives, but Congress debates and tweaks a basket of metrics rather than passing laws or budgets directly. This weighted index (life expectancy, social trust, GDP, etc.) is used to create prediction markets where traders bet on whether a proposed law would raise or lower the index. Subject to a handful of basic limits (laws must be easy to understand, respect rights, etc.), laws with positive forecasts are automatically passed.

This system has extensively refactored the US government, creating both wealth and the wisdom needed to tackle alignment.
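The story doesn't spell out the market mechanics. Below is a minimal sketch assuming the standard futarchy design ("vote on values, bet on beliefs"): Congress fixes metric weights, and a pair of conditional markets prices the expected index if a law passes versus if it is rejected. The weights, the 0..1 normalization, the `margin` buffer, and all names here are hypothetical illustrations, not details from the scenario.

```python
from dataclasses import dataclass

# Hypothetical weights Congress might assign to each metric (illustrative only).
# Each metric is assumed to be pre-normalized to the range 0..1.
WEIGHTS = {"life_expectancy": 0.4, "social_trust": 0.3, "gdp": 0.3}


def welfare_index(metrics: dict[str, float], weights: dict[str, float] = WEIGHTS) -> float:
    """Combine normalized metrics into the single weighted index that markets forecast."""
    return sum(weight * metrics[name] for name, weight in weights.items())


@dataclass
class ConditionalForecast:
    """Market-implied expected index value under each outcome for one proposed law.

    In a conditional market, trades in the branch that does not happen are
    called off and refunded, so each price reflects only that scenario.
    """
    if_passed: float
    if_rejected: float


def should_pass(forecast: ConditionalForecast, meets_basic_limits: bool, margin: float = 0.0) -> bool:
    """Pass a law only if it clears the basic limits and is forecast to raise the index."""
    if not meets_basic_limits:
        return False
    # The optional margin is a hypothetical buffer against noisy, thinly traded markets.
    return forecast.if_passed - forecast.if_rejected > margin
```

For example, if markets price the index at 0.62 conditional on passage and 0.60 conditional on rejection, `should_pass(ConditionalForecast(0.62, 0.60), True)` returns `True`; the same law fails automatically if it violates the basic limits.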

The EU has taken a cautious approach, but has led in other areas:

- Europe has created an advanced hybrid economy of "human-centered capitalism", putting an automated thumb on the scale of nearly every transaction to favor richer social connections and greater daily fulfillment.

- Europe has also created the most accessible, modular ecosystem of AI/governance tech for adoption by other countries. Brazil, Indonesia, and others have benefited from incorporating some of the EU's open-source institutions.

What changes to the way countries govern the development, deployment and/or use of emerging technologies (including AI) played an important role in the development of your world?

After the world woke up to the dangers of powerful misaligned AI in 2038, nations realized that humanity is bound together by the pressing goal of averting extinction. Even if things go well, the far future will be so strange and wonderful that the concept of geopolitical “winners” and “losers” is impossible to apply.

This situation, like a Rawlsian veil of ignorance, motivated the superpowers to cooperate on the 2040 Delhi Accords. Key provisions:

- Nationalizing and merging top labs to create the Alignment Project.

- Multi-pronged control of the “AI supply chain” (inspired by uranium & ICBM controls) to enforce nonproliferation of powerful AI — nationalizing semiconductor factories and supercomputer clusters, banning dangerous research, etc.

- Securing potential attack vectors like nuclear command systems and viral synthesis technology.

- API access and approval systems so people could still develop new applications & benefit from prosaic AI.

- Respect for rights, plus caps on inequality and the pace of economic growth, to ensure equity and avoid geopolitical competition.

Although the Accords are an inspiring achievement, they are also provisional by design: they exist to help humanity solve the challenge of developing safe superintelligent machines. The Alignment Project takes a multilayered approach -- multiple research teams pursue different strategies and red-team each other, layering many alignment techniques (myopic oracle wrappers, adversarial AI pairs, human-values-trained reward functions, etc.). With luck, these enable a “limited” superintelligence not far above human abilities, as a tool for further research to help humanity safely take the next step.

What is a new social institution that has played an important role in the development of your world?

New institutions have been as impactful over recent decades as near-human-level AI technology. Together, these trends have had a multiplicative effect — AI-assisted research makes evaluating potential reforms easier, and reforms enable society to more flexibly roll out new technologies and gracefully accommodate changes. Futarchy has been transformative for national governments; on the local scale, "affinity cities" and quadratic funding have been notable trends.

Since the 2030s, the increasing fidelity of VR has allowed productive remote work even across international and language boundaries. Freed from needing to live where they work, young people choose places that cater to their particular interests. Small towns seeking growth and investment advertise themselves as open to newcomers; communities (built around religious beliefs, hobbies like surfing, subcultures like heavy-metal fans, etc.) select the most suitable town and use assurance contracts to subsidize a critical mass of early adopters to move and create the new hub. This has turned previously indistinct towns into a flourishing cultural network.
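The post only names assurance contracts. Here is a minimal sketch of the basic idea, assuming a simple design in which would-be movers pledge conditionally and a subsidy pool is split equally among them only if a threshold is reached. The class name, the equal-split rule, and the parameters are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class RelocationAssuranceContract:
    """Pledges to move only bind if a critical mass signs up (hypothetical design).

    threshold: minimum number of movers the community wants before committing.
    subsidy_pool: money funders promise, paid out only if the threshold is met.
    """
    threshold: int
    subsidy_pool: float
    movers: list[str] = field(default_factory=list)

    def pledge(self, person: str) -> None:
        """Record a conditional pledge to move; duplicate pledges are ignored."""
        if person not in self.movers:
            self.movers.append(person)

    def settle(self) -> dict[str, float]:
        """Return each bound mover's subsidy, or an empty dict if the contract fails.

        If the threshold is not reached, every pledge lapses: nobody has to move
        and funders keep their money, so early pledgers risk nothing.
        """
        if len(self.movers) < self.threshold:
            return {}
        share = self.subsidy_pool / len(self.movers)
        return {person: share for person in self.movers}
```

The key design choice is that a pledge costs nothing unless enough others also commit, which removes the first-mover risk that otherwise keeps a new community hub from forming.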

Meanwhile, Quadratic Funding (like a hybrid of a local budget and a donation-matching system, usually funded by land value taxes) helps support community institutions like libraries, parks, and small businesses by generously matching small-dollar donations from many citizens.
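The post doesn't give the matching rule. A minimal sketch, assuming the standard formula from Buterin, Hitzig, and Weyl's "A Flexible Design for Funding Public Goods": a project's ideal total is the square of the sum of the square roots of its donations, and the public subsidy covers the gap. Scaling all subsidies down proportionally when the matching pool (here standing in for land-value-tax revenue) is too small is one common approach, assumed here; the function name is hypothetical.

```python
import math


def quadratic_match(contributions: dict[str, list[float]], matching_pool: float) -> dict[str, float]:
    """Compute each project's matching subsidy under quadratic funding.

    contributions maps a project name to the list of individual donations it received.
    matching_pool is the public money available for matching.
    """
    # Ideal subsidy: (sum of square roots of donations)^2 minus what donors already gave.
    # Mathematically this is never negative; max() only guards against float rounding.
    ideal = {
        project: max(0.0, sum(math.sqrt(d) for d in donations) ** 2 - sum(donations))
        for project, donations in contributions.items()
    }
    total_ideal = sum(ideal.values())
    if total_ideal <= 0:
        return {project: 0.0 for project in contributions}
    # If the pool can't cover every ideal subsidy, scale all of them down proportionally.
    scale = min(1.0, matching_pool / total_ideal)
    return {project: subsidy * scale for project, subsidy in ideal.items()}
```

For example, a library with 100 donors giving $1 each has an ideal subsidy of (100 × 1)² − 100 = $9,900, while a park with a single $100 donor gets (√100)² − 100 = $0 in matching funds. The mechanism rewards breadth of support rather than the size of individual donations.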

The most radical expression of institutional experimentation can be found in the constellation of "charter cities" sprinkled across the world, predominantly in Latin America, Africa, and Southeast Asia. While affinity cities experiment with culture and lifestyle, cities like Prospera Honduras have attained partial legal sovereignty, giving them the ability to experiment with innovative regulatory systems much like China’s provinces.

What is a new non-AI technology that has played an important role in the development of your world?

Improved governance technology has helped societies to better navigate the “bulldozer vs vetocracy” axis of community decision-making processes. Using advanced coordination mechanisms like assurance contracts, and clever systems (like Glen Weyl’s “SALSA” proposal) for pricing externalities and public goods, it’s become easier for societies to flexibly make net-positive changes and fairly compensate anyone affected by downsides. This improved governance tech has made it easier to build lots of new infrastructure while minimizing disruption. Included in that new infrastructure is a LOT of new clean power.

Solar, geothermal, and fusion power provide most of humanity’s energy, and they do so at low prices thanks to scientific advances and economies of scale. Abundant energy enables all kinds of transformative conveniences:

- Cheap desalination changes the map, allowing farming and habitation of previously desolate desert areas. Whole downtown areas of desert cities can be covered with shade canopies and air-conditioned with power from nearby solar farms.

- Carbon dioxide can be captured directly from the air at scale, making climate change a thing of the past.

- Freed from the pressing need to economize on fuel, vehicles like airplanes, container ships, and self-driving cars can simply travel at higher speeds, getting people and goods to their destinations faster.

- Indoor farming using artificial light becomes cheaper; instead of shipping fruit from the opposite hemisphere, people can enjoy local, fresh fruit year-round.

What’s been a notable trend in the way that people are finding fulfillment?

The world of 2045 is rich enough that people don’t have to work for a living — but it’s also one of the most exciting times in history, running a preposterously hot economy as the world is transformed by new technologies and new ways of organizing communities, so there’s a lot to do!

As a consequence, careers and hobbies exist on an unusual spectrum. On one end, people who want to be ambitious and help change the world can make their fortune by doing all the pressing stuff that the world needs, like architecting new cities or designing next-generation fusion power plants.

With so much physical transformation unleashed, the world is heavily bottlenecked on logistics / commodities / construction. Teams of expert construction workers are literally flown around the world on private jets, using seamless translation to get up to speed with local planners and getting to work on what needs to be built using virtual-reality overlays of a construction site.

Most people don't want to hustle that much, and 2045's abundance means that increasing portions of the economy are devoted to just socializing and doing/creating fun stuff. Rather than tedious, now-automated jobs like "waiter" or "truck driver", many people get paid for essentially pursuing hobbies -- hosting social events of all kinds, entering competitions (like sailing or esports or describing hypothetical utopias), participating in local community governance, or using AI tools to make videos, art, games, & music.

Naturally, many people's lives are a mix of both worlds.

A Note of Caution

The goal of this worldbuilding competition was essentially to tell the most realistic possible story under a set of unrealistic constraints: that peace and prosperity will abound despite huge technological transformations and geopolitical shifts wrought by AI.

In my story, humanity lucks out and accidentally kick-starts a revolution in good governance via improved institution design – this in turn helps humanity make wise decisions and capably shepherd the safe creation of aligned AI.

But in the real world, I don’t think we’ll be so lucky. Technical AI alignment, of course, is an incredibly difficult challenge – even for the cooperative, capable, utopian world I’ve imagined here, the odds might still be against them when it comes to designing “superintelligent” AI, on a short schedule, in a way that ends well for humanity.

Furthermore, while I think that a revolutionary improvement in governance institutions is indeed possible (it’s one of the things that makes me feel most hopeful about the future), in the real world I don’t think we can sit around and just wait for it to happen by itself. Ideas like futarchy need support to persuade organizations, find winning use-cases, and scale up to have the necessary impact.

Nobody should hold up my story, or the other entries in the FLI’s worldbuilding competition, as a reason to say “See, it’ll be fine – AI alignment will work itself out in the end, just like it says here!”

Rather, my intent is to portray:

- In 2045, an inspiring, utopian end state of prosperity, with humanity close to achieving a state of existential security.

- From 2022 to 2044, my vision of what’s on the most-plausible critical path taking us from the civilization we live in today to the kind of civilization that can capably respond to the challenge of AI alignment, in a way that might be barely achievable if a lot of people put in a lot of effort.

_will_ @ 2023-01-06T18:40 (+5)

How we might make big improvements to decisionmaking via mechanisms like futarchy

On this point, see ‘Issues with Futarchy’ (Vaintrob, 2021). Vaintrob writes the following, which I take to be her bottom line: ‘The EA community should not spend resources on interventions that try to promote futarchy.’