AI Safety Newsletter #70: Automated Warfare and AI Layoffs

By Alice Blair, Dan H, Center for AI Safety @ 2026-03-24T15:30 (+2)

This is a linkpost to https://newsletter.safe.ai/p/ai-safety-newsletter-70-ai-layoffs

Also, a new open letter advocating for pro-human values and control over AI development

Welcome to the AI Safety Newsletter by the Center for AI Safety. We discuss developments in AI and AI safety. No technical background required.

In this edition, we discuss AI automation and augmentation of warfare and technology jobs, as well as a new open letter outlining pro-human values in the face of AI development.

Listen to the AI Safety Newsletter for free on Spotify or Apple Podcasts.

We’re Hiring. We’re hiring an editor! Help us surface the most compelling stories in AI safety and shape how the world understands this fast-moving field.

Other opportunities at CAIS include: Head of Public Engagement, Principal of Special Projects, Special Projects Manager, Program Manager, and other roles. If you’re interested in working on reducing AI risk alongside a talented, mission-driven team, consider applying!

AI-Driven Layoffs

Several large software companies such as Amazon and Meta are planning to cut tens of thousands of employees, citing increased productivity with AI. This continues a growing but contested trend of layoffs in sectors where AI performs best, such as software development and marketing.

Layoffs affect almost half of some companies. Meta recently announced plans to let over 15,000 employees go, around 20% of the company’s headcount, after months of AI-related layoffs across the technology sector. Recently, Atlassian cut 10% of their workforce (about 1,600 people) and Block reduced their headcount by 40% (about 4,000 people). These cuts come after Amazon’s January announcement that it would be cutting an additional 16,000 jobs. When combined with previous waves of Amazon layoffs, this comes to 10% of Amazon’s corporate workforce lost in reductions that the company attributes to AI.

Automation is mixed. Although benchmark scores for automating knowledge work remain low on average, software engineering specifically is being automated rapidly inside companies, driven by Claude Opus 4.6 and OpenAI Codex 5.4.

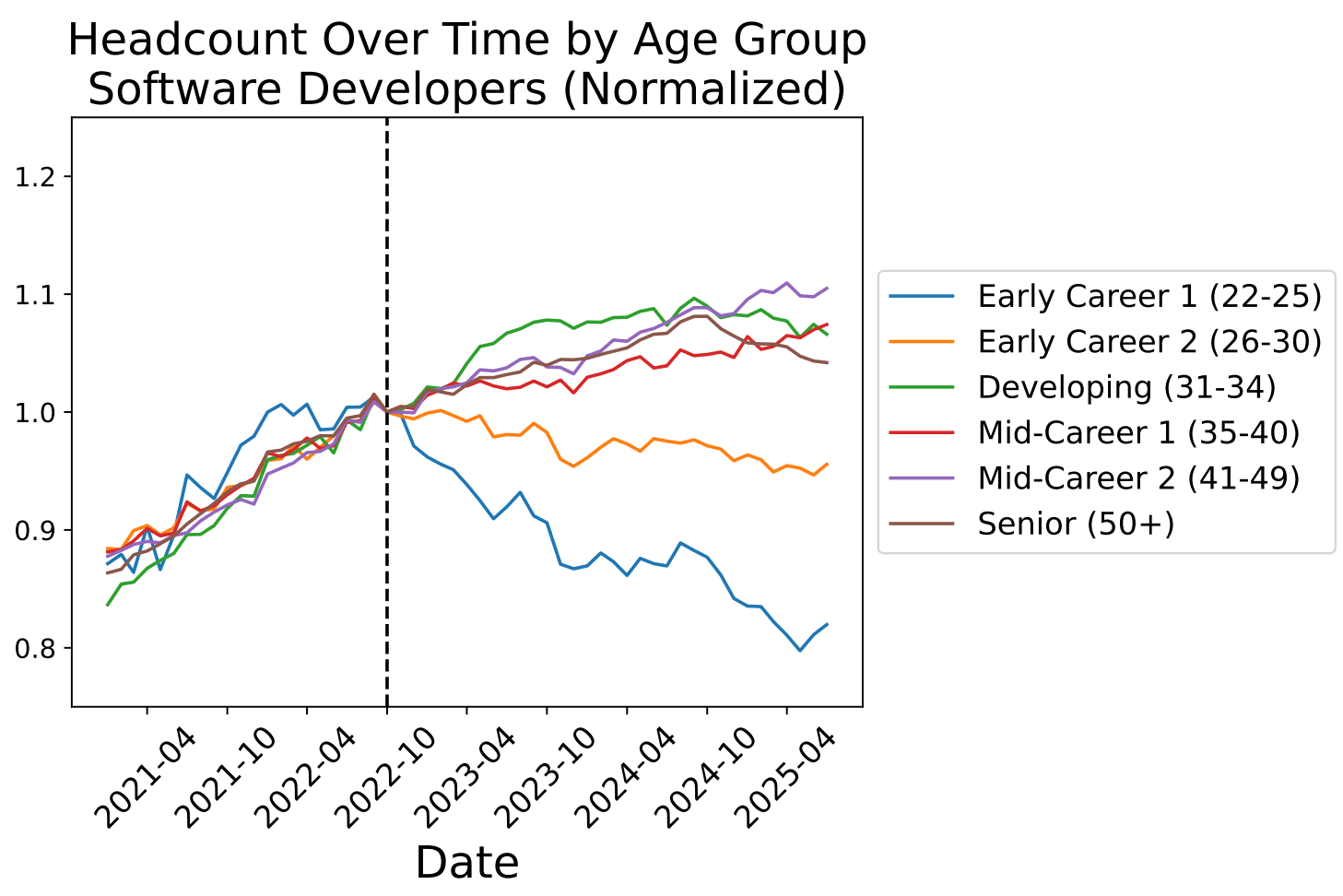

Cuts disproportionately affect early-career workers. AIs have been causing consistent cuts in the most at-risk parts of the software engineering workforce since the release of ChatGPT. More recent models surprise even highly experienced developers with their abilities, but require oversight to be useful.

Future job cuts. A Fortune article pushes back, arguing that companies overstate the effect of AI on routine layoffs to appeal to investors. An essay from Citrini Research argues that, if AI job loss continues, it could cause cascading failures throughout the economy. It seems plausible that over 20% of software engineers in the Bay Area will be laid off this year, which would be a Great Depression-level downturn for software engineers.

AI Automation of Warfare

Last newsletter, we covered the ongoing conflict between the Department of War (DoW) and Anthropic over the use of AI in autonomous weapons and domestic surveillance. While fully autonomous AI weapons are not currently in use, recent news shows that significant parts of military operations are automated and augmented with AI.

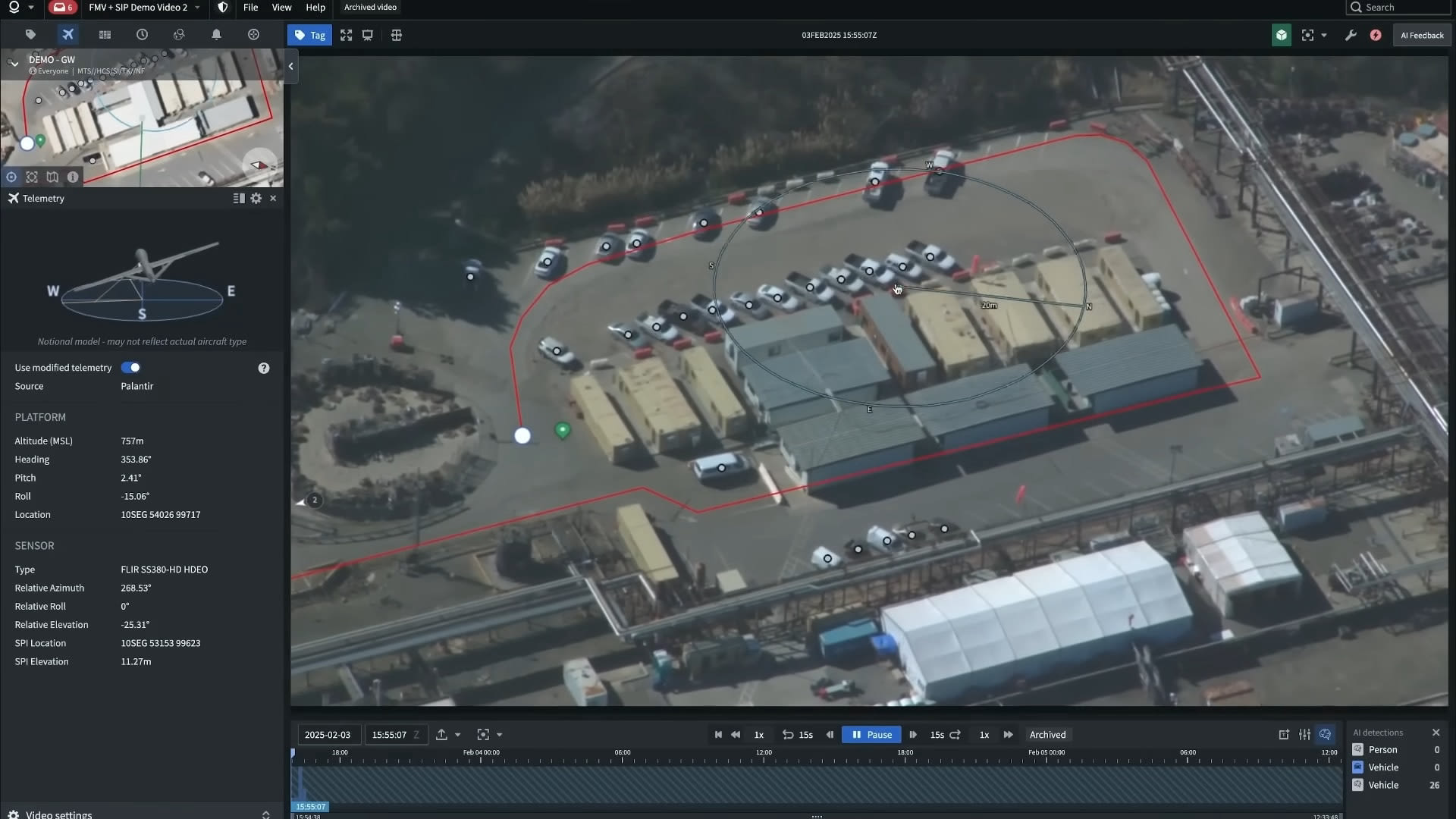

The Pentagon is thoroughly integrating AI. In January 2026, the DoW announced their “AI-First” strategy to rapidly adopt frontier AI. In March, they demonstrated Project Maven, a system that aggregates a wide array of information and AI recommendations and can control military forces. This enables the military to manage a complete “kill chain” (the steps of choosing a target, planning an attack, and using lethal force) within a single piece of AI-integrated software.

AI greatly improves data processing efficiency. CSET reports that Project Maven has enabled 20 people to do military targeting work that previously required a staff of 2,000. Project Maven’s AI allows for automated processing of data from a disparate array of sources, including satellite and drone surveillance, social media feeds, radar, and GPS data, much more efficiently than previously possible.

This is part of a broader trend of warfare automation. In the Russo-Ukrainian war, autonomous drone warfare has been highly prevalent. In AI Frontiers, David Kirichenko argued that AI is significantly degrading the norms of warfare, leading to more dangerous and unethical combat in Ukraine.

Fully autonomous weapons are central to the Anthropic-Pentagon dispute. Anthropic, the company making the AI model used in Project Maven, has clashed with the DoW over the use of Anthropic’s AI in autonomous kill chains. Anthropic ultimately refused to allow their AI in autonomous kill chains due to concerns that it was not yet reliable enough to avoid harming Americans. The DoW cancelled their contract with Anthropic and eventually agreed to a contract with OpenAI that allows autonomous kill chains.

Pro-Human Open Letter

A new open letter advocates for restrictions on AI development and usage in an effort to preserve human values. Signed by a large bipartisan coalition of individuals and organizations, the letter calls for prioritizing humanity over AI despite increasing incentives towards automation, replacement, and rushed development.

The letter outlines five high-level principles:

- Keeping Humans in Charge: Maintaining human authority over AIs, having the ability to shut them down, and avoiding specific dangerous technologies.

- Avoiding Concentration of Power: Avoiding AI monopolies, and sharing benefits of AI broadly.

- Protecting the Human Experience: Defending children and families from manipulative AIs, clearly labeling AI bots, and avoiding addictive AI product design.

- Human Agency and Liberty: Making trustworthy AIs that empower humans instead of replacing them.

- Responsibility and Accountability for AI Companies: Ensuring AI developers are held responsible for harms caused by their AI, and enforcing independent safety standards.

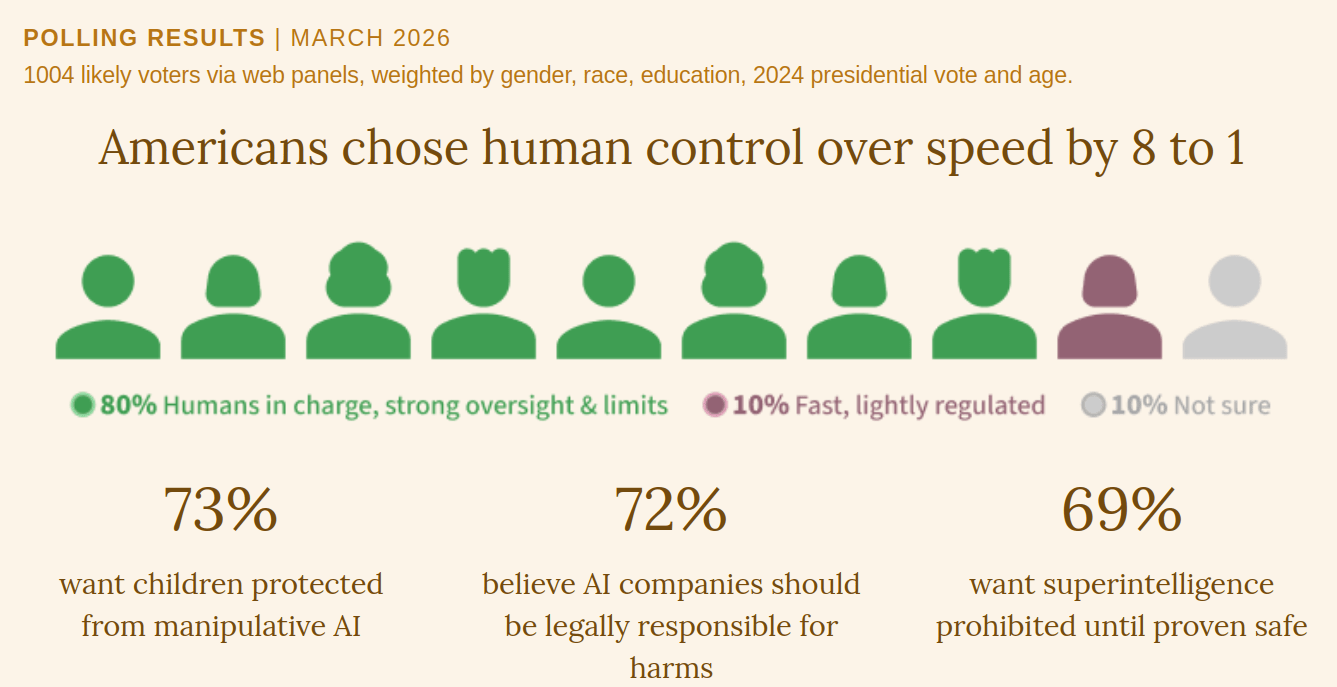

The declaration brings together people across numerous divides. So far, more than 40 organizations have signed the declaration, including faith groups, industry groups, and research institutes. Among the letter’s individual endorsers are Nobel prize-winning academics, artists, religious leaders, and public figures from both ends of the political spectrum. The declaration also includes recent polling showing that the American public favors safety over speed in AI development and supports other values in the letter.

In Other News

Government

- Oregon passed SB 1546, requiring companies to disclose to users when they are talking to an AI chatbot rather than a human.

- Axios reports that the White House may be preparing an executive order to ban Anthropic products from government use, as part of the ongoing conflict between Anthropic and the US Department of War.

Industry

- Meta signed a deal with Nebius to spend up to $27 billion on AI infrastructure over five years.

- OpenAI may be abandoning their Abilene datacenter, a supercomputer construction project initiated as part of Project Stargate.

- Jensen Huang said NVIDIA was restarting production of H200 chips for export to China.

- Anthropic’s Claude Partner Network launched, investing $100 million into supporting corporate partners transitioning into AI use.

- OpenAI released new research on defending against prompt injections.

- Following a wave of high-level departures at xAI, Elon Musk posted on X “xAI was not built right first time around, so is being rebuilt from the foundations up.”

- Alibaba’s ROME AI agent reportedly hacked its way out of its environment during training and started mining cryptocurrency.